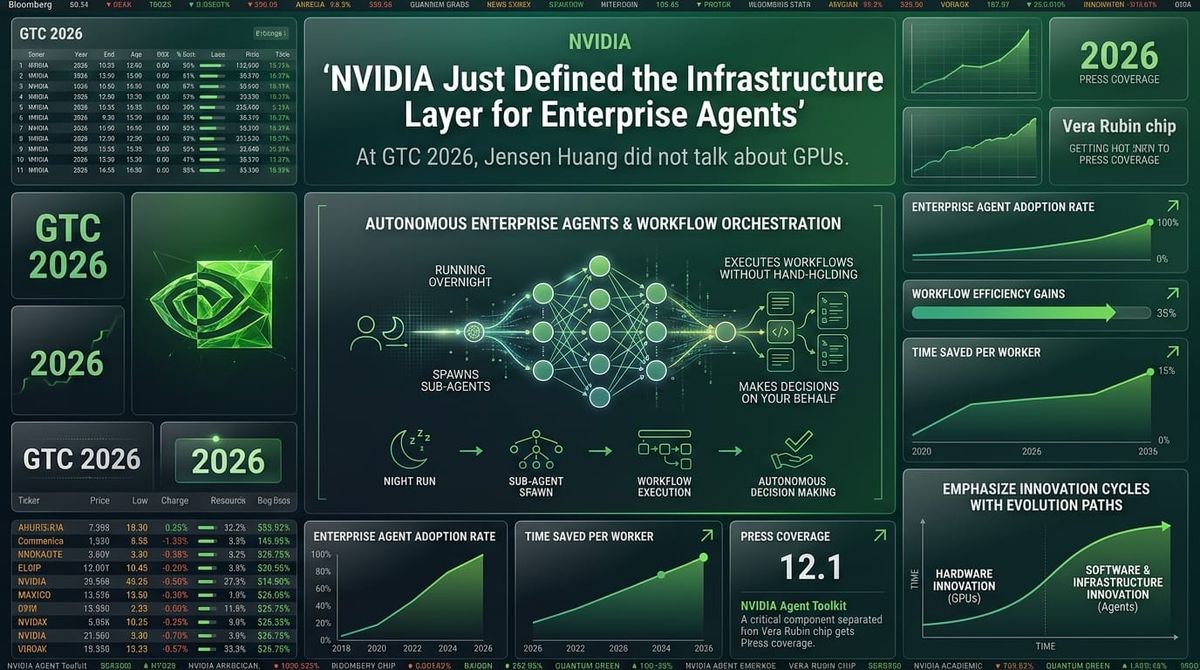

NVIDIA Just Defined the Infrastructure Layer for Enterprise Agents

At GTC 2026, Jensen Huang did not talk about GPUs. He talked about a new computing model: AI agents that run overnight, spawn sub-agents, execute workflows without human hand-holding, and make decisions on your behalf while you sleep. The headline chip, Vera Rubin, got the press coverage. But the announcement that matters most to enterprise software is something called the NVIDIA Agent Toolkit.

This is not a model release. It is a claim on the entire software stack that enterprise agents run on.

What Was Actually Announced

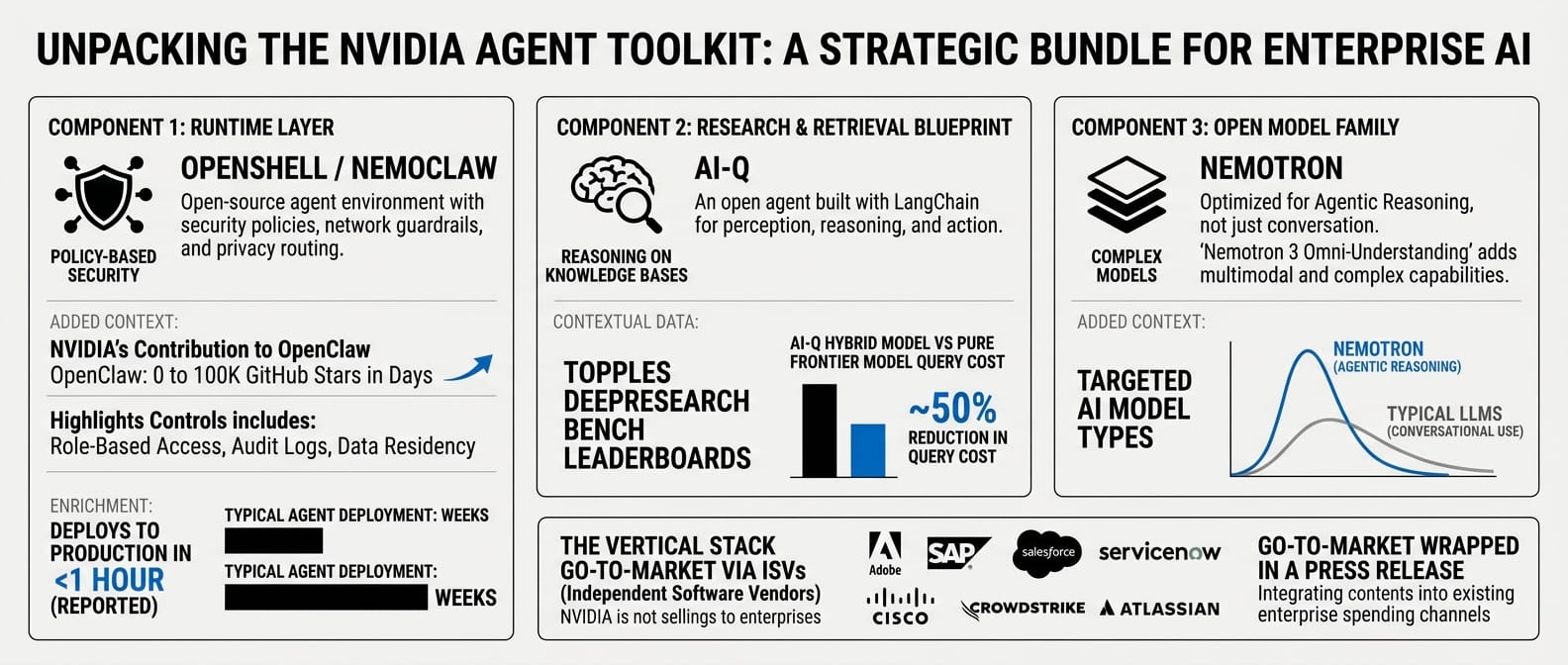

The Agent Toolkit bundles three components that, taken individually, are interesting. Together, they are a vertical stack play.

OpenShell / NemoClaw is the runtime layer — an open-source environment that runs autonomous agents with policy-based security, network guardrails, and privacy routing. NVIDIA positioned it as a contribution to OpenClaw, the open-source agent framework that went from zero to 100,000 GitHub stars in days after launch. NemoClaw wraps OpenClaw in enterprise-grade controls: role-based access, audit logs, data residency enforcement. The pitch is that it runs inside corporate infrastructure, exposes nothing externally, and reportedly gets agents to production in under an hour.

AI-Q is the research and retrieval blueprint — an open agent that sits on top of enterprise knowledge bases and lets agents perceive, reason, and act on internal data. Built with LangChain, it currently tops the DeepResearch Bench leaderboards using a hybrid model approach that reportedly cuts query costs in half versus pure frontier model approaches.

Nemotron is the open model family underneath all of it — optimized specifically for agentic reasoning rather than conversational use. The Nemotron 3 omni-understanding models add multimodal and complex reasoning on top of the base language capability.

The distribution strategy is telling: NVIDIA is not selling this to enterprises directly. It is selling it to the ISVs those enterprises already pay. Adobe, SAP, Salesforce, ServiceNow, Cisco, CrowdStrike, Atlassian, and a dozen others announced integrations at GTC. That is not a partner list. That is a go-to-market wrapped in a press release.

What the Agent-Native Lens Reveals

Most GTC coverage is framing this as "NVIDIA moves into software." That is the wrong frame.

The more interesting question is: what does it mean when the company that makes the compute layer also owns the agent runtime?

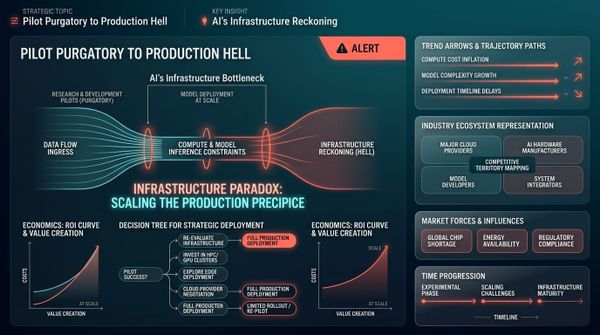

The answer has real implications for the agent-native maturity spectrum. Right now, enterprise software vendors are at very different points in their agent readiness. Some have full CLI and API surfaces that can be configured without touching a GUI. Others remain locked in click-ops, where every workflow change requires a human at a dashboard. NemoClaw and AI-Q do not change that underlying architecture problem — but they do raise the floor for every vendor who adopts them.

When ServiceNow's Autonomous Workforce agents run on NVIDIA Agent Toolkit, and SAP integrates Nemotron into its workflows, those platforms inherit OpenShell's security and governance layer. That is meaningful for enterprises trying to deploy agents without building their own trust infrastructure from scratch.

The analogy Jensen reached for was Windows — a standard environment that makes agent software portable across hardware. That framing is ambitious, and probably self-serving, but it is not wrong as a directional bet. The agent runtime layer is genuinely underdeveloped. Most enterprise teams assembling agent stacks today are stitching together a language model, a retrieval system, an orchestration framework, a security layer, and a runtime from vendors that never designed their products to interoperate. NVIDIA is betting that collapsing that complexity has enough value to justify a platform play.

How It Actually Works

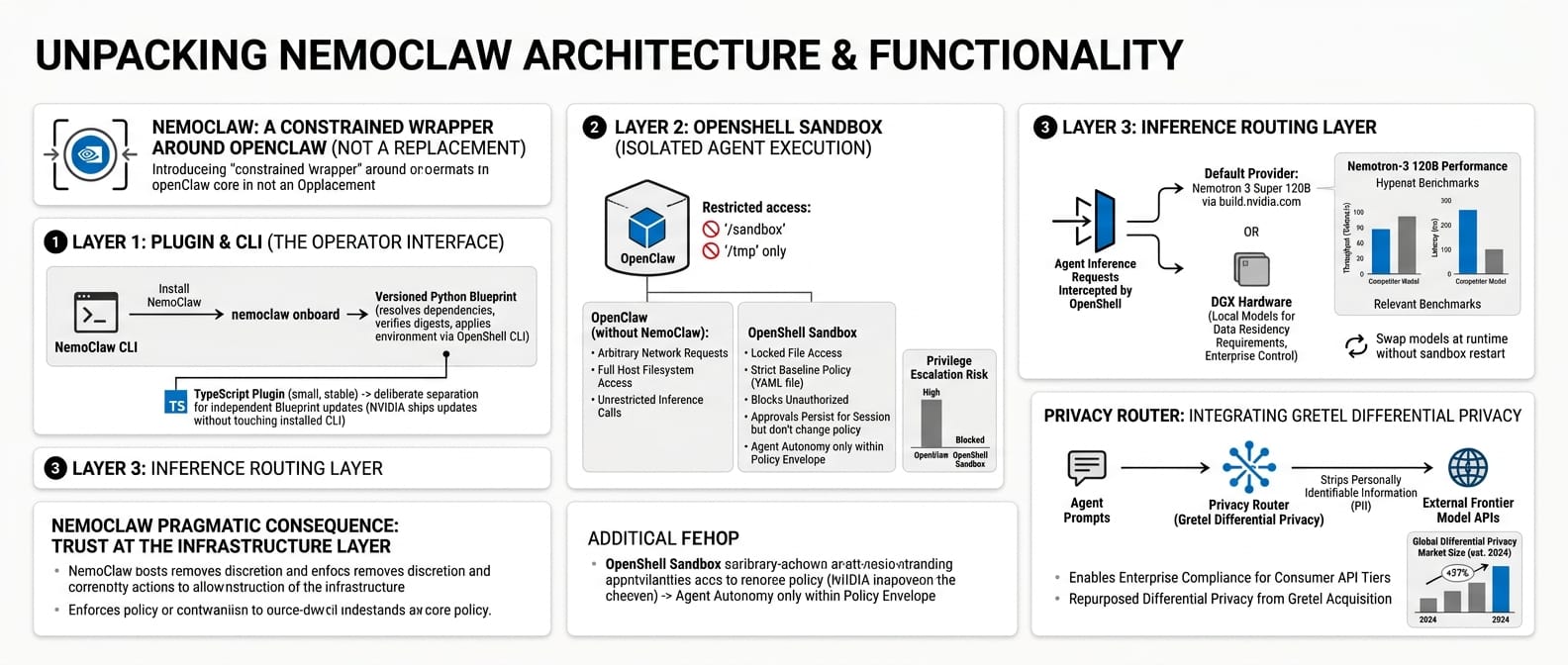

NemoClaw is best understood as a constrained wrapper around OpenClaw, not a replacement for it. The architecture has three distinct layers.

The plugin and CLI are the operator interface. You install NemoClaw, run nemoclaw onboard, and a versioned Python blueprint handles the rest — resolving dependencies, verifying digests, and applying the environment definition through the OpenShell CLI. The separation between the TypeScript plugin (small, stable) and the blueprint (versioned, independently updatable) is deliberate: NVIDIA can ship environment updates without touching the CLI your team already has installed.

The OpenShell sandbox is where the agent actually runs. OpenClaw executes inside an isolated container. File access is locked to /sandbox and /tmp. Network egress starts from a strict baseline policy defined in a YAML file — if the agent tries to reach an endpoint not on the allowlist, OpenShell blocks it and surfaces the request to the operator for approval in the terminal UI. That approval persists for the session but does not modify the baseline policy. The agent cannot escalate privileges or reach arbitrary external services without explicit operator sign-off.
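The approval behavior described above (a fixed baseline plus session-scoped exceptions) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not OpenShell's actual implementation: the class `EgressPolicy`, its method names, and the host `example.org` are all hypothetical, and the real policy lives in a YAML file whose schema is not shown here.

```python
from dataclasses import dataclass, field


@dataclass
class EgressPolicy:
    """Hypothetical model: an immutable baseline plus session-only approvals."""

    baseline: frozenset[str]  # stands in for the allowlist loaded from the policy file
    session_approved: set[str] = field(default_factory=set)
    pending: list[str] = field(default_factory=list)

    def request(self, host: str) -> bool:
        """Return True if egress may proceed; otherwise queue the host for review."""
        if host in self.baseline or host in self.session_approved:
            return True
        if host not in self.pending:
            self.pending.append(host)  # surfaced to the operator, not silently dropped
        return False

    def approve(self, host: str) -> None:
        """Operator sign-off: recorded for this session, baseline left untouched."""
        if host in self.pending:
            self.pending.remove(host)
        self.session_approved.add(host)

    def new_session(self) -> "EgressPolicy":
        """A fresh session starts again from the baseline alone."""
        return EgressPolicy(baseline=self.baseline)


policy = EgressPolicy(baseline=frozenset({"build.nvidia.com"}))
print(policy.request("example.org"))   # blocked and queued for approval
policy.approve("example.org")
print(policy.request("example.org"))   # allowed for the rest of this session
print(policy.new_session().request("example.org"))  # blocked again in a new session
```

Keeping the baseline in a `frozenset` makes the key property explicit: nothing that happens during a session can widen the policy that the next session starts from.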

The inference routing layer sits between the agent and the model. Inference requests from the agent never leave the sandbox directly — OpenShell intercepts every call and routes it through a gateway to the configured provider, by default Nemotron 3 Super 120B via build.nvidia.com. You can swap models at runtime without restarting the sandbox. For enterprises with data residency requirements, inference can be routed to local models running on DGX hardware rather than NVIDIA cloud.
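To make the routing idea concrete, here is a rough sketch of a gateway that forwards every inference call to whichever provider is currently configured and lets the operator switch providers while the agent keeps running. Everything in it is an assumption for illustration: `InferenceGateway`, `switch`, and the two stub providers are invented names, and the stubs return canned strings rather than calling any real model endpoint.

```python
from typing import Callable, Dict

# A provider is modeled as a plain function from prompt to completion.
Provider = Callable[[str], str]


class InferenceGateway:
    """Hypothetical gateway: the agent calls complete() and never sees the provider."""

    def __init__(self, providers: Dict[str, Provider], default: str) -> None:
        if default not in providers:
            raise KeyError(f"unknown provider: {default}")
        self._providers = dict(providers)
        self._active = default

    @property
    def active(self) -> str:
        return self._active

    def switch(self, name: str) -> None:
        """Swap the active provider at runtime; no restart of the caller is needed."""
        if name not in self._providers:
            raise KeyError(f"unknown provider: {name}")
        self._active = name

    def complete(self, prompt: str) -> str:
        """Route the request to the currently active provider."""
        return self._providers[self._active](prompt)


def hosted_stub(prompt: str) -> str:
    # Stand-in for a hosted model endpoint (illustrative only).
    return f"[hosted] {prompt}"


def local_stub(prompt: str) -> str:
    # Stand-in for a model served on local hardware (illustrative only).
    return f"[local] {prompt}"


gateway = InferenceGateway({"hosted": hosted_stub, "local": local_stub}, default="hosted")
print(gateway.complete("summarize the incident"))
gateway.switch("local")  # e.g. to satisfy a data residency requirement
print(gateway.complete("summarize the incident"))
```

The design point is the indirection: because the agent only ever holds a reference to the gateway, changing where inference runs is an operator decision rather than something the agent can influence.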

The practical consequence of this architecture is that trust is enforced at the infrastructure layer, not delegated to the agent itself. An OpenClaw agent running without NemoClaw can make arbitrary network requests, access the host filesystem, and call any inference endpoint it chooses. NemoClaw removes that discretion. The agent still has autonomy over how it completes tasks — it just cannot reach beyond the policy envelope to do so.

One detail worth flagging: the privacy router inside OpenShell draws on NVIDIA's acquisition of Gretel, a synthetic data company. Its differential privacy technology is repurposed here to strip personally identifiable information from prompts before they are sent to external frontier model APIs. For enterprises without data processing agreements with their LLM providers — which describes most teams using consumer API tiers — this addresses a real compliance gap that typically goes unmanaged.
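As a rough picture of where such a filter sits, the sketch below redacts two obvious kinds of PII from a prompt before it would be forwarded. It deliberately swaps in a much simpler technique: plain regular-expression matching, not differential privacy, and not anything taken from the OpenShell or Gretel code. The patterns, placeholder tokens, and the `redact` function name are assumptions chosen for illustration, and the phone pattern only covers one common US-style format.

```python
import re

# Simplified patterns for illustration; real PII detection needs far broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def redact(prompt: str) -> str:
    """Replace matched emails and phone numbers with placeholder tokens."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    return US_PHONE.sub("[PHONE]", prompt)


print(redact("Contact jane.doe@example.com or 555-123-4567."))
# prints: Contact [EMAIL] or [PHONE].
```

Running the filter inside the runtime, rather than trusting each agent or skill to do it, is what turns redaction from a convention into an enforced step.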

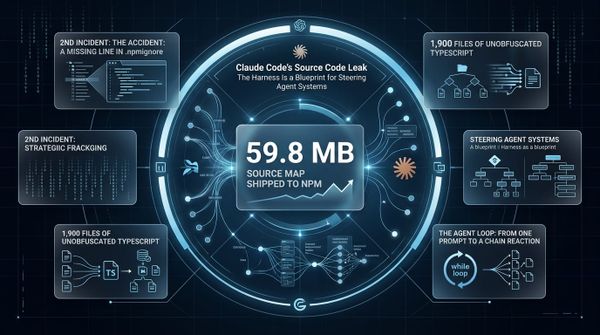

OpenClaw and NemoClaw: The Relationship

To understand what NVIDIA actually shipped, you need to understand OpenClaw first — and the relationship between the two is less obvious than the GTC framing suggests.

OpenClaw was published in November 2025 by Austrian developer Peter Steinberger, initially under the name "Clawdbot." It went viral in January 2026, reaching 100,000 GitHub stars and 2 million visitors in its first week — reportedly the fastest-growing open-source repository in GitHub history. The core concept is persistent autonomous agents, or "claws," that can navigate file systems, write and execute code, spawn sub-agents, retain state across sessions, connect to external tools, and work continuously without human supervision. OpenAI hired Steinberger in February 2026, though he retains involvement with the project, which remains genuinely independent and open-source.

The security problems OpenClaw created were not hypothetical. Malicious "skills" targeting crypto users appeared on ClawHub, the community skills marketplace. At least one widely reported incident involved an agent deleting emails in a user's personal inbox despite explicit instructions not to take unsanctioned actions. These are not edge cases — they are predictable failure modes of any system that gives software unconstrained access to a host environment.

NemoClaw does not replace OpenClaw. It contains it. The full OpenClaw runtime runs inside NemoClaw's sandbox, with the same capabilities intact. What changes is the execution context. OpenClaw without NemoClaw runs with whatever permissions the host user has — which in practice means it can reach everything. NemoClaw wraps that in a container with an explicit allowlist for network endpoints, filesystem paths locked to /sandbox and /tmp, inference calls intercepted and routed through a gateway, and syscall restrictions that prevent privilege escalation.
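The filesystem part of that envelope can be illustrated with a small path check. This is only a sketch of the rule "paths must resolve under /sandbox or /tmp": the function name `is_path_allowed` is hypothetical, the check is purely lexical (it does not follow symlinks), and a real sandbox would enforce the restriction at the container or kernel level rather than in application code.

```python
import posixpath
from pathlib import PurePosixPath

# The two roots named in the article.
ALLOWED_ROOTS = (PurePosixPath("/sandbox"), PurePosixPath("/tmp"))


def is_path_allowed(path: str) -> bool:
    """Return True only if the normalized absolute path is inside an allowed root."""
    # normpath collapses "." and ".." so "/sandbox/../etc" cannot slip through.
    normalized = PurePosixPath(posixpath.normpath(path))
    if not normalized.is_absolute():
        return False  # relative paths are rejected rather than guessed at
    return any(
        normalized == root or root in normalized.parents
        for root in ALLOWED_ROOTS
    )


print(is_path_allowed("/sandbox/work/notes.txt"))  # True
print(is_path_allowed("/sandbox/../etc/passwd"))   # False
print(is_path_allowed("/sandboxed/file"))          # False
```

Comparing path components instead of string prefixes matters here: a naive `startswith("/sandbox")` test would wrongly accept `/sandboxed/file`.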

The distinction matters for enterprise evaluation: you are not choosing between OpenClaw and NemoClaw as competing agent frameworks. You are choosing whether to run OpenClaw in an uncontrolled environment or a policy-governed one. For personal experimentation, the former is fine. For any deployment that touches business data, customer information, or production systems, the latter is the only viable option.

OpenShell is also designed to work beneath other coding agents beyond OpenClaw — it is not a proprietary wrapper for a single product. That is central to NVIDIA's ISV positioning: the runtime as infrastructure that any agent framework can adopt. Whether that plays out in practice depends on whether other agent frameworks see value in adopting NVIDIA's security layer or build their own. That competitive dynamic is still unresolved.

What Enterprises Are Actually Building With It

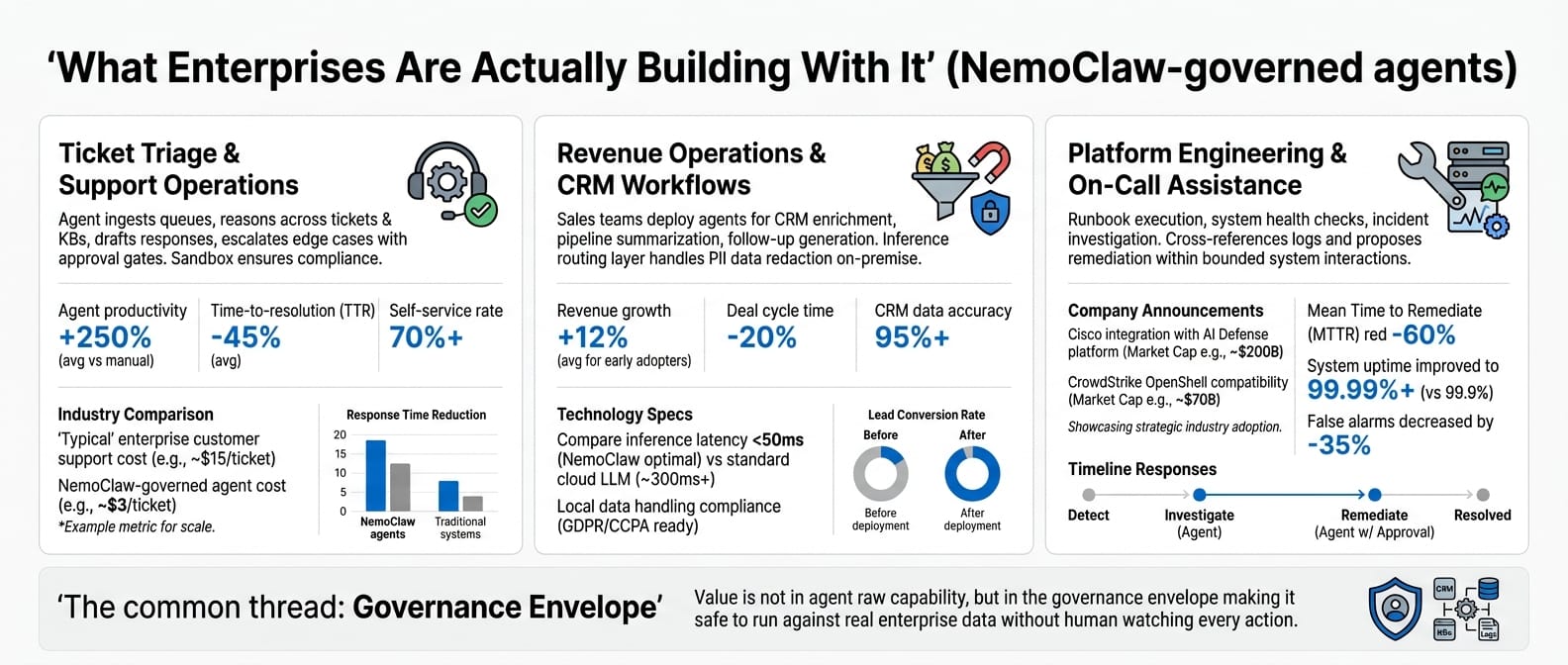

The use cases breaking through in early enterprise adoption cluster around three patterns.

Ticket triage and support operations is the most common starting point. Agents ingest support queues, reason across historical tickets and knowledge bases, draft responses, and escalate edge cases to humans — with approval gates inserted before any sensitive action like modifying account records or initiating refunds. The sandbox ensures the agent cannot reach systems outside the defined policy envelope while it works.
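The approval-gate pattern in this workflow is simple enough to sketch. The example below assumes a hypothetical set of sensitive action names and a caller-supplied `approve` callback that represents the human reviewer; none of these names come from NemoClaw or any vendor integration.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical action names that require human sign-off before they run.
SENSITIVE_ACTIONS = {"modify_account", "issue_refund"}


@dataclass
class ProposedAction:
    name: str
    ticket_id: str


def execute_with_gate(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
    run: Callable[[ProposedAction], None],
) -> str:
    """Run routine actions directly; hold sensitive ones until a human approves."""
    if action.name in SENSITIVE_ACTIONS and not approve(action):
        return "escalated"  # left for a human; nothing was executed
    run(action)
    return "executed"


executed = []
deny_all = lambda action: False  # a reviewer who rejects every sensitive request

print(execute_with_gate(ProposedAction("draft_reply", "T-1"), deny_all, executed.append))
print(execute_with_gate(ProposedAction("issue_refund", "T-2"), deny_all, executed.append))
print([a.ticket_id for a in executed])
```

With a reviewer that denies everything, the draft reply still goes through while the refund is escalated, which is the behavior the triage pattern depends on.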

Revenue operations and CRM workflows are the second pattern. Sales teams are deploying NemoClaw-governed agents for CRM enrichment, pipeline summarization, and follow-up generation — tasks that require reading and writing CRM data, querying external sources, and generating communications, all of which benefit from explicit network and data access controls. The inference routing layer matters here because CRM data frequently contains personally identifiable information that should not leave the corporate network unredacted.

Platform engineering and on-call assistance is the third. It covers runbook execution, system health checks, and on-call agent support, where an agent can investigate an incident, cross-reference logs, and propose remediation steps within a bounded set of allowed system interactions. Cisco's announced integration with its AI Defense platform and CrowdStrike's OpenShell compatibility work both point at exactly this pattern.

The common thread across all three is that the value is not in the agent's raw capability — OpenClaw already had that. The value is in the governance envelope that makes it safe to run the agent against real enterprise data without a human watching every action.

What to Watch

Three things will determine whether NVIDIA's agent stack claim holds up over the next 12 months.

First, whether the ISV adoptions are real integrations or press-release partnerships. SAP and Salesforce announcing at GTC is not the same as enterprise customers running production workflows on Nemotron. The proof will be in deployment data, not keynote slides.

Second, whether OpenShell's governance model is sufficient for regulated industries. The Futurum analysts made the point clearly: NemoClaw is a necessary component of agent trust, not a complete solution. Healthcare, financial services, and regulated manufacturing will need more than a privacy router and RBAC before they route sensitive data through an agent runtime they do not fully control.

Third, whether the hardware lock-in question resolves cleanly. The open-source framing for OpenShell and NemoClaw is genuine — you can inspect the code, run it on non-NVIDIA hardware, contribute back. But an open-source agent stack that is optimized for NVIDIA inference chips creates a gravitational pull toward NVIDIA infrastructure over time. That is not a trap, but enterprise architects should plan for it deliberately rather than discover it two years into deployment.

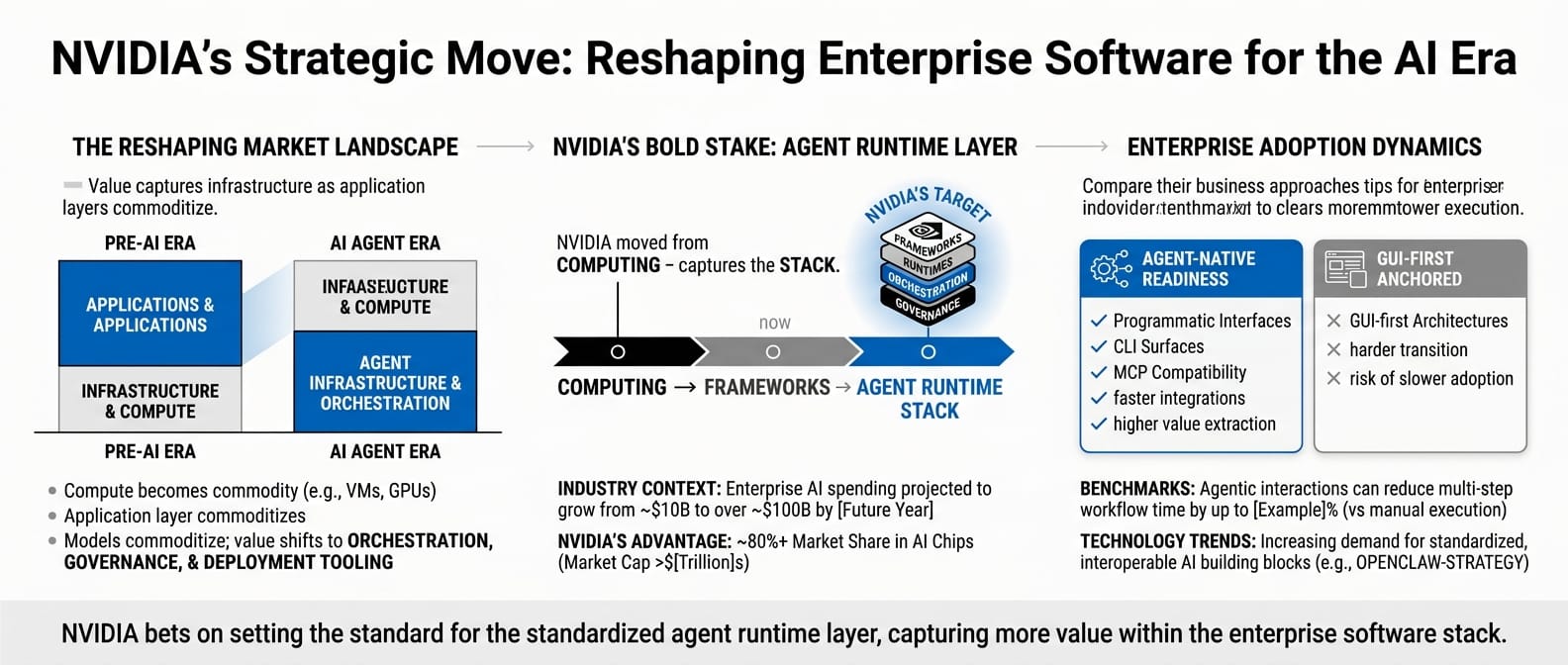

The Bigger Picture

NVIDIA's move here is coherent with a broader pattern that is reshaping enterprise software. The infrastructure layer captures more value as the application layer commoditizes. Compute became a commodity, so NVIDIA moved up to frameworks and runtimes. Models are commoditizing, so the value shifts to orchestration, governance, and deployment tooling.

The enterprise software vendors who are already agent-native — who have invested in programmatic interfaces, CLI surfaces, and MCP compatibility — will integrate with this stack faster and extract more value from it. The ones still anchored in GUI-first architectures will face a harder transition.

Jensen Huang said at GTC that every company needs an OpenClaw strategy. That is marketing language. The real statement underneath it is this: the agent runtime layer is being standardized, and whoever sets the standard captures the stack.

NVIDIA just placed a very large bet on being that whoever.