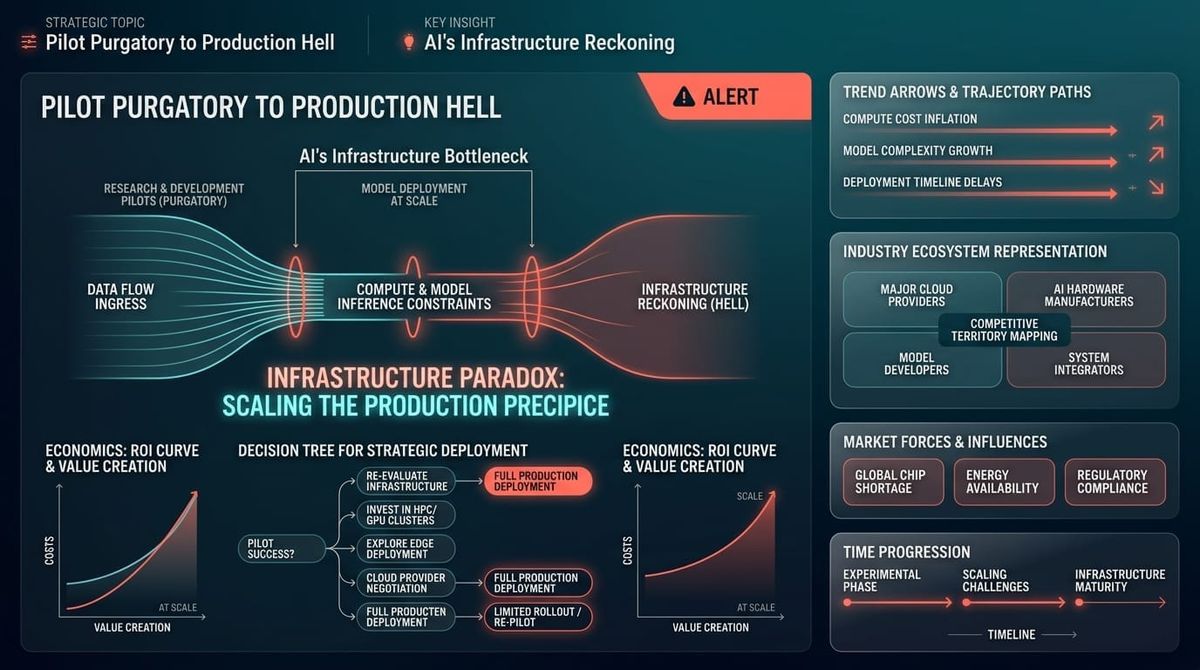

Pilot Purgatory to Production Hell: AI's Infrastructure Reckoning

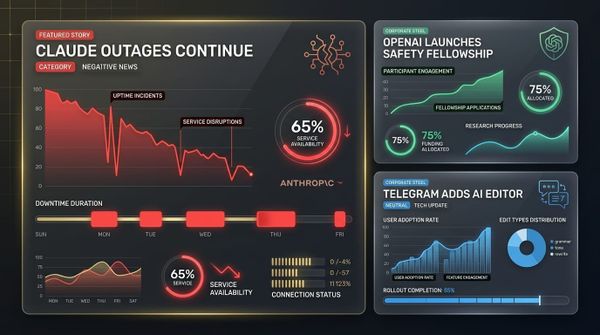

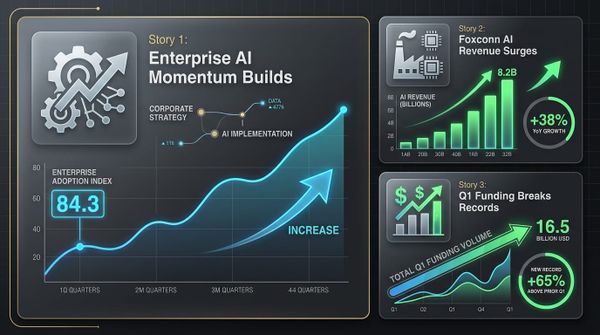

The enterprise AI gold rush just hit a wall. While Q1 2026 shattered all funding records with $300 billion flowing to AI startups, the infrastructure reality tells a different story. Claude suffered back-to-back outages. ServiceNow proved AI monetization works with a $1 billion run rate, but most companies remain stuck in "Pilot Purgatory." OpenAI bought a media company to control narratives as public skepticism grows. The pattern is clear: AI's scaling success is exposing fundamental gaps in reliability, governance, and business models that could derail the entire enterprise adoption curve.

This isn't a typical growing-pains story. This is what happens when venture capital enthusiasm collides with operational reality. The companies solving these infrastructure problems now will own the next wave of enterprise AI. Those ignoring them will watch their pilots fail to scale.

The Story

The Setup

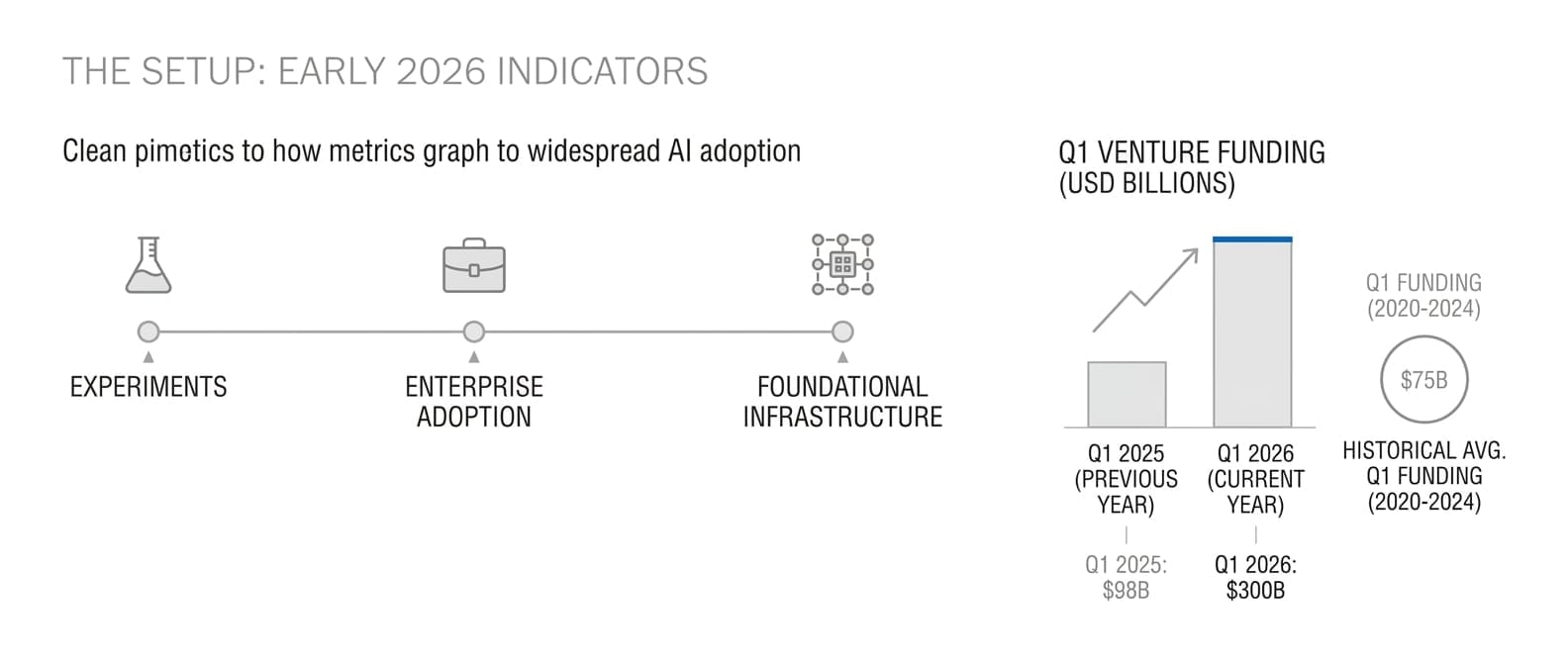

The narrative through early 2026 was triumphant: AI had crossed the enterprise chasm. Models were getting better, costs were dropping, and Fortune 500 companies were moving beyond experiments. Q1 venture funding of $300 billion seemed to validate that AI was no longer experimental technology but foundational infrastructure. Enterprise buyers believed the hard problems were solved.

The Shift

Then reality hit. Claude's consecutive outages exposed how fragile AI infrastructure remains as demand scales. Microsoft launched three proprietary models specifically to reduce dependence on OpenAI, a clear signal that even partnerships with market leaders aren't reliable enough for enterprise workloads. OpenAI's acquisition of a tech media company revealed growing anxiety about public perception, and its decision to dissolve internal safety teams in favor of external researchers showed governance gaps at the industry's flagship company.

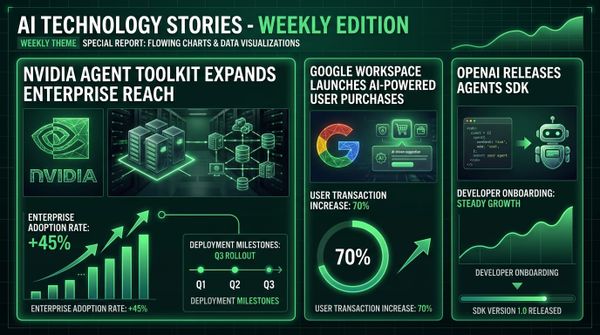

Meanwhile, ServiceNow demonstrated what works: its Now Assist platform reached a $1 billion run rate faster than any product in company history by solving specific workflow problems with measurable ROI. But NVIDIA's Agent Toolkit signing 17 enterprise partners reveals the real game: platform control. This isn't about models anymore. It's about who owns the layer between AI capabilities and business applications.

The Pattern

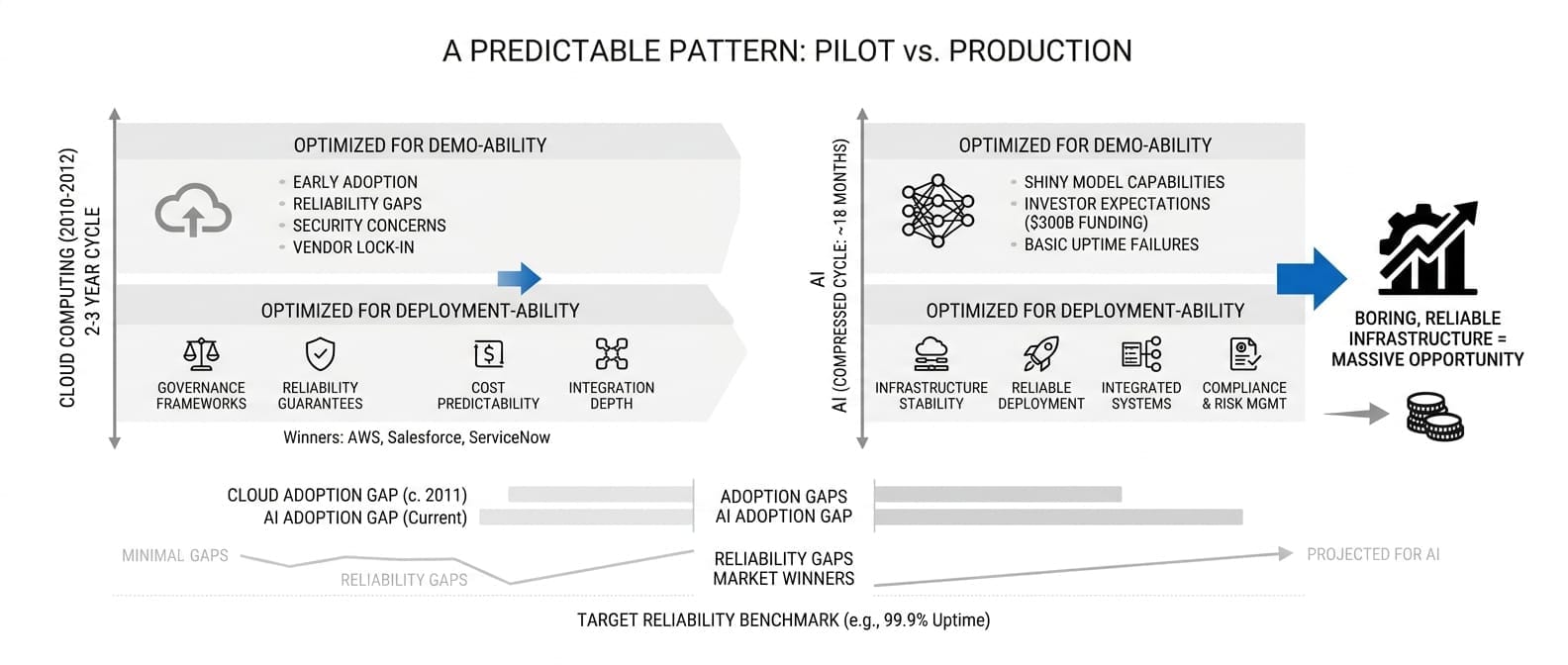

We've seen this movie before. Cloud computing went through identical growing pains around 2010-2012 when early adoption exposed reliability gaps, security concerns, and vendor lock-in risks. The companies that solved infrastructure problems—AWS, Salesforce, ServiceNow—became the winners. Those that ignored them became footnotes.

AI is following the same playbook, but compressed into an 18-month cycle instead of five years. The gap between investor expectations ($300B in funding) and operational reality (basic uptime failures) creates a massive opportunity for companies that can deliver boring, reliable infrastructure while everyone else chases shiny model capabilities.

The shift from pilot to production requires different capabilities: governance frameworks, reliability guarantees, cost predictability, and integration depth. Most AI companies optimized for demo-ability, not deployment-ability.

The Stakes

The window for fixing these problems is shrinking fast. Enterprise IT buyers are moving from curiosity spending to disciplined procurement. If your AI initiative can't show measurable business value by Q3 2026, budgets get cut. If your vendor can't guarantee uptime and governance controls, contracts don't get renewed.

The companies that crack the infrastructure code will capture disproportionate value as enterprise AI moves from experimental to operational. Those that don't will find themselves competing on commodity model capabilities in an increasingly crowded market.

What This Means For You

For CTOs

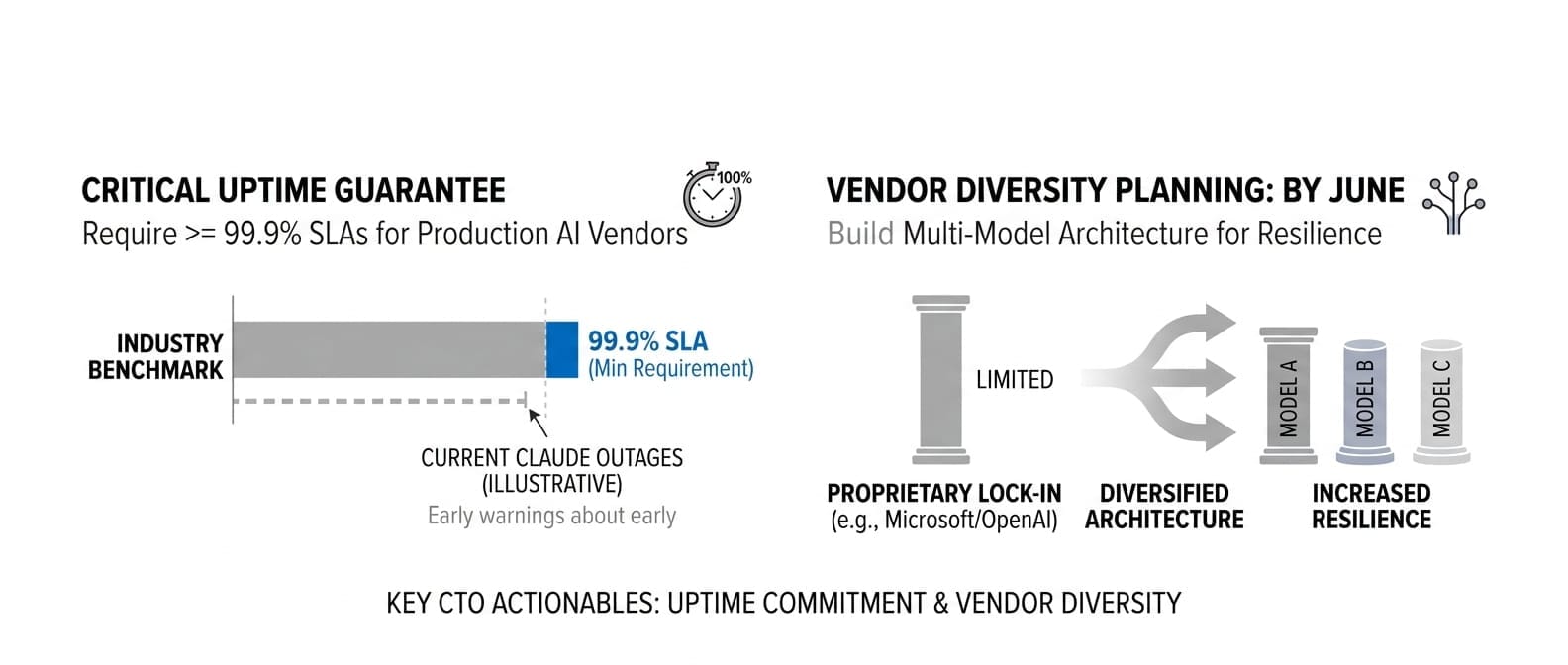

- Demand uptime guarantees now: Any AI vendor without 99.9% SLA commitments shouldn't be in production workflows. Claude's outages are early warnings, not isolated incidents.

- Build vendor diversity by June: Microsoft's proprietary models show even OpenAI partnerships aren't bulletproof. Plan for multi-model architectures before you're locked in.

- Establish AI governance frameworks by Q3: ServiceNow's success comes from solving workflow problems, not model capabilities. Focus on integration and control, not raw performance.
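To make the first two items concrete, here is a minimal Python sketch under stated assumptions. It converts an SLA percentage into a monthly downtime budget and tries a list of model providers in order until one succeeds. The names `downtime_budget_minutes`, `complete_with_fallback`, and the stub providers are hypothetical and exist only for illustration; they are not any vendor's API.

```python
from __future__ import annotations

from typing import Callable, Sequence


def downtime_budget_minutes(sla_percent: float, days: int = 30) -> float:
    """Minutes of downtime an SLA permits over a window of `days`."""
    total_minutes = days * 24 * 60
    return total_minutes * (1 - sla_percent / 100)


class AllProvidersFailed(Exception):
    """Raised when every configured provider raised an error."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, call) pair in order.

    Returns (provider_name, response) from the first provider that succeeds.
    """
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # assumption: real code would catch narrower errors
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for real vendor clients.
def primary_model(prompt: str) -> str:
    raise TimeoutError("primary provider unavailable")


def secondary_model(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    # A 99.9% SLA allows roughly 43.2 minutes of downtime per 30 days.
    print(round(downtime_budget_minutes(99.9), 1))
    print(complete_with_fallback(
        "summarize this ticket",
        [("primary", primary_model), ("secondary", secondary_model)],
    ))
```

A production version would likely add timeouts, retries with backoff, and per-provider prompt adaptation. The point of the sketch is only that the routing layer, not any single vendor, owns the availability decision.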

For AI Product Leaders

- Shift metrics from model performance to business outcomes: ServiceNow's $1B run rate proves enterprises pay premiums for measurable ROI, not benchmark scores.

- Target vertical-specific use cases: IFS's asset-based pricing model shows how to escape per-seat limitations. Industry-specific solutions command higher margins.

- Plan for the reliability tax: Google's TurboQuant algorithm addresses infrastructure efficiency while competitors struggle with basic uptime. Technical optimization becomes a competitive advantage.

For Engineering Leaders

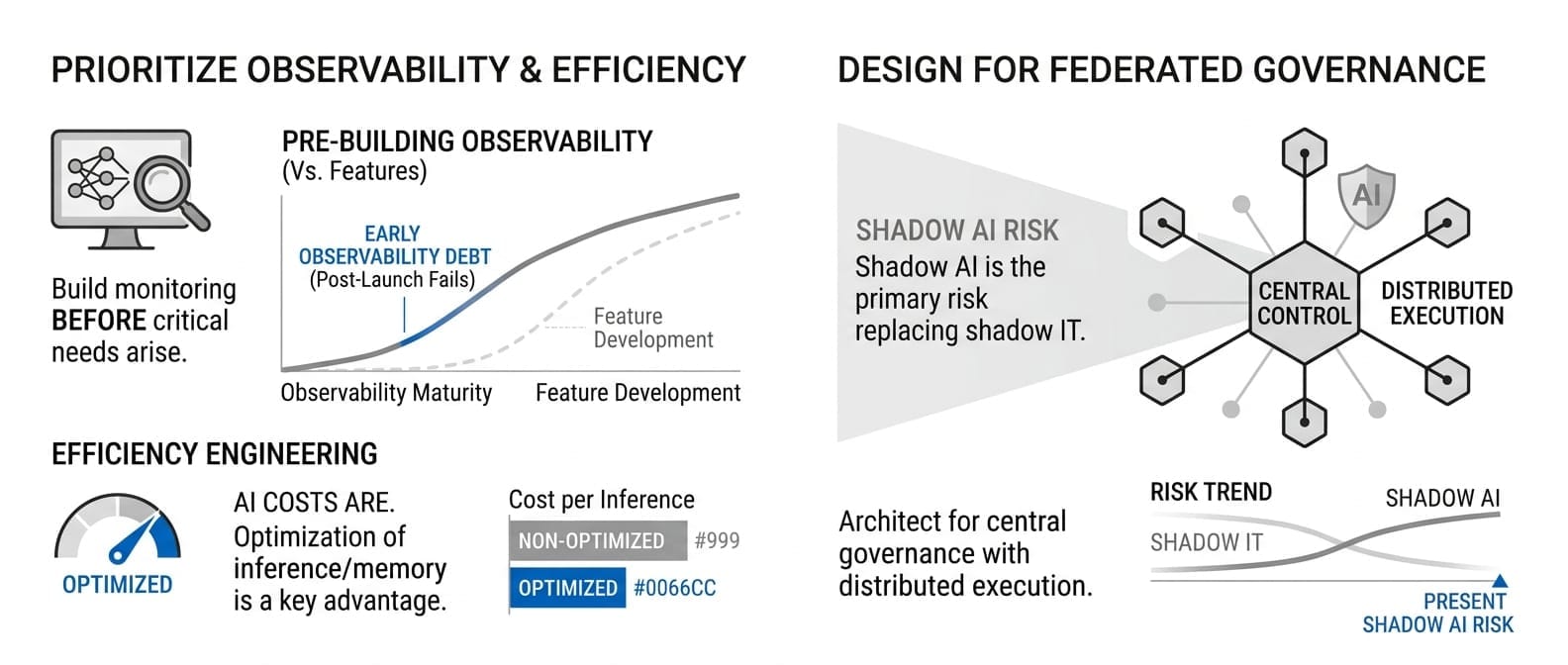

- Prioritize observability over features: AI systems fail differently than traditional software. Build monitoring and debugging capabilities before they're critical.

- Design for federated AI governance: Shadow AI is replacing shadow IT as the primary risk. Architect for central control with distributed execution.

- Invest in efficiency engineering: With AI costs unpredictable, the teams that can optimize inference and memory usage will have significant advantages.
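As one possible starting point for the observability item, the sketch below wraps any model call so that latency and failures are recorded before the call is wired into a product. `CallStats` and `observed` are hypothetical names, and the stub model is a stand-in for a real client; a real deployment would probably export these numbers to an existing metrics system rather than hold them in memory.

```python
from __future__ import annotations

import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class CallStats:
    """In-memory counters for one wrapped model endpoint."""
    latencies_s: list[float] = field(default_factory=list)
    failures: int = 0

    @property
    def calls(self) -> int:
        return len(self.latencies_s)

    @property
    def error_rate(self) -> float:
        return self.failures / self.calls if self.calls else 0.0

    @property
    def max_latency_s(self) -> float:
        return max(self.latencies_s, default=0.0)


def observed(call: Callable[[str], str], stats: CallStats) -> Callable[[str], str]:
    """Return a wrapper that records latency and failures for every call."""
    def wrapper(prompt: str) -> str:
        start = time.perf_counter()
        try:
            return call(prompt)
        except Exception:
            stats.failures += 1
            raise  # recording must not hide the error from the caller
        finally:
            # Runs on both success and failure, so every call is counted.
            stats.latencies_s.append(time.perf_counter() - start)
    return wrapper


# Hypothetical stub that fails on empty prompts.
def stub_model(prompt: str) -> str:
    if not prompt:
        raise ValueError("empty prompt")
    return prompt.upper()


if __name__ == "__main__":
    stats = CallStats()
    model = observed(stub_model, stats)
    model("hello")
    try:
        model("")
    except ValueError:
        pass
    print(stats.calls, stats.failures, stats.error_rate)
```

Latency and error rate are only the baseline. AI-specific signals such as token counts, refusal rates, or output-quality checks would hang off the same wrapper.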

What We're Watching

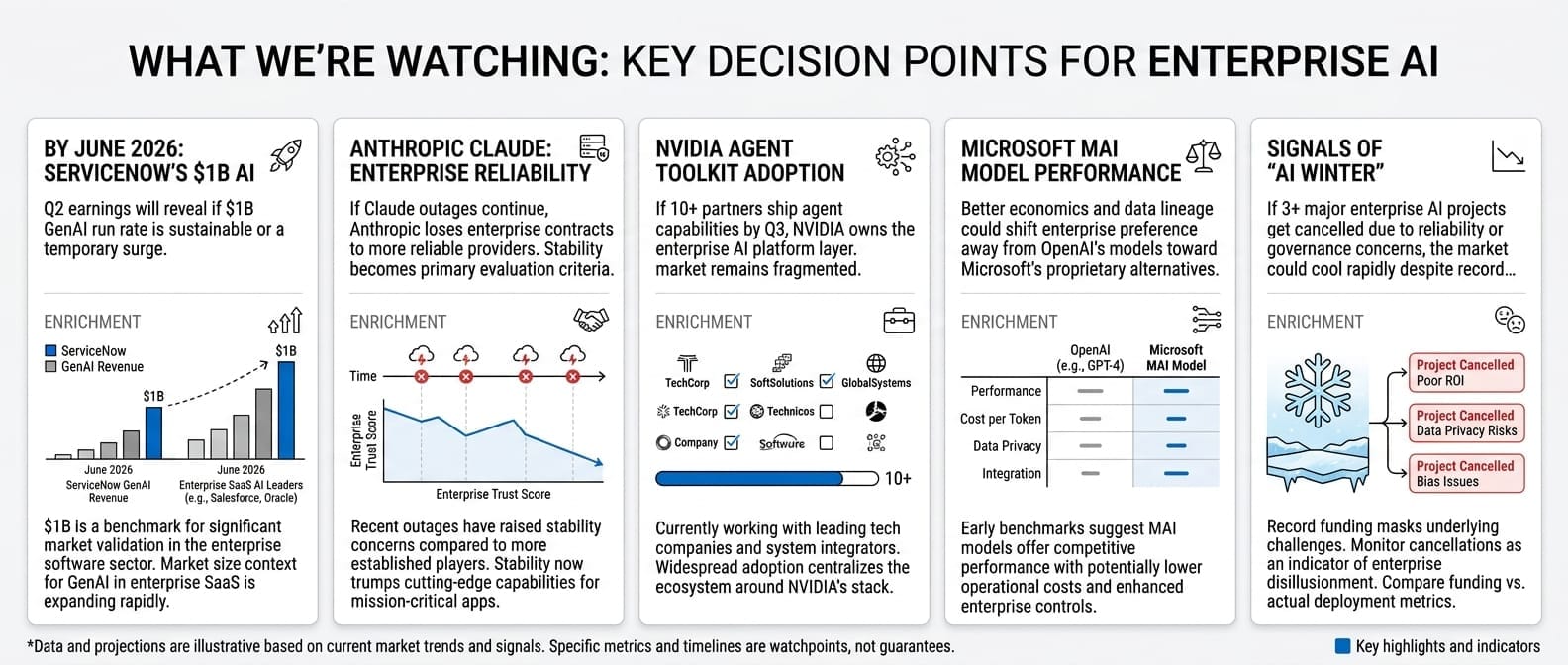

- By June 2026: ServiceNow's Q2 earnings will reveal whether the $1B run rate is sustainable or a temporary surge. This determines AI monetization viability across enterprise software.

- If Claude outages continue: Anthropic loses enterprise contracts to more reliable providers. Stability becomes the primary vendor evaluation criterion.

- NVIDIA's Agent Toolkit adoption: If 10+ partners ship agent capabilities by Q3, NVIDIA owns the enterprise AI platform layer. If not, the market remains fragmented.

- Microsoft's MAI model performance: Better economics and data lineage could shift enterprise preference away from OpenAI's models toward Microsoft's proprietary alternatives.

- Watch for "AI winter" signals: If 3+ major enterprise AI projects get cancelled due to reliability or governance concerns, the market could cool rapidly despite record funding.

The Bottom Line

April 8, 2026, marks the day AI's infrastructure problem became undeniable. While everyone obsesses over model capabilities, the real money flows to companies solving reliability, governance, and integration challenges. The boring infrastructure plays (monitoring, observability, cost optimization, governance frameworks) will generate more enterprise value than the next breakthrough model.

The question isn't whether your AI is smart enough. It's whether your AI infrastructure is enterprise-ready. Most companies will learn this lesson the expensive way when pilots refuse to scale. The smart money is already building for the infrastructure reckoning. Which side are you on?