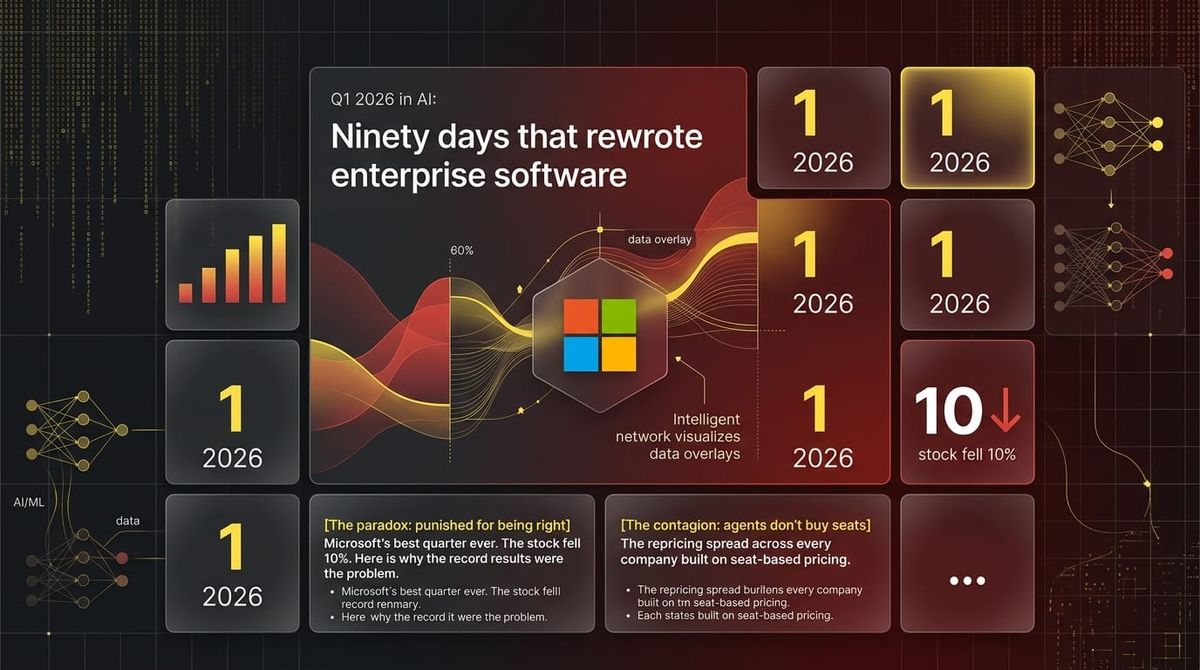

Q1 2026 in AI: Ninety days that rewrote enterprise software

In this article:

- The paradox: punished for being right

  Microsoft's best quarter ever. The stock fell 10%. Here is why the record results were the problem.

- The contagion: agents don't buy seats

  The repricing spread across every company built on seat-based pricing. The replacement model is already running.

- The sovereign signal: Singapore's bet

  GIC and Qatar led a $30B round in Anthropic. Infrastructure investors don't fund software startups. Unless they've decided it's infrastructure.

- The eastern challenge: two surprises from China

  DeepSeek V4 on Chinese chips. Alibaba down 67% net income, stock up 19% in a day. The gap is closing faster than Q1 2025 suggested.

- The new map: five bets on five different futures

  Five deals that show where serious money decided the durable moats are.

- The vertical wave: every sector found its Harvey

  Legal, healthcare, cybersecurity, industrial: every major enterprise vertical raised nine-figure vertical AI rounds in the same 90 days.

- What Q2 has to answer

  Six variables that will show whether Q1's infrastructure bet converts to revenue, or proves premature.

It is 5am in Singapore. January 29, 2026. Microsoft earnings dropped overnight.

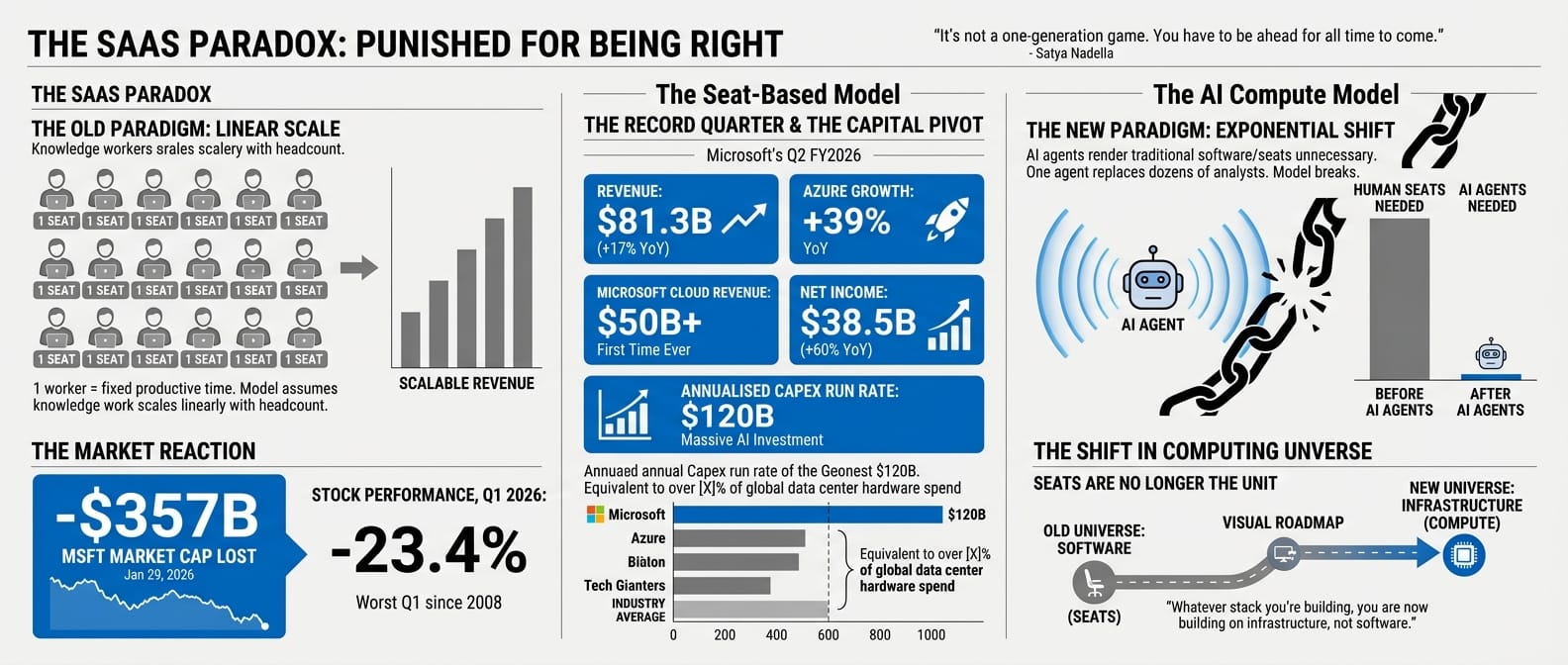

The numbers are the best in the company's history. Revenue: $81.3 billion. Azure up 39%. Net income up 60%. Cloud crossed $50 billion for the first time. EPS beat consensus by $0.17.

MSFT is down 10%. $357 billion in market cap gone in a single session.

That session was the first signal of a quarter that would not let up. A Middle East war sent oil from $65 to $115. DeepSeek released V4 on Lunar New Year (February 17, 2026), running on Chinese chips, closing the gap on GPT-5. Alibaba reported a 67% drop in net income and its stock gained 19% in a single day. The Magnificent Seven lost $2 trillion. Memory chip makers quietly gained. Sovereign wealth funds from Singapore and Qatar led a $30 billion round in Anthropic at $380 billion, treating a foundation model the way they treat a port or a power grid.

Three forces (structural, geopolitical, eastern) compressed into ninety days. Each one would have defined a significant quarter on its own. Together they pointed in the same direction: the software model that built enterprise for twenty years is being replaced, and the practitioners who understand the transition have a decision advantage over the ones still reading quarterly results as if they were normal.

This is that transition, told from the ground.

The paradox: punished for being right

To understand why Microsoft got punished for its best quarter ever, you have to understand what the SaaS business model was built on, and what disappears when that foundation is removed.

For twenty years, enterprise software companies sold seats. One seat equalled one knowledge worker. One knowledge worker equalled a fixed quantity of productive time. Scale the workforce, scale the revenue. It was a clean, logical relationship built on one assumption: that knowledge work scales linearly with headcount.

On the same call where Satya Nadella announced record results, he broke that assumption. Microsoft's AI business had grown into one of the company's largest franchises in under two years. "We are only at the beginning phases of AI diffusion," Nadella told analysts. The company's annualised capex run rate had reached $120 billion. Amy Hood, the CFO, tried to frame this carefully: "Much of the capital we're spending today is already contracted for most of its useful life." Nadella went further: "It's not a one-generation game. You have to be ahead for all time to come."

If agents are the new apps (and Microsoft was making that case with the strongest possible evidence), then seats are not the unit anymore. A company that replaces a hundred analysts with agents does not need a hundred analyst seats. It needs compute. The seat-based model does not bend to accommodate this. It breaks.

The market did not need Microsoft to report bad results to understand this. The record results were the problem. They proved Azure could deliver the compute that would render the software built on top of Azure unnecessary. Investors were not punishing Microsoft for failing. They were punishing it for being right.

| Microsoft Q2 FY2026, January 28 | |
|---|---|
| Revenue | $81.3B (+17% YoY) |
| Azure growth (YoY) | +39% |
| Microsoft Cloud revenue | $50B+ (first time ever) |
| Net income | $38.5B (+60% YoY) |
| Non-GAAP EPS | $4.14 (vs $3.97 consensus) |
| Annualised capex run rate | $120B |
| MSFT market cap lost, Jan 29 | -$357B (single session) |
| MSFT stock, Q1 2026 | -23.4% (worst Q1 since 2008) |

Whatever stack you're building, you are now building on infrastructure, not software. The question is which infrastructure.

The contagion: agents don't buy seats and the replacement is already here

When a business model breaks, it does not break in one place. It breaks everywhere the model was applied.

Over the following weeks, the repricing spread across every company that had built on seat-based pricing. ServiceNow fell 20% from its January highs. Atlassian was down 39% year-to-date by mid-March, including a 10% decline in the week ending March 12 as layoff announcements compounded the AI displacement narrative. CrowdStrike dropped 9.4% in a single session on February 23, not because of anything specific to CrowdStrike, but because it shared a sector with companies the market had decided were structurally threatened. Salesforce, Adobe, Workday, the category broadly: down. Productivity software stocks averaged 17.8% declines across the worst month. Nearly $1 trillion in enterprise software market cap was erased.

| SaaSpocalypse, peak week February 23 | |
|---|---|
| ServiceNow (NOW) drop from January highs | -20% |
| Atlassian (TEAM) year-to-date decline | -39% |
| Atlassian (TEAM) single-week drop, March 12 | -10% |
| CrowdStrike (CRWD) in single session | -9.4% |
| Productivity software average, worst month | -17.8% |
| Enterprise software market cap erased | ~$1 trillion |
| Named cause | OpenAI Frontier platform: autonomous enterprise agents |

You look at your own stack. How much of it is priced per seat? How much of that pricing survives a world where one agent replaces five analysts?

The repricing moved beyond software on February 18. A company called Altruist launched Hazel, an autonomous AI platform for tax and estate planning that needed no human adviser. In one session: Charles Schwab fell 7%, Raymond James fell 8%, LPL Financial fell 8%. Capital moved hard toward companies with AI-defensibility. The infrastructure layer. The picks-and-shovels suppliers.

| AI Scare Trade, February 18 | |
|---|---|
| Charles Schwab (SCHW) | -7% |
| Raymond James (RJF) | -8% |
| LPL Financial (LPLA) | -8% |
| Trigger | Altruist "Hazel" autonomous financial planning AI |

The deeper story was not the stock moves. It was the replacement pricing model already forming behind them.

Salesforce launched three separate pricing structures for Agentforce simultaneously: $2 per conversation, $0.10 per action via Flex Credits, or $125 per user per month. Three models at once, because nobody had agreed yet on how to price AI that could replace entire job functions. Zendesk moved to $1.50 per resolved ticket. Intercom Fin to $0.99 per resolution. Sierra AI went purest: pay only when the agent succeeds, nothing when it fails. Sierra reached $150 million in ARR within 21 months on that model alone.

Bret Taylor, Sierra's CEO, put it plainly in a January 2026 interview with SaaStr: "The whole market is going to go towards outcomes-based pricing. It's just so obviously the correct way."

| From seats to outcomes | Old model | New model |
|---|---|---|
| Salesforce Agentforce | Per user seat | $0.10 per action / $2 per conversation |
| Zendesk AI | Per agent seat | $1.50 per resolved ticket |
| Intercom Fin | Per seat | $0.99 per resolution |
| Sierra AI | N/A (AI-native) | Pay per successful resolution only |

The seat-based model was not just being repriced. It was being replaced by a contract that had never existed in enterprise software before: you pay for what the agent does, not for access to the tool. Every company in the SaaSpocalypse was now asking the same question: can our product deliver outcomes worth paying per-resolution for? The ones that could not answer yes were the ones the market was selling.
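To see why nobody has agreed on a unit yet, it helps to run one workload through all three Agentforce price points quoted above. The sketch below does that. The three prices come from the article; the workload (users, conversations, actions per conversation) is a hypothetical assumption, so treat the output as an illustration, not a benchmark.

```python
from dataclasses import dataclass

# Price points quoted in the article for Salesforce Agentforce.
PER_CONVERSATION_USD = 2.00
PER_ACTION_USD = 0.10
PER_USER_MONTH_USD = 125.00


@dataclass
class Workload:
    """Hypothetical monthly workload; every field is an illustrative assumption."""
    users: int
    conversations: int
    actions_per_conversation: float


def monthly_costs(w: Workload) -> dict[str, float]:
    """Return the monthly bill under each of the three pricing structures."""
    return {
        "per_conversation": w.conversations * PER_CONVERSATION_USD,
        "per_action": w.conversations * w.actions_per_conversation * PER_ACTION_USD,
        "per_user": w.users * PER_USER_MONTH_USD,
    }


def cheapest(w: Workload) -> str:
    """Name of the pricing structure with the lowest monthly bill."""
    costs = monthly_costs(w)
    return min(costs, key=costs.get)


if __name__ == "__main__":
    # Assumed workload: 50 licensed users, 10,000 conversations, 8 actions each.
    example = Workload(users=50, conversations=10_000, actions_per_conversation=8)
    for model, cost in monthly_costs(example).items():
        print(f"{model:>16}: ${cost:,.2f}")
    print("cheapest:", cheapest(example))
```

Change the assumed workload and the cheapest option flips, which is one plausible reading of why three structures shipped at once.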

The stock moves were the market's signal. The layoff announcements were the companies' own signal. They all said the same thing.

Block cut 40% of its workforce in February, citing AI automation as the primary driver. Meta announced 20% workforce reductions in March to fund its AI infrastructure build. Atlassian cut 1,600 people the same week, with the same stated rationale. Oracle began reducing 20,000 to 30,000 roles to reallocate capital to AI infrastructure. Four large technology companies, across a four-week window, announcing significant headcount reductions and citing the same cause.

The pattern has a structure. The seat-based model dies when you replace knowledge workers with agents. When you replace knowledge workers, you reduce headcount. When you reduce headcount, you free cash to fund the infrastructure that runs the agents. The companies cutting hardest were funding the transition themselves. From their own payroll.

The leap of faith: cost before return

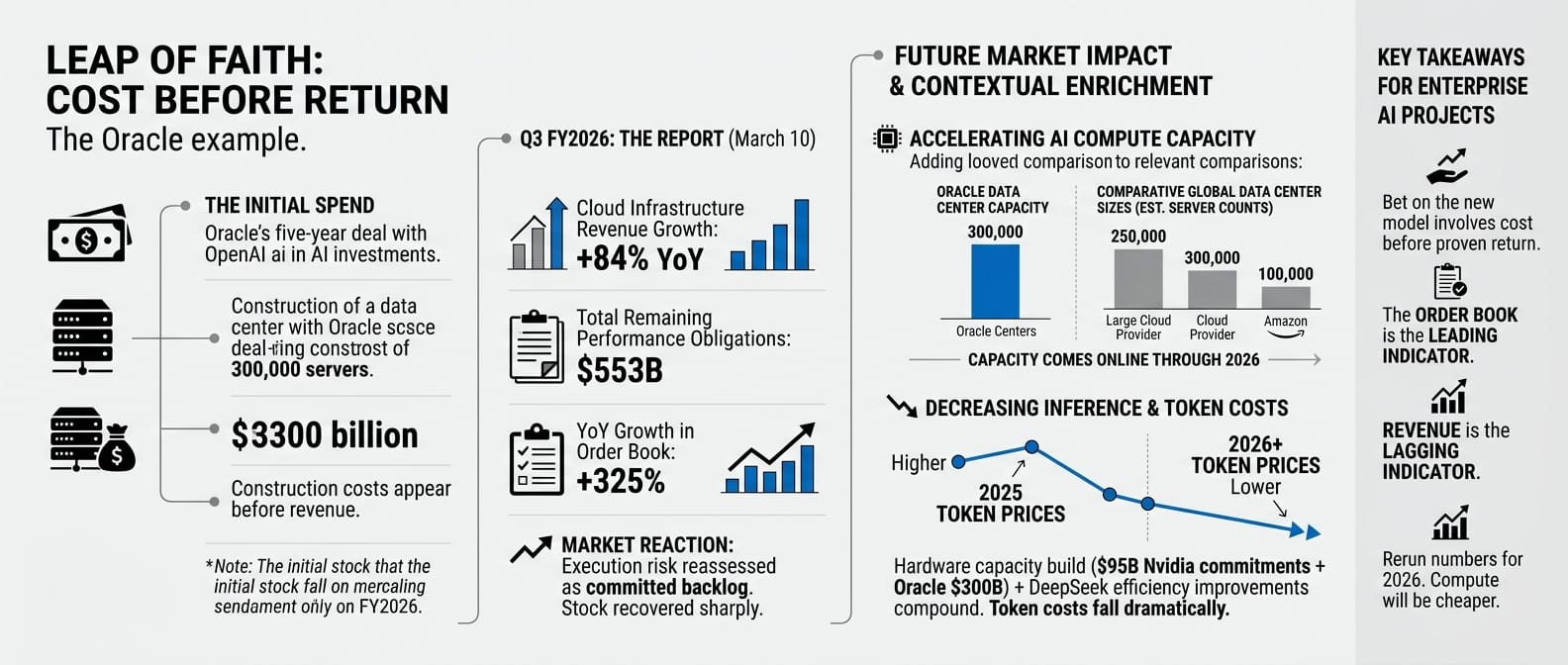

Here is the hardest part of the transition, and Oracle illustrated it plainly.

Oracle had committed to a $300 billion, five-year deal with OpenAI: a data centre build of 300,000 servers. The construction cost showed up in results before a dollar of OpenAI revenue had arrived. The stock fell more than 30% from its highs heading into the quarter.

You recognise this. It is the same dynamic playing out in your own AI project budgets: the spend is real and visible today. The return is contractual and delayed. The question every CFO is asking is whether you trust the order book.

On March 10, Oracle reported. Cloud infrastructure revenue was up 84% year-on-year. The forward order book had reached $553 billion, up 325% from the prior year. The stock recovered sharply. What the market had read as execution risk was now readable as a committed backlog. The cost was real. The return was contracted and coming.

| Oracle Q3 FY2026, March 10 | |
|---|---|
| Cloud infrastructure revenue growth | +84% YoY |
| Total remaining performance obligations | $553B |
| YoY growth in order book | +325% |
| Stock vs Q1 low | Sharp recovery |

This pattern will repeat in Q2, for Oracle and for you. Companies betting on the new model before it is proven show cost before return. The order book is the leading indicator. The revenue is the lagging one. The market punished the cost. The backlog showed the return.

The capacity being built has a direct consequence for inference costs. The $95 billion in Nvidia supply commitments and the $300 billion Oracle build are coming online through 2026. When they do, token costs fall. DeepSeek's efficiency improvements on the software side compound with the hardware build on the infrastructure side. Teams that modelled unit economics based on 2025 token prices should rerun the numbers. The compute that felt expensive in Q1 will be cheaper by Q4.
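Rerunning the numbers can be as simple as the sketch below: hold the price you charge per task fixed and let the token price fall quarter by quarter. Every input here is a placeholder assumption (tokens per task, the starting token price, the 25% quarterly decline), not a forecast. The only figure borrowed from the article is the $1.50 per-resolution price, used as the revenue per task.

```python
def cost_per_task(tokens_per_task: int, usd_per_million_tokens: float) -> float:
    """Inference cost of one task at a given token price."""
    return tokens_per_task / 1_000_000 * usd_per_million_tokens


def gross_margin(price_per_task: float, cost: float) -> float:
    """Gross margin as a fraction of the price charged per task."""
    return (price_per_task - cost) / price_per_task


def project_margins(tokens_per_task, start_token_price, quarterly_decline,
                    quarters, price_per_task):
    """Return (quarter, token_price, cost, margin) rows under a steady price decline."""
    rows = []
    token_price = start_token_price
    for quarter in range(quarters + 1):
        cost = cost_per_task(tokens_per_task, token_price)
        rows.append((quarter, token_price, cost, gross_margin(price_per_task, cost)))
        token_price *= 1 - quarterly_decline  # assumed constant decline per quarter
    return rows


if __name__ == "__main__":
    # All inputs are assumptions chosen to illustrate the shape of the curve.
    for quarter, token_price, cost, margin in project_margins(
        tokens_per_task=200_000,
        start_token_price=10.0,   # USD per million tokens, hypothetical
        quarterly_decline=0.25,   # hypothetical
        quarters=3,
        price_per_task=1.50,
    ):
        print(f"Q+{quarter}: ${token_price:.2f}/M tokens, "
              f"cost ${cost:.2f}, margin {margin:.0%}")
```

Under these assumed inputs the task starts out loss-making and turns profitable within a few quarters. The point is the sensitivity, not the specific numbers.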

The sovereign signal: Singapore's bet

While the public markets repriced downward, sovereign capital made the opposite move.

On February 12, Anthropic announced a $30 billion Series G. The lead investors were GIC, Singapore's sovereign wealth fund, and the Qatar Investment Authority. Both are infrastructure investors. They build ports and power grids. They manage endowments across decades. They do not invest in software startups at $380 billion valuations.

Except they did. And that told you everything about how Singapore and the Middle East had decided to categorise foundation models: not software, infrastructure. The same category as a power grid or a shipping terminal. For practitioners in Southeast Asia, this was not abstract. The fund that manages Singapore's national reserves had just made an infrastructure bet on the AI layer that your production workloads run on.

| Anthropic Series G, February 12 | |
|---|---|
| Round size | $30 billion |
| Valuation: before / after | $183B → $380B |
| Lead investors | GIC (Singapore SWF), QIA (Qatar SWF) |
| ARR at close | ~$14B, growing 10x annually for 3 years |
| Also in round | Microsoft, Nvidia |

The decision was not made on hype. A fund that manages Singapore's long-term national reserves does not chase momentum. It prices structural necessity. GIC had looked at Claude's $14 billion ARR growing 10x annually for three consecutive years and made the same call it makes about ports and transmission lines: this is permanent infrastructure, and the cost of not owning it is higher than the cost of the bet.

On February 25, Jensen Huang made the infrastructure case explicit on Nvidia's earnings call. "In this new world of AI, compute equals revenues. Without compute, there's no way to generate tokens. Without tokens, there's no way to grow revenues." His CFO added that data centre revenue had scaled 13x since ChatGPT's launch. Q4 revenue: $68.1 billion. Two days later, OpenAI announced $110 billion in new funding, the largest private funding round in history. Amazon put in $50 billion. SoftBank put in $30 billion. Nvidia put in $30 billion.

| Nvidia Q4 FY2026, February 25 | |
|---|---|
| Total quarterly revenue | $68.1B (+73% YoY) |
| Data centre revenue | $62B |
| Supply commitments (Q4 vs Q3) | $95.2B vs $50.3B |
| Next quarter guidance | $78B |

| OpenAI $110B raise, February 27 | |
|---|---|
| Valuation post-money | $730B |
| Amazon | $50B |
| SoftBank | $30B |
| Nvidia | $30B |
| Weekly active users at close | 900 million |
| Infrastructure committed | 3 GW inference + 2 GW Vera Rubin training |

The company that buys the compute, the company that makes the chips, and the company that funds the build: all in the same round, committed to the same outcome. The market began rotating back into software. Not all software, but companies already on the right side of the transition. Usage-based pricing. AI-native applications. Revenue tied to tokens consumed, not seats occupied.

The foundation model layer is consolidating fast. OpenAI at $730 billion, Anthropic at $380 billion. The capital required to train at frontier scale is now beyond the reach of all but a handful of organisations globally. Pick API providers with staying power, build abstraction layers that let you switch, and stop treating the model layer as where your differentiation lives. GIC already made that call.
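An abstraction layer that lets you switch does not need to be elaborate. The sketch below shows one minimal shape for it: application code talks to a `ChatModel` interface, and a router falls back to the next provider when one fails. All class names here are hypothetical, and the providers are stand-ins rather than real vendor SDK clients; in practice each would wrap whichever API you actually use.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only surface application code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Stand-in for a real vendor client (hypothetical, for illustration)."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class FailingProvider:
    """Stand-in that simulates an outage or a deprecated model."""

    def complete(self, prompt: str) -> str:
        raise RuntimeError("provider unavailable")


class ModelRouter:
    """Tries providers in priority order and returns the first success."""

    def __init__(self, providers: list[ChatModel]) -> None:
        if not providers:
            raise ValueError("at least one provider is required")
        self.providers = providers

    def complete(self, prompt: str) -> str:
        errors = []
        for provider in self.providers:
            try:
                return provider.complete(prompt)
            except Exception as exc:  # a real router would catch narrower errors
                errors.append(exc)
        raise RuntimeError(f"all providers failed: {errors}")


if __name__ == "__main__":
    router = ModelRouter([FailingProvider(), EchoProvider("backup")])
    print(router.complete("summarise this contract"))
```

Because the router satisfies the same `ChatModel` interface as the providers it wraps, swapping or reordering vendors is a configuration change rather than a rewrite.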

GIC and QIA were not alone in making it. Every major economy answered the same question in Q1: is AI infrastructure, or is it software? They all said infrastructure. The mechanisms were different.

| How each economy classified AI, Q1 2026 | Mechanism | Q1 signal |
|---|---|---|
| Singapore / Qatar | Sovereign wealth fund investing in a foundation model provider | GIC + QIA lead Anthropic $30B Series G |
| Europe | State-backed banks extending utility debt to a domestic model provider | BNP Paribas, Crédit Agricole, HSBC lend $830M to Mistral as infrastructure debt |
| China | State-directed domestic production: compute access, national deployment, state procurement | DeepSeek on Huawei chips; Qwen at 58M daily sessions across regional enterprise |
| United States | Private hyperscaler capex at infrastructure scale | $110B OpenAI raise; $95B Nvidia supply commitments; $300B Oracle data centre build |

The instruments are different. The conclusion is the same.

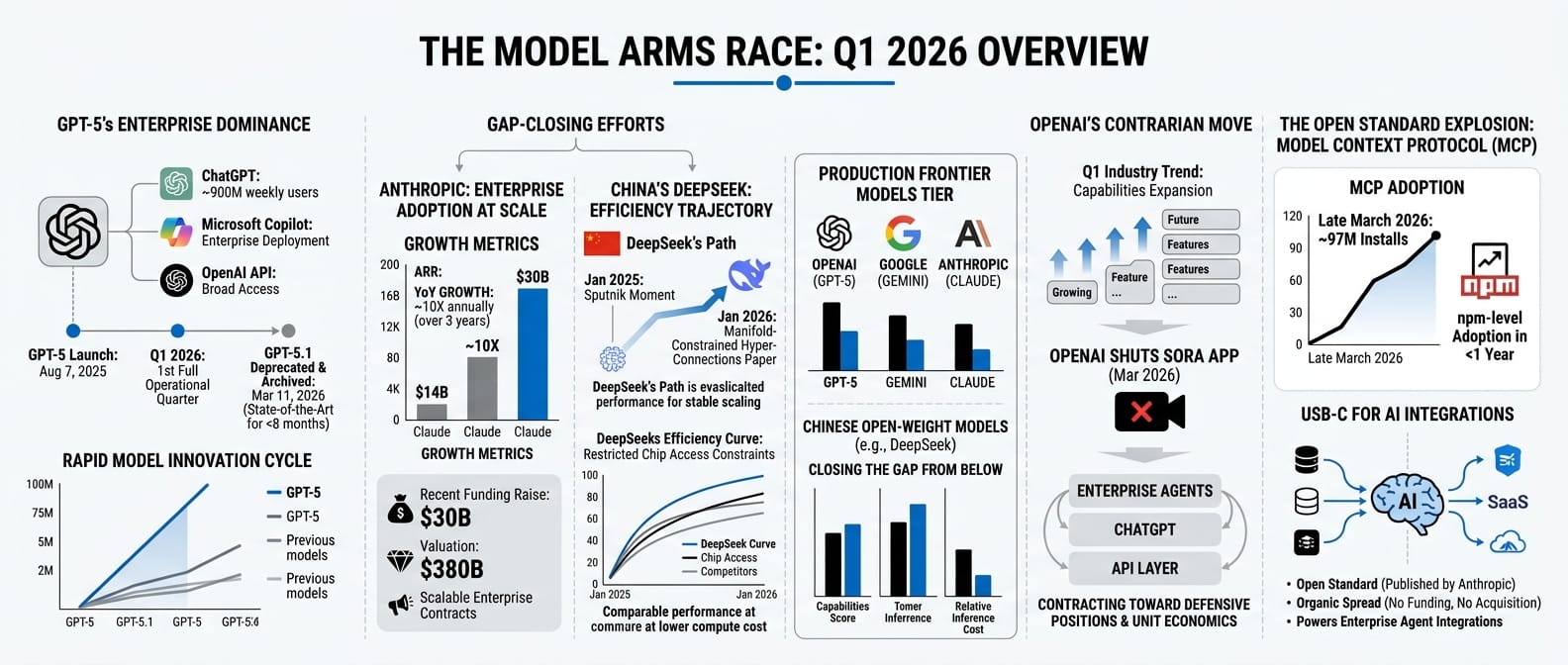

The model arms race: what's running in Q1

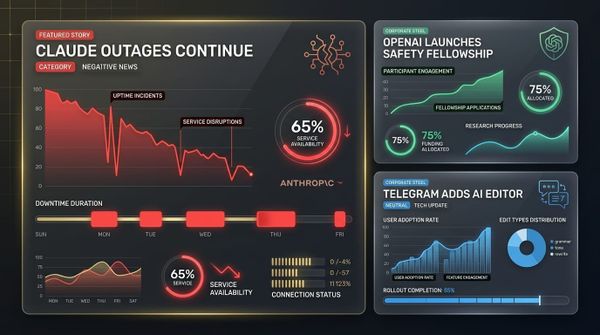

GPT-5 launched on August 7, 2025. Q1 2026 is the first full quarter where the most capable OpenAI model is operational across enterprises globally. It is not a research preview. It powers ChatGPT at 900 million weekly users, runs inside Microsoft Copilot, and is available via API to anyone building on OpenAI's stack. By March 11, GPT-5.1 was deprecated and archived. It had been state-of-the-art fewer than eight months earlier.

The race during Q1 was not about who released the most impressive new model. It was about who was closing the gap on frontier capability while keeping inference costs low enough to run at enterprise scale.

From the US: Anthropic's $30 billion raise at $380 billion was not just a financing event. It was a signal that Claude had reached the point where enterprise adoption at scale is real and measurable. The $14 billion ARR growing 10x annually for three years is not a projection. It is the compound of production contracts, not pilots.

From China: DeepSeek published a research paper in January 2026 introducing Manifold-Constrained Hyper-Connections, a training method enabling stable scaling of large language models with richer internal communication while controlling compute cost. The paper arrived twelve months after DeepSeek's January 2025 Sputnik moment and demonstrated the same trajectory: the efficiency curve was still moving, and it was moving under the constraint of restricted chip access.

By Q1 2026, the frontier model tier has three credible players running in production at scale (OpenAI, Anthropic, and Google), with Chinese open-weight models closing the gap from below. The consolidation the Anthropic raise foreshadowed is happening faster than most practitioners expected at the start of the year.

One product decision ran against the grain of the expansion announcements. In late March, OpenAI shut down the Sora video generation app. Not paused, not rebranded. Removed. Two months after Sora launched publicly, it was discontinued. In a quarter where every AI company was announcing new capabilities and expanding into new categories, OpenAI chose to narrow. What remained: enterprise agents, ChatGPT, the API layer. The video generation narrative that dominated late 2025 headlines was dropped without explanation. The implication is a competitive surface contracting toward the categories where OpenAI has a defensible position, and away from the ones where the unit economics were unresolved.

One infrastructure shift happened without a funding round or a product announcement. By late March, the Model Context Protocol had crossed 97 million installs. MCP is an open standard that lets AI models connect to external tools and data sources: the equivalent of USB-C for AI integrations. Anthropic published the spec. Nobody funded it, nobody acquired it. It spread. 97 million installs means npm-level adoption in under a year. The agent integrations being built in enterprise now (CRM connectors, internal knowledge base hooks, workflow triggers) are increasingly built to a single open standard rather than to vendor-specific APIs. When a protocol reaches that scale, the evaluation question changes. You are no longer choosing a vendor. You are building on infrastructure. Q1 2026 was the quarter that shift became visible.

The vibe coding floor: what $2 billion in developer ARR means

If your organisation has not standardised its AI developer tooling, your competitors who have are shipping faster. This is no longer a productivity experiment. It is a competitive baseline. Q1 2026 put a number on it.

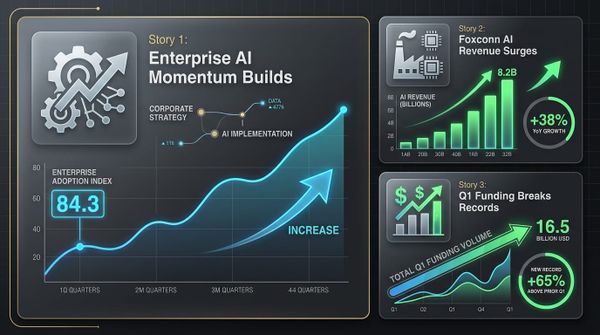

In February, Cursor crossed $2 billion in annualised recurring revenue. It had been at $1 billion in November 2025. It doubled in three months. That is the fastest ARR growth in SaaS history. Approximately 60% of that revenue now comes from large corporate buyers: enterprises with procurement processes, security reviews, and deployment policies, not developers on personal subscriptions.
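To put the doubling in monthly terms, the short calculation below backs out the constant compounding rate implied by going from $1 billion to $2 billion in three months. It assumes smooth month-on-month compounding, which is a simplification; the actual path between the two reported figures is not in the source.

```python
def implied_periodic_growth(start: float, end: float, periods: int) -> float:
    """Constant per-period growth rate that takes `start` to `end` in `periods` steps."""
    if start <= 0 or end <= 0 or periods <= 0:
        raise ValueError("start, end and periods must be positive")
    return (end / start) ** (1 / periods) - 1


if __name__ == "__main__":
    # Figures from the article: $1B ARR in November 2025, $2B in February 2026.
    monthly = implied_periodic_growth(1.0, 2.0, periods=3)
    print(f"implied monthly growth: {monthly:.1%}")
```

That works out to roughly 26% a month under the smooth-compounding assumption.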

What Cursor sells is the ability to write software faster by keeping an AI model in context alongside your editor. The product launched two years ago as a curiosity for AI pair programming. It is now enterprise infrastructure. You do not pay per developer seat in the traditional sense. You pay for the compute behind the AI model embedded in every developer's workflow. Every developer using it is generating code at a fraction of the time traditional development requires. The enterprise software built in 2026 is built faster, by smaller teams, with AI in the loop at every step.

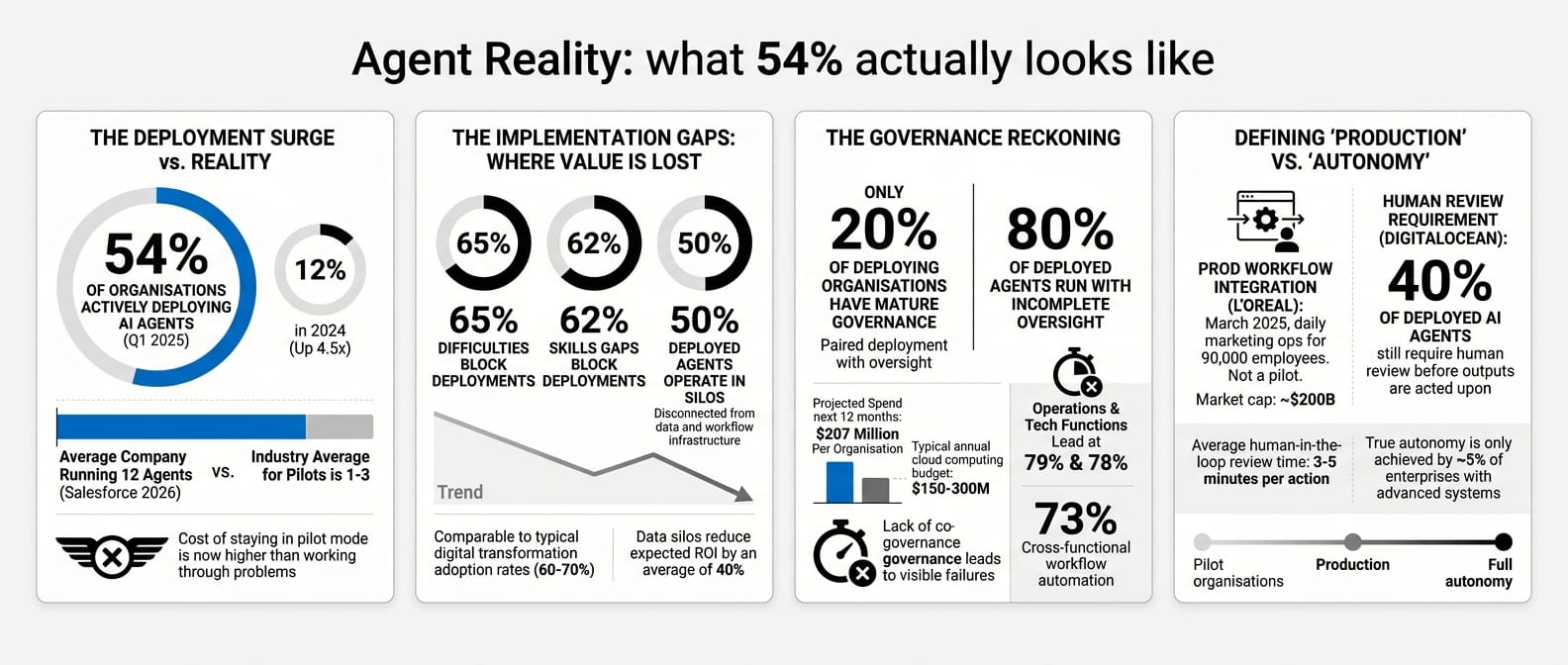

The agent reality: what 54% actually looks like

The cost of staying in pilot mode has become higher than the cost of working through the problems. That is the real story behind Q1's agent deployment numbers. Not the headline figures, but what drove organisations to finally move.

On March 31, KPMG published its Q1 AI Pulse: 54% of organisations are actively deploying AI agents, up from 12% in 2024. Read that carefully. "Actively deploying" is not "running in production with measurable business impact." KPMG's own data shows 50% of deployed agents operate in silos, disconnected from the data and workflow infrastructure they need to compound value. Skills gaps block 62% of deployments. Scaling difficulties block 65%.

The Salesforce 2026 report adds its own numbers: 83% of organisations report most or all teams have adopted agents. The average company is running 12. Projected spend over the next 12 months: $207 million per organisation. Operations and technology functions lead at 79% and 78%. Cross-functional workflow automation is running in 73% of deploying organisations.

The harder truth: only 20% of deploying organisations have mature governance over their agents. The other 80% are running agents with incomplete oversight. This will produce visible failures in Q2. The companies that deployed fast without governance scaffolding will have a reckoning. The ones that paired deployment with oversight will compound.

Two data points bracket the deployment reality. On the adoption side: L'Oreal integrated generative AI into its daily marketing operations in March: not a pilot, not a proof of concept, but daily production workflows for a company with 90,000 employees. On the readiness side: a DigitalOcean survey found that 40% of deployed AI agents still require human review before their outputs are acted upon. These two figures are not in tension. They describe the same organisations at different stages of the same transition. Production deployment is not the same as full autonomy, and most enterprises running agents in Q1 had not yet closed that gap.

The new map: five bets on five different futures

While the SaaSpocalypse dominated headlines, a smaller set of deals was running in parallel. These were not the headline rounds. They were more specific, and more useful for understanding which part of the stack serious money had decided was defensible.

On February 24, two engineers named Reiner Pope and Mike Gunter announced a $500 million Series B for a company called MatX. Pope and Gunter designed the Tensor Processing Units inside Google: the chips that power Search, Translate, and YouTube at scale. They were now building hardware to compete with Nvidia for inference. The lead investor was Jane Street, one of the world's most technically sophisticated quantitative trading firms.

The bet was not that Nvidia was going to fail. It was that the inference market at scale was large enough, and Nvidia's margins wide enough, that a specialised alternative at lower cost-per-token was inevitable.

| MatX Series B, February 24 | |
|---|---|
| Amount | $500M |
| Founders | Reiner Pope, Mike Gunter (designed Google TPUs) |
| Lead investors | Jane Street, Situational Awareness |
| Thesis | Custom inference chips below Nvidia's price per token |

Three weeks later, Nvidia itself invested $2 billion into Nebius, an AI cloud infrastructure provider building data centres for enterprises that did not want to go through the major hyperscalers. Nvidia's investment was roughly 7% of Nebius's market cap. This was Nvidia expanding vertically through capital, not product: ensuring there was an alternative distribution channel for its chips that it could influence directly.

On March 25, Reflection AI announced $2.5 billion at a $25 billion valuation. The founders came from Google DeepMind. The company was building open-weight large language models. The primary investor was Nvidia. Open-weight models run on Nvidia chips. Closed models run on Nvidia chips. The investment was agnostic about the winner of the open-versus-closed debate, provided the winner ran on Nvidia hardware.

| Reflection AI $2.5B, March 25 | |
|---|---|
| Valuation | $25B (from $8B six months prior) |
| Primary investor | Nvidia |
| Thesis | Open-weight frontier models; "DeepSeek of the West" |
| Strategic significance | Nvidia hedging open vs. closed model outcome |

On March 29, Harvey announced $200 million at an $11 billion valuation. Harvey builds AI for lawyers: contract review, regulatory research, due diligence, litigation analysis. The lead investors were GIC and Sequoia. GIC (which had led Anthropic's $30 billion round six weeks earlier) was now backing a company that runs on top of Anthropic's models.

Harvey has built something that a foundation model provider cannot replicate: the legal training data, the workflow integrations, the regulatory knowledge at the task level, the client relationships. A foundation model company that tried to build what Harvey has built would be entering a regulated professional services market. The vertical moat was the product.

| Harvey $200M, March 29 | |
|---|---|
| Valuation | $11B (from $8B in December) |
| Lead investors | GIC (Singapore SWF), Sequoia |
| What it builds | AI for legal work: contracts, due diligence, litigation |
| Why the moat holds | Foundation models can't replicate regulated-domain training data and client trust |

GIC backed the model layer with Anthropic and the application layer with Harvey. Both bets can be right simultaneously. The closer your application gets to a specific regulated domain, an existing professional relationship, or a proprietary data asset, the harder it becomes for a foundation model provider to replace you by releasing a better base model.

One day later, Sycamore raised $65 million in a seed round. The CEO was Sri Viswanath, formerly CTO of Atlassian. The angels included the CEO of Databricks and a former Chief Scientist of OpenAI. Sycamore was building an "agent OS for enterprise": the orchestration and governance layer that sits between foundation models and production business workflows.

| Sycamore $65M seed, March 30 | |
|---|---|
| CEO | Sri Viswanath (ex-CTO, Atlassian) |
| Investors | Coatue, Lightspeed |
| Notable angels | Databricks CEO; former OpenAI Chief Scientist |
| Thesis | Agent orchestration OS for enterprise production workflows |

If you are building agents right now, you are also building your own orchestration layer from scratch: state management, retry logic, tool routing, human-in-the-loop approval flows. That work is expensive, fragile, and not your core product. Sycamore's thesis is that a standard layer will emerge, the way application servers emerged in the 1990s and cloud orchestration in the 2010s. The KPMG data on agent deployment says the demand is real. Q1 2026 was the moment investors decided it was worth backing someone to build it before the winner became obvious.
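For a sense of what "from scratch" involves, here is a deliberately small sketch of three of those pieces: tool routing, retry logic, and a human-in-the-loop approval gate, with an audit log standing in for state management. It is an illustration under simplifying assumptions, not a description of Sycamore's product or any vendor's design. The names `Orchestrator`, `with_retry`, and the approval callback are all hypothetical.

```python
import time
from typing import Any, Callable


def with_retry(fn: Callable[[], Any], attempts: int = 3, base_delay: float = 0.0) -> Any:
    """Call fn, retrying on any exception with exponential backoff between tries."""
    last_error = None
    for attempt in range(attempts):
        try:
            return fn()
        except Exception as exc:
            last_error = exc
            if attempt < attempts - 1:
                time.sleep(base_delay * 2 ** attempt)
    raise RuntimeError(f"failed after {attempts} attempts") from last_error


class Orchestrator:
    """Routes steps to tools, gates sensitive ones on approval, records outcomes."""

    def __init__(self, tools: dict[str, Callable[..., Any]],
                 needs_approval: set[str],
                 approve: Callable[[str, dict], bool]) -> None:
        self.tools = tools
        self.needs_approval = needs_approval
        self.approve = approve  # in production this would ask a human reviewer
        self.audit_log: list[tuple[str, dict, str]] = []

    def run_step(self, tool: str, args: dict) -> Any:
        if tool not in self.tools:
            raise KeyError(f"unknown tool: {tool}")
        if tool in self.needs_approval and not self.approve(tool, args):
            self.audit_log.append((tool, args, "rejected"))
            return None
        result = with_retry(lambda: self.tools[tool](**args))
        self.audit_log.append((tool, args, "done"))
        return result


if __name__ == "__main__":
    orchestrator = Orchestrator(
        tools={"lookup": lambda key: f"record:{key}",
               "refund": lambda amount: f"refunded {amount}"},
        needs_approval={"refund"},
        approve=lambda tool, args: args.get("amount", 0) <= 100,  # toy policy
    )
    print(orchestrator.run_step("lookup", {"key": "A-1"}))
    print(orchestrator.run_step("refund", {"amount": 500}))
    print(orchestrator.audit_log)
```

Even this toy version leaves out persistence, timeouts, idempotency, and concurrency, which is roughly the gap between a weekend prototype and the layer the paragraph above describes.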

Taken together, these five deals described the same thesis from five different angles: the chip layer has a challenger (but Nvidia is hedging it itself), the vertical application layer is where durable moats get built, and the orchestration layer between models and enterprise workflows is the next unsolved category.

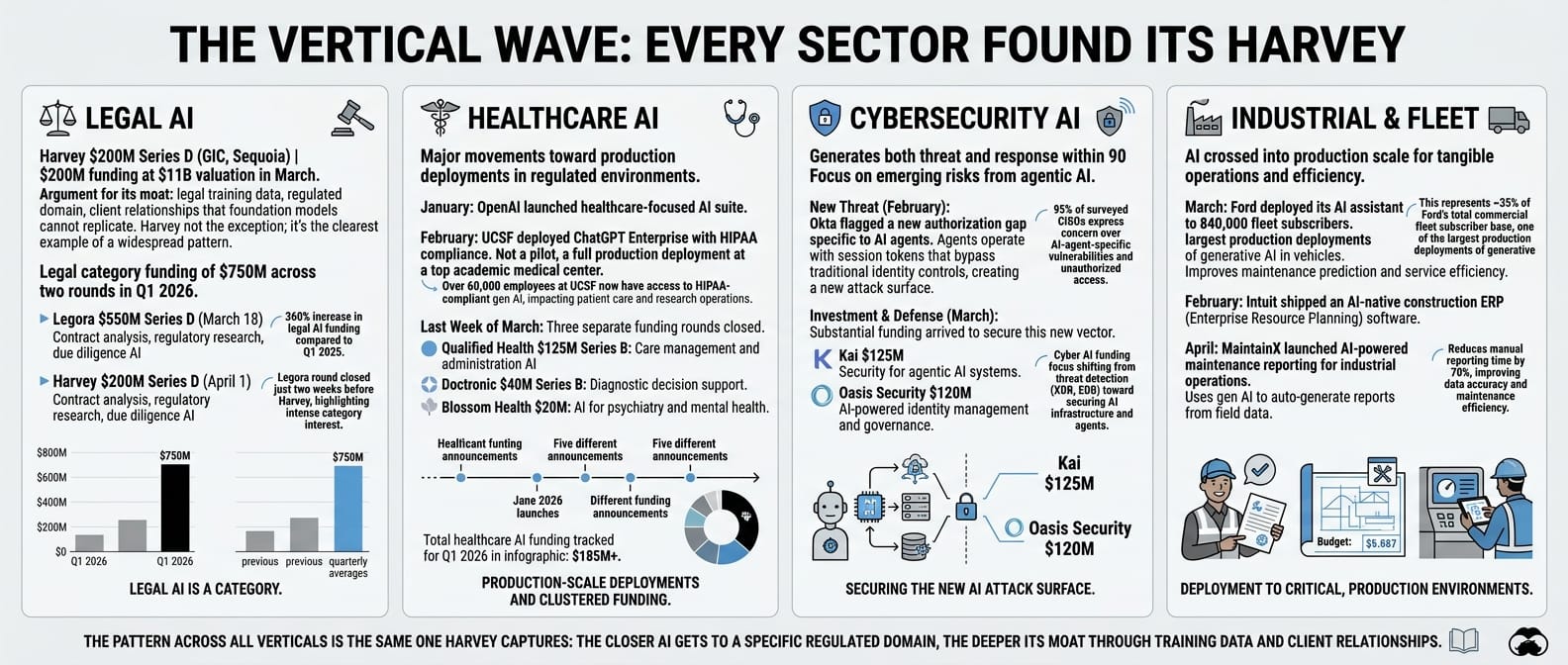

The vertical wave: every sector found its Harvey

Harvey raised $200 million at an $11 billion valuation in March. The argument for its moat is in the section above: legal training data, regulated domain, client relationships that a foundation model cannot replicate. What Q1 showed is that Harvey was not the exception. It was the clearest example of a pattern running across every major enterprise vertical simultaneously.

Legal alone saw $750 million in funding across two rounds in one quarter. Legora raised $550 million in a Series D on March 18, eleven days before Harvey announced its round. Both companies build AI for legal work: contract analysis, regulatory research, due diligence. Both raised nine-figure rounds in the same 90 days. Legal AI is not a single bet. It is a category.

Healthcare clustered the same way. OpenAI launched a healthcare-focused AI suite in January. UCSF deployed ChatGPT Enterprise with HIPAA compliance in February: not a pilot, a production deployment at one of the top academic medical centres in the world. Three separate funding rounds closed in the final week of March: Doctronic ($40M Series B), Qualified Health ($125M Series B), and Blossom Health ($20M for AI psychiatry). Five healthcare AI events in one quarter.

Cybersecurity generated both the threat and the response in the same 90 days. Okta flagged a new authorisation gap specific to AI agents in February. Agents were operating with session tokens that bypass traditional identity controls. By March, the funding had arrived: Kai raised $125 million for agentic AI cybersecurity, Oasis Security raised $120 million for AI identity management. The attack surface created by Q1 agent deployment and the investment to close it arrived in the same quarter.

Industrial and fleet AI crossed into production scale. Ford deployed its AI assistant to 840,000 fleet subscribers in March. Intuit shipped an AI-native construction ERP in February. MaintainX launched AI-powered maintenance reporting for industrial operations in April.

| Vertical AI wave, Q1 2026 | Event | Amount |
|---|---|---|
| Legal | Harvey $200M Series D (GIC, Sequoia) | $200M at $11B |
| Legal | Legora Series D | $550M |
| Healthcare | OpenAI healthcare AI suite launch | n/a |
| Healthcare | UCSF ChatGPT Enterprise (HIPAA, production) | n/a |
| Healthcare | Doctronic Series B | $40M |
| Healthcare | Qualified Health Series B | $125M |
| Healthcare | Blossom Health (AI psychiatry) | $20M |
| Cybersecurity | Okta flags AI agent auth gap | n/a |
| Cybersecurity | Kai (agentic AI security) | $125M |
| Cybersecurity | Oasis Security (AI identity) | $120M |
| Industrial / fleet | Ford AI assistant: 840K fleet subscribers | n/a |
| Industrial / fleet | Intuit AI-native construction ERP | n/a |
| Industrial / fleet | MaintainX AI maintenance reporting | n/a |

The pattern across all of them is the same one the GIC/Harvey thesis captures: the closer AI gets to a specific regulated domain, a body of proprietary operational data, or an existing professional relationship, the harder it becomes for a foundation model provider to compete by releasing a better base model. Q1 did not produce one Harvey. It produced a Harvey in every major vertical simultaneously.

The storm: when macro arrives on top of structural

The important distinction up front: the two forces that drove tech stocks down in Q1 moved in the same direction but predict opposite outcomes. The macro shock from oil and tariffs is cyclical. If the conflict resolves, energy prices fall, inflation expectations come down, growth stocks recover the geopolitical discount. The structural repricing of the seat-based model does not reverse with oil prices. When the macro noise clears, the structural signal will still be there.

The macro shock itself: a Middle East conflict that started at the end of February sent oil from $65 to $115 by March 30. Brent crude rose 63% in March alone. That is the largest monthly crude gain in four decades. The Strait of Hormuz, through which roughly 20% of global oil supply passes, was under active threat for most of the month. AI infrastructure has no direct exposure to Middle East oil. Data centres run on electricity, not crude. The hyperscaler capex commitments were already signed. But the Iran shock hit energy prices, rotation dynamics, and sentiment, compressing growth stocks that were already under structural pressure.

| Oil and commodities, Q1 2026 | Price or change |
|---|---|
| January (start of quarter) | ~$65/barrel |
| March 8 | $100/barrel (first time since 2022) |
| Mid-March | $110/barrel |
| March 30 | $115/barrel |
| Brent crude gain in March alone | +63% (largest in 40 years) |
| Bloomberg Commodity Index, Q1 | +24.4% |
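The quarter-long move and the March-only move are two different percentages, and it is easy to conflate them. The short sketch below works through the arithmetic using only the figures in the table. It assumes the ~$65 and $115 levels and the +63% March gain all refer to the same benchmark, and it treats March 30 as the end of the month, which the article does not state explicitly. The helper names are illustrative.

```python
def pct_change(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end - start) / start * 100.0


def implied_start(end: float, pct_gain: float) -> float:
    """Starting level implied by an ending level and a percentage gain."""
    return end / (1.0 + pct_gain / 100.0)


quarter_start = 65.0  # approximate price at the start of Q1 (from the table)
march_30 = 115.0      # price on March 30 (from the table)
march_gain = 63.0     # gain in March alone, in percent (from the table)

# Move over the whole quarter, start of January to March 30.
quarter_move = pct_change(quarter_start, march_30)

# Price at the start of March that a +63% gain to $115 would imply.
march_start = implied_start(march_30, march_gain)

print(f"Quarter move: {quarter_move:+.1f}%")
print(f"Implied price at start of March: ${march_start:.2f}")
```

Under those assumptions the quarter-long move is roughly +77%, and the implied price at the start of March is about $70.55, which suggests most of the quarter's gain came in the final month.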

On top of the oil shock, a second macro disruption: the US tariff escalation introduced a flat 10% import tariff after the Supreme Court struck down the earlier reciprocal tariff structure. Goldman Sachs revised its 2026 PCE inflation forecast to 3.1%, JPMorgan to 3.4%, the OECD to 4% for G20 inflation. Growth stocks took a cyclical compression on top of the structural repricing already underway.

| Market rotation, Q1 2026 | Return or flow |
|---|---|
| Bloomberg Commodity Index | +24.4% |
| US value stocks | +1.3% |
| MSCI Europe ex-UK | -2.3% |
| S&P 500 | -4.3% |
| US growth stocks | -8.4% |
| US tech (February 1-27 alone) | -23% |
| XLE (energy ETF) YTD inflows | $12.3B (vs $8.3B outflows in 2025) |
| Short-duration bond ETF inflows | $33.3B new money |

Japan and the UK both finished positive. Capital was not just rotating into energy: it was rotating internationally, away from US growth and toward value markets, dividend-paying sectors, and non-dollar assets.

Defense stocks initially surged. Lockheed Martin and RTX both moved up sharply, then gave back most of the gains. Reuters reported on April 2: "US defense stocks have declined even as the Iran war drags on, indicating that the typical 'buy-on-conflict' trade had largely peaked." The war had not produced the sustained defense procurement cycle the initial moves had priced in.

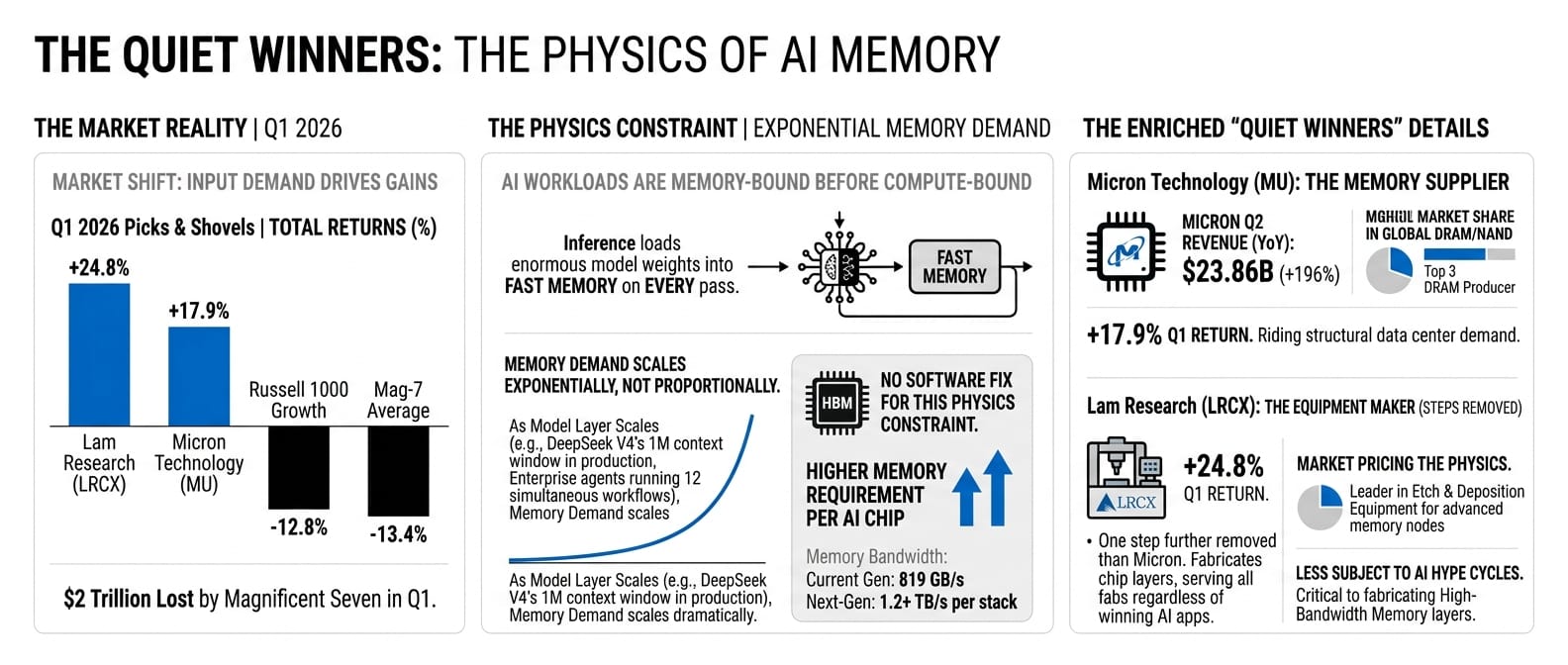

The quiet winners: the physics don't lie

The energy rotation and the AI infrastructure build both had the same underlying cause: input demand. Energy companies rode the macro spike plus structural data centre demand. But one category had no exposure to oil prices at all and still outperformed everything: memory chips.

The Magnificent Seven lost $2 trillion in Q1. Memory chip makers gained.

| Picks and shovels, Q1 2026 | Return or result |
|---|---|
| Micron Technology (MU) | +17.9% |
| Lam Research (LRCX) | +24.8% |
| Micron Q2 revenue (YoY) | $23.86B (+196%) |
| Mag-7 average | -13.4% |
| Russell 1000 Growth | -12.8% |

Large-model inference is typically memory-bound before it is compute-bound. Generating each token means streaming enormous model weights through fast memory on every pass. There is no software fix for this. High-bandwidth memory is the physics constraint. As the model layer scales (DeepSeek V4's 1M context window in production, enterprise agents running 12 simultaneous workflows per organisation), memory demand multiplies rather than adds: longer contexts times more concurrent agents, not a one-for-one rise with user count.
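A back-of-envelope sketch makes the memory constraint concrete. It assumes a dense transformer with standard attention, where decoding one token reads every weight once and the key-value cache stores a fixed number of bytes per token of context. Every figure in it (70B parameters, 8-bit weights, 80 layers, 8 KV heads, 3,000 GB/s of bandwidth) is a hypothetical placeholder, not a specification of DeepSeek V4 or any real accelerator; only the 1M-token context and the 12 concurrent workflows come from the text.

```python
# Back-of-envelope sketch; all model and hardware numbers are hypothetical.

def weight_bytes(params_billions: float, bytes_per_param: float) -> float:
    """Bytes needed to hold the model weights."""
    return params_billions * 1e9 * bytes_per_param


def kv_cache_bytes_per_token(layers: int, kv_heads: int, head_dim: int,
                             bytes_per_value: int = 2) -> int:
    """KV cache bytes stored per token of context (factor 2 = keys + values)."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value


def max_decode_tokens_per_sec(bandwidth_gb_s: float,
                              bytes_read_per_token: float) -> float:
    """Ceiling on single-stream decode speed if bandwidth is the only limit."""
    return bandwidth_gb_s * 1e9 / bytes_read_per_token


weights = weight_bytes(params_billions=70, bytes_per_param=1)
per_token = kv_cache_bytes_per_token(layers=80, kv_heads=8, head_dim=128)

context_tokens = 1_000_000   # 1M-token context, as cited in the text
concurrent_workflows = 12    # simultaneous workflows, as cited in the text

kv_one_session = per_token * context_tokens
kv_all_sessions = kv_one_session * concurrent_workflows
ceiling = max_decode_tokens_per_sec(bandwidth_gb_s=3000,
                                    bytes_read_per_token=weights)

print(f"Weights: {weights / 1e9:.0f} GB")
print(f"KV cache, one 1M-token session: {kv_one_session / 1e9:.2f} GB")
print(f"KV cache, 12 sessions: {kv_all_sessions / 1e12:.2f} TB")
print(f"Decode ceiling, one stream: {ceiling:.1f} tokens/s")
```

With these placeholder numbers, one full-length session needs more cache memory than the weights themselves, and the total grows with the product of context length and concurrent sessions. Architectures that compress the cache or activate only part of the model would change the constants, so treat this as an illustration of the scaling, not a forecast.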

Lam Research makes the equipment used to manufacture the layers inside memory chips. It is one step further removed from the AI narrative than Micron. It is also, for precisely that reason, less subject to AI hype cycles. Lam's customers are the chip fabs, and the chip fabs are building regardless of which application layer wins. The +24.8% return in Q1 was the market pricing the physics.

The eastern challenge: two surprises from China

The first Chinese surprise of Q1 arrived in January, in a research paper. DeepSeek published the details of a training technique called Manifold-Constrained Hyper-Connections: a method enabling stable scaling of large language models with richer internal communication at lower compute cost. Analysts called it a "striking breakthrough." It arrived twelve months after DeepSeek's January 2025 moment and demonstrated the same trajectory: the efficiency curve was still moving, and it was moving under the constraint of restricted chip access.

The second surprise landed on February 17: Lunar New Year. DeepSeek published V4.

V4 was not an incremental update. The company described it as a total architectural overhaul, internally codenamed MODEL1. It integrated Engram conditional memory technology, enabling stable retrieval from contexts exceeding one million tokens. Internal benchmarks (DeepSeek's own, not independently verified) showed V4 outperforming Claude and GPT-5 on long-context code generation. The model was optimised to run on Chinese AI chips from Huawei and Cambricon, not just Nvidia hardware. The timing was deliberate: Lunar New Year is when Chinese internet usage peaks. Releasing V4 into that window sent a message about production readiness, not just benchmark performance.

| DeepSeek V4 (MODEL1), February 17 | |
|---|---|
| Architecture | Total overhaul; Engram conditional memory tech |
| Context window | 1M+ tokens (stable retrieval) |
| Reported performance | Outperforms Claude and GPT-5 on long-context code generation (self-reported) |
| Hardware | Runs on Huawei and Cambricon chips |
| Licensing | Expected open-source under permissive license |

A month later, the third Chinese signal arrived via Alibaba.

Alibaba reported its Q3 FY2026 results on March 19. Net income was down 67% year-on-year. EPS missed consensus by 38.8%. Revenue missed estimates. On any traditional valuation metric, the results were bad.

The stock gained 19% in a single day, adding $50 billion in market cap. You pull up the Alibaba investor deck. Net income down 67%. Qwen at 58 million daily active users. You know which number the market decided mattered.

| Alibaba Q3 FY2026, March 19 | |
|---|---|
| EPS (adjusted) vs consensus | $1.01 vs $1.65 (-38.8%) |
| Net income YoY | -67% |
| Qwen daily active users | 58M (from 7M pre-Lunar New Year) |
| Standalone AI unit | Announced on this call |
| Stock on announcement | +19% (+$50B market cap) |
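The figures in the table can be cross-checked with a few lines of arithmetic. The sketch below recomputes the EPS miss, derives the pre-announcement market cap implied by a +19% move worth $50 billion, and expresses the Qwen user growth as a multiple. All inputs are the article's own numbers; the implied market cap is a derived estimate under the assumption that both the 19% and the $50 billion refer to the same single-day move, not a reported figure.

```python
def surprise_pct(actual: float, consensus: float) -> float:
    """Earnings surprise as a percentage of the consensus estimate."""
    return (actual - consensus) / consensus * 100.0


# EPS miss: $1.01 reported against $1.65 expected.
eps_surprise = surprise_pct(actual=1.01, consensus=1.65)

# If a 19% gain added $50B, the cap before the move was 50 / 0.19.
cap_gain_bn = 50.0
move_pct = 19.0
implied_cap_before_bn = cap_gain_bn / (move_pct / 100.0)
implied_cap_after_bn = implied_cap_before_bn + cap_gain_bn

# Qwen daily active users: 7M before Lunar New Year, 58M at the report.
dau_multiple = 58 / 7

print(f"EPS surprise: {eps_surprise:.1f}%")
print(f"Implied market cap before: ${implied_cap_before_bn:.0f}B")
print(f"Implied market cap after:  ${implied_cap_after_bn:.0f}B")
print(f"Qwen DAU multiple: {dau_multiple:.1f}x")
```

The recomputed miss matches the table's -38.8%, and the user base grew a little over eightfold, which is the number the market chose to price.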

The market had decided what Alibaba was: the infrastructure and model layer for AI adoption across Asia, not an e-commerce company with AI features. At 58 million daily active users, Qwen was not merely a Chinese alternative to Western models. It was the primary production option for workloads across the region, on latency, cost, and data residency.

The Western AI industry's answer to Chinese efficiency was consistent throughout Q1: announce more spending. The $110 billion OpenAI raise, the $95 billion Nvidia supply commitments, the $300 billion Oracle build. Whether the compute moat thesis holds against a cost-efficiency thesis will not be visible in Q1 numbers. It will be visible in 2027.

If you are building in Southeast Asia, the calculus has already shifted. At 58 million daily active users, the integration tooling around Qwen is real: fine-tuning services, enterprise support, deployment partners. For workloads where data residency requirements are strict (Singapore PDPA, Indonesia PDPL, Malaysia PDPA) and where latency to US infrastructure is a genuine constraint, the DeepSeek and Qwen options offer a different risk profile than betting entirely on Western APIs. GIC led Anthropic's round and then led Harvey's round six weeks later. Both are Western bets. Neither of those decisions prevents you from running Qwen for the workloads where regional infrastructure makes more sense. The sovereign capital is not choosing one model for everything. Neither should you.

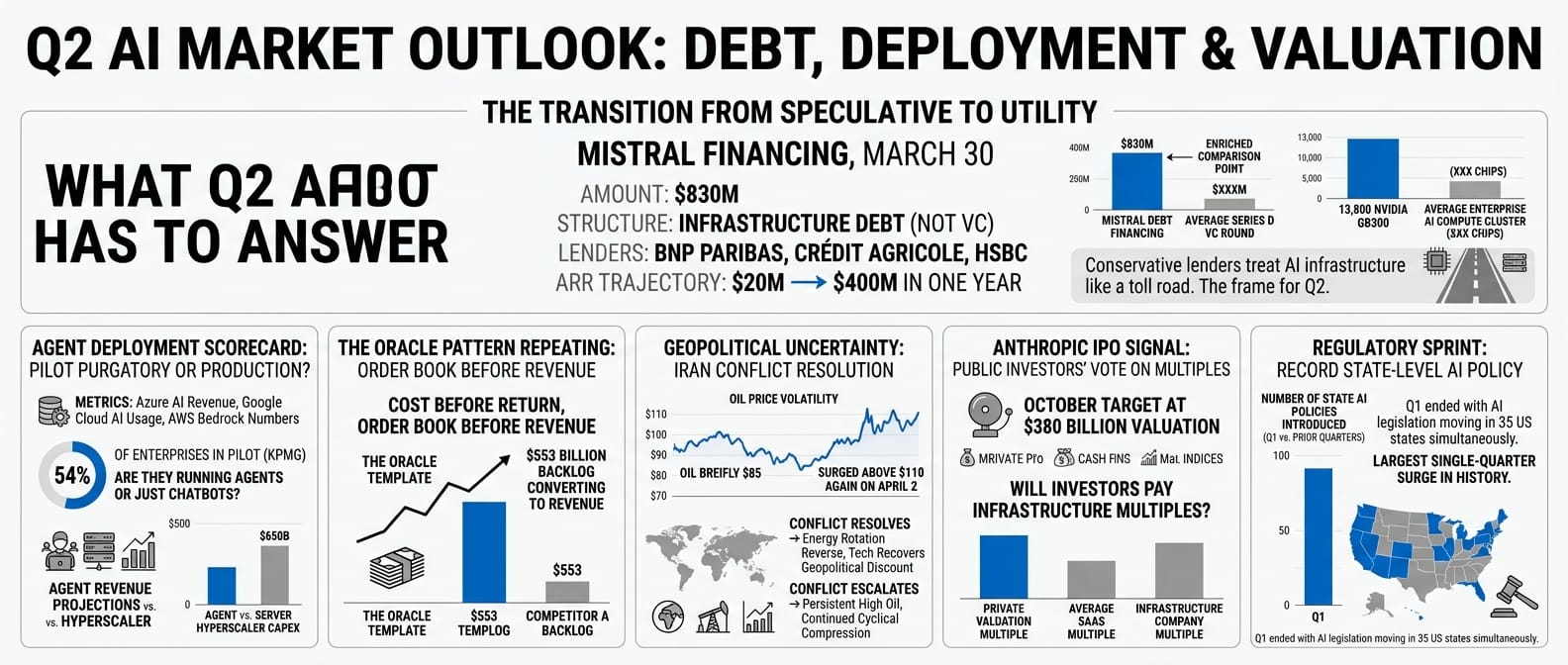

What Q2 has to answer

On March 30, the day before the quarter closed, Mistral announced $830 million in debt financing from BNP Paribas, Crédit Agricole, and HSBC. Not venture capital, not a Series D. Infrastructure debt. The same mechanism used to fund power plants, toll roads, and fibre networks. The banks were lending against structural demand for European-domiciled AI compute, driven by the EU AI Act, GDPR, and data residency requirements that many enterprise workloads cannot legally satisfy on US infrastructure. Mistral's ARR had grown from $20 million to $400 million in twelve months. Conservative lenders looked at that trajectory and called it a utility.

| Mistral debt financing, March 30 | |
|---|---|
| Amount | $830M |
| Structure | Infrastructure debt (not VC) |
| Lenders | BNP Paribas, Crédit Agricole, HSBC |
| Chips ordered | 13,800 Nvidia GB300 |
| ARR trajectory | $20M → $400M in one year |

When conservative lenders start treating AI infrastructure like a toll road, the transition is no longer speculative. That is the frame for Q2.

The seat-based model is being repriced. The outcome-based model is forming. Six variables will determine the direction.

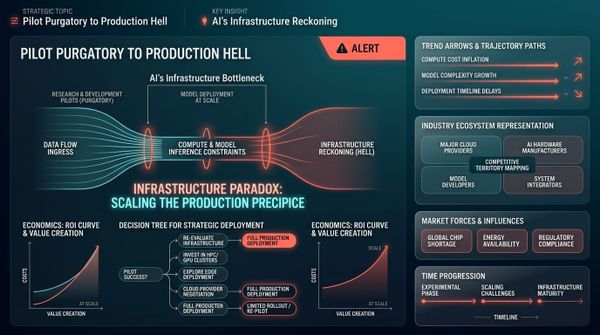

The agent deployment scorecard. Azure AI revenue, Google Cloud AI usage, and AWS Bedrock numbers will show whether the 54% KPMG deployment number is producing revenue at the infrastructure layer. The $650 billion in hyperscaler capex requires pilot purgatory to end. If enterprise agents are running in production and consuming tokens at scale, the Q1 infrastructure build looks conservative. If the 54% is mostly chatbots and document summarisers, the forward order books look premature.

The Oracle pattern repeating. Several companies made the same bet Oracle made: cost before return, order book before revenue. Q2 will show whether the return starts arriving. Oracle's $553 billion backlog converting to revenue is the template. If that backlog converts, order book becomes the metric, not current revenue.

The Iran resolution or escalation. Trump's late March statements about "very good and productive conversations" brought oil briefly back to $85, but it surged above $110 again on April 2 after a speech with "no off-ramp." If the conflict resolves, the energy rotation reverses and tech recovers some of the geopolitical discount. The structural repricing does not reverse with oil prices. But the cyclical compression does reverse with resolution.

The Anthropic IPO signal. The October target at a $380 billion valuation is the first real test of whether public investors will pay infrastructure multiples for a foundation model company. The same reclassification GIC made in February, now put to a market vote. If it prices well, private AI valuations hold. If it disappoints, the correction hits private markets fast.

The regulatory sprint. Q1 ended with AI legislation moving in 35 US states simultaneously. That is the largest single-quarter surge in state-level AI policy in history. A Perplexity AI class-action lawsuit was filed over data sharing practices with Meta and Google. These are early signals of a Q2 compliance environment that Q1 did not require. Which AI providers have defensible data handling, what state regulations require in customer-facing deployments, where enterprise liability sits when an agent makes an error: none of these were operational questions in Q1. They will be in Q2. The organisations that built agent governance infrastructure while deploying will have the advantage when the compliance decisions land.

And a sixth signal, quieter but telling: Micron and Lam Research will show whether the memory thesis is structural or cyclical. If they hold their Q1 gains through Q2, the demand is real and compounding. If they give them back, Q1 was rotation, not signal.

The two stories that defined Q1 (the structural repricing of the AI business model and the cyclical shock from war and tariffs) both hit tech stocks in the same direction. But they predict opposite outcomes. The cyclical shock was noise on top of a real signal. The signal is that the seat-based software model is being repriced, the outcome-based model is replacing it, and the infrastructure layer is being built to run both.

You are building in the middle of that transition. Q2 starts showing who is on which side of the line.

Q1 2026 reference: key events

| Event | Date | Market reaction |
|---|---|---|
| Microsoft Q2 FY2026, $81.3B revenue, record results | Jan 28 | MSFT -10% (-$357B); -23% for Q1 |
| Anthropic $30B Series G, GIC and QIA lead | Feb 12 | Private; $183B → $380B valuation |
| DeepSeek V4 (MODEL1) launch, Lunar New Year | Feb 17 | Chinese AI capability signal; open-weight |
| AI Scare Trade, Altruist "Hazel" launches | Feb 18 | SCHW -7%, RJF -8%, LPLA -8% |
| SaaSpocalypse peaks, NOW -20%, CRWD -9.4% | Feb 23 | ~$1T enterprise software cap erased |
| MatX $500M Series B, ex-Google TPU engineers | Feb 24 | Nvidia challenger thesis; Jane Street lead |
| Nvidia Q4 FY2026, $68B revenue, +73% YoY | Feb 25 | NVDA -8% Q1 (priced in) |
| OpenAI $110B raise, Amazon + Nvidia + SoftBank | Feb 27 | Rotation back into AI software |
| Middle East conflict escalates | Feb 28 | Oil begins run from $65 to $115 |
| Cursor reaches $2B ARR, enterprise shift | Feb 2026 | Fastest-growing SaaS company on record |
| Iran conflict, oil crosses $100/barrel | Mar 8 | Dow futures -1,000 pts; XLE inflows surge |
| Oracle Q3 FY2026, $553B forward order book | Mar 10 | Sharp recovery from -30% |
| Nvidia $2B into Nebius, AI cloud infra | Mar 11 | Nvidia builds alternative distribution |
| Alibaba, Qwen 58M DAU, standalone AI unit | Mar 19 | +19% in a single day (+$50B cap) |
| Reflection AI $2.5B, Nvidia-backed open models | Mar 25 | Nvidia hedges open vs. closed outcome |
| Harvey $200M at $11B, GIC + Sequoia | Mar 29 | Vertical AI moat in legal; GIC doubled down |
| Oil hits $115/barrel | Mar 30 | Energy ETFs +$12.3B inflows YTD |
| Sycamore $65M seed, agent OS for enterprise | Mar 30 | Middleware layer emerges as category |
| Mistral $830M debt from European banks | Mar 30 | Infrastructure thesis confirmed |
| KPMG Q1 AI Pulse, 54% actively deploying agents | Mar 31 | Pilot purgatory ending |
| Magnificent Seven combined | Q1 2026 | -$2 trillion market cap |
| S&P 500 | Q1 2026 | -4.3% |
| US growth stocks | Q1 2026 | -8.4% |
| US value stocks | Q1 2026 | +1.3% |
| Bloomberg Commodity Index | Q1 2026 | +24.4% |
| Brent crude in March alone | March 2026 | +63% (largest monthly gain in 40 years) |

Sources

Earnings and financials

- Microsoft Q2 FY2026 press release (January 28, 2026)

- Microsoft investor relations, Q2 FY2026

- Nvidia Q4 FY2026 earnings press release (February 25, 2026)

- Oracle Q3 FY2026 earnings (March 10, 2026)

- Alibaba Q3 FY2026 earnings (March 19, 2026)

Funding rounds

- Anthropic Series G — $30B at $380B (February 12, 2026)

- OpenAI $110B raise (February 27, 2026)

- MatX $500M Series B (February 24, 2026)

- Nvidia $2B Nebius investment (March 11, 2026)

- Reflection AI $2.5B round (March 25, 2026)

- Harvey $200M at $11B (March 29, 2026)

- Sycamore $65M seed (March 30, 2026)

- Mistral $830M debt financing (March 30, 2026)

Reports and surveys

- KPMG Q1 AI Pulse 2026 (March 31, 2026)

- Salesforce "State of AI Agents" 2026

- SaaStr — "Salesforce now has 3 pricing models for Agentforce" (2026)

- GetMonetizely — "The doomed evolution of Salesforce's Agentforce pricing"

Press coverage

- TechCrunch — "Cursor has reportedly surpassed $2B in annualized revenue" (March 2, 2026)

- Fortune — "Cursor CEO Michael Truell on Claude, Anthropic and venture capital" (March 21, 2026)

- Business Insider — "DeepSeek new AI training models: manifold-constrained" (January 2026)

- Markets Financial Content — "The AI Reckoning: why Microsoft's record profits couldn't prevent a $350 billion market rout" (April 2, 2026)

- Reuters — "US defense stocks see no Iran war lift" (April 2, 2026)

Market data

- J.P. Morgan Asset Management Monthly Market Review (April 2026)

- Goldman Sachs, JPMorgan, OECD inflation forecast revisions (March 2026)

- Yahoo Finance, Morningstar, FactSet