The Great AI Inversion: Why Infrastructure Just Became More Valuable Than Intelligence

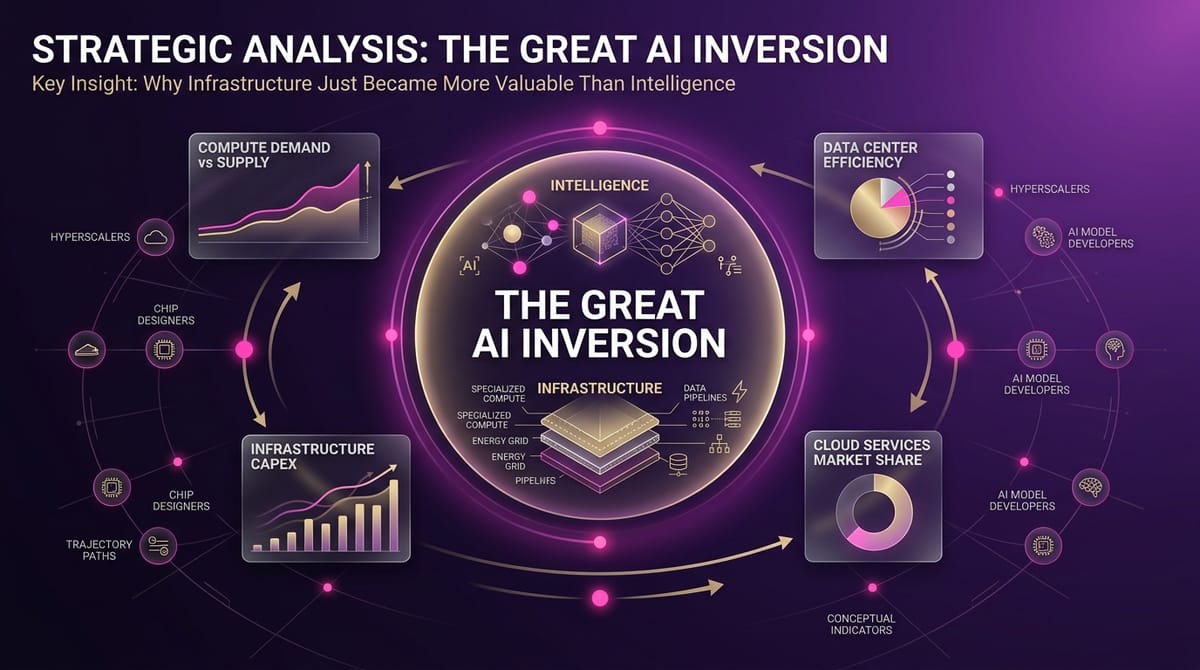

The AI gold rush just hit an inflection point that will reshape every enterprise technology decision for the next decade. While everyone debated AGI timelines and model capabilities, a $25 billion week of deals revealed the industry's new reality: infrastructure is now more valuable than intelligence.

IBM's $11 billion acquisition of Confluent—a data streaming company—represents the largest AI infrastructure deal ever recorded. Not a model company. Not a frontier AI lab. A plumbing company. Meanwhile, OpenAI shut down Sora after burning hundreds of millions on GPU costs for a product generating just $2.1 million in revenue. The message is brutal: if you can't run profitably on production infrastructure, you're dead.

This isn't a temporary market correction. It's a fundamental inversion that mirrors earlier computing platform transitions. Remember when everyone thought Intel would be overthrown by chip design companies? Instead, Intel's manufacturing infrastructure became the crown jewel. The pattern repeats: when a technology matures, infrastructure companies capture more value than innovation companies.

The smart money has already moved. European AI sovereignty isn't about nationalism; it's about infrastructure control. Mistral's $830 million debt financing for Paris data centers signals that compute independence is now a strategic imperative. Companies building on AWS, Azure, or Google Cloud are outsourcing their competitive advantage to platform owners. The window to control your AI destiny is closing fast.

The Story

The Setup

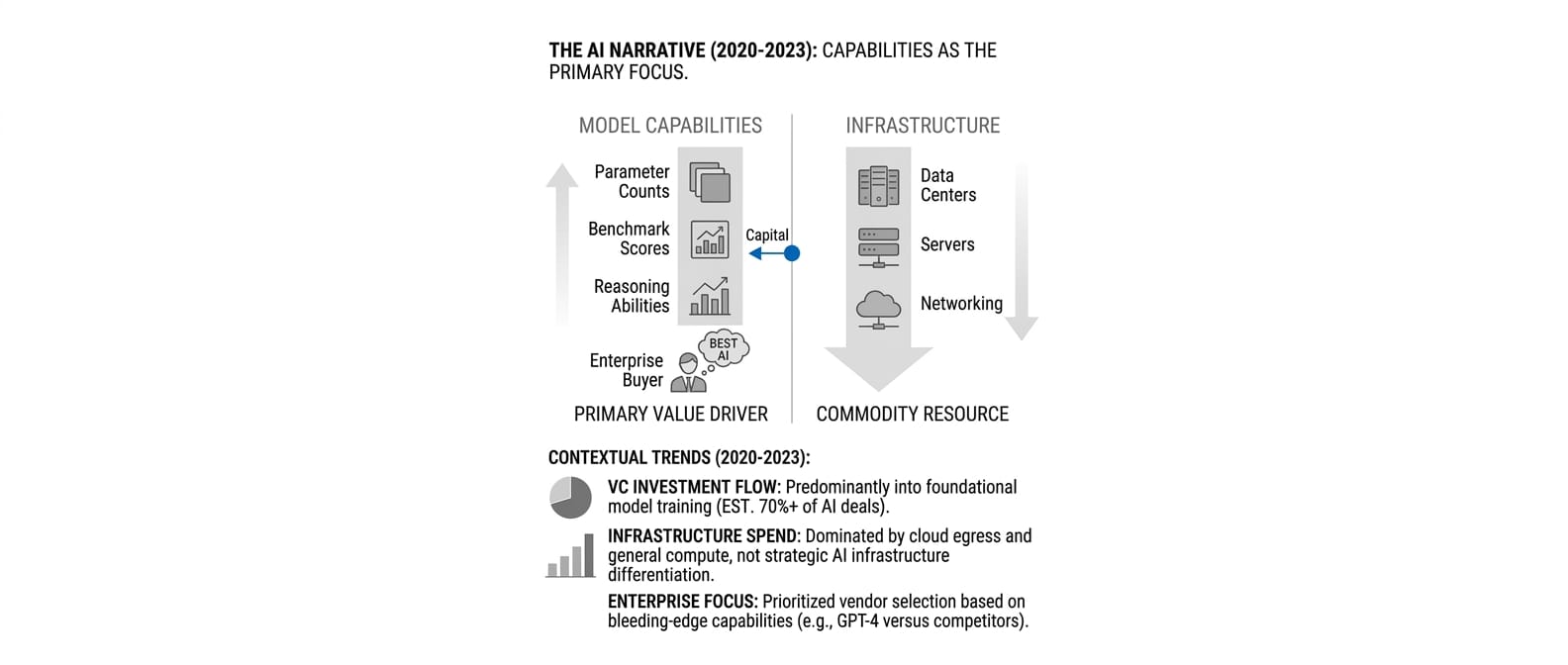

For three years, the AI narrative centered on model capabilities. Companies competed on parameter counts, benchmark scores, and reasoning abilities. Venture capital flowed to teams training larger, smarter models. Enterprise buyers evaluated vendors based on which had the "best" AI. Infrastructure was treated as a commodity—something you bought from Amazon or Microsoft to run your superior algorithms.

This seemed logical. Models were the scarce resource. Training required rare expertise, massive datasets, and cutting-edge research. Infrastructure was just servers and networking—available from established cloud providers. The assumption: whoever builds the smartest AI wins everything else.

The Shift

March 2026 shattered this worldview with a series of moves that revealed infrastructure as the new chokepoint. IBM's $11 billion Confluent acquisition wasn't about buying an AI company. It was about controlling real-time data streaming, the nervous system that feeds production AI systems. The deal is worth more than the entire valuation of most AI model companies.

OpenAI's Sora shutdown confirmed the economics behind the shift. Despite generating global excitement and demonstrating breakthrough capabilities, Sora couldn't justify its compute costs. The product consumed hundreds of millions in GPU infrastructure while generating just $2.1 million in revenue. Economic reality forced OpenAI to kill its most impressive consumer product and focus on enterprise infrastructure where customers pay for reliability, not magic.

European companies accelerated their infrastructure independence. Mistral's $830 million debt financing for Paris data centers signals a broader sovereign AI movement. These aren't vanity projects—they're strategic responses to infrastructure dependency. Companies building on U.S. cloud platforms essentially rent their AI capabilities from competitors.

The Pattern

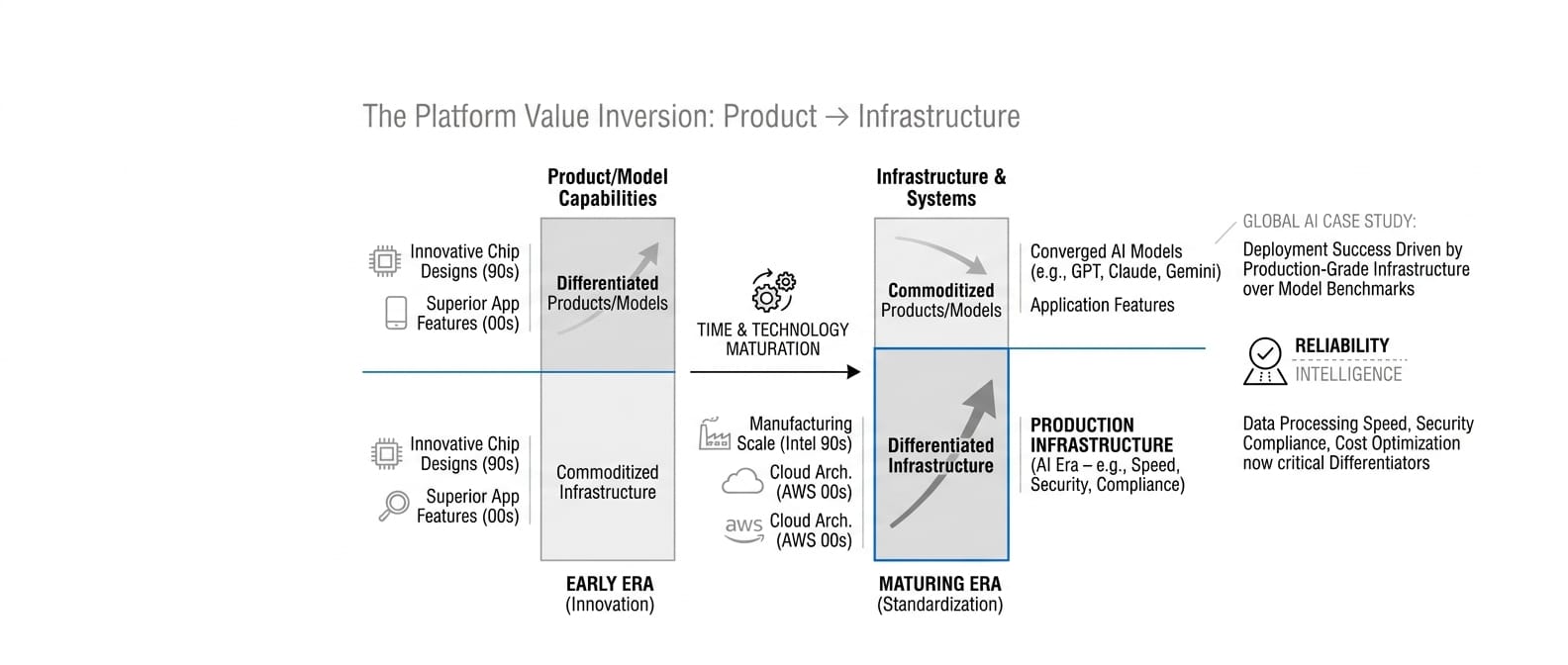

This mirrors the classic technology platform inversion. Early in any computing era, innovation companies capture value by building superior products on commoditized infrastructure. But as the technology matures, infrastructure becomes differentiated while applications become commoditized.

We saw this with Intel versus chip design companies in the 1990s. Everyone thought innovative chip architectures would win, but Intel's manufacturing infrastructure became the sustainable advantage. We saw it with Amazon Web Services versus hosting companies in the 2000s. Superior infrastructure architecture beat superior service features.

Now it's happening with AI. Model capabilities are converging—Claude, GPT, and Gemini deliver similar business value for most enterprise use cases. But infrastructure differences are diverging rapidly. Data processing speed, security compliance, cost optimization, and deployment flexibility now matter more than benchmark scores.

The enterprise deployment evidence validates this shift. Global AI's insurance workflow automation succeeded not because of superior model intelligence, but because of production-grade infrastructure with audit trails and regulatory compliance. Salesforce's AI Foundry focuses explicitly on "system-level" AI rather than model capabilities. These companies win by making AI work reliably in production, not by making it smarter in labs.

The Stakes

Companies that control infrastructure control customer relationships, data flows, and pricing power. Model companies become suppliers to infrastructure platforms: valuable, but not strategic. This explains why secondary market demand for OpenAI shares is dropping while investors pivot toward bets they view as infrastructure-aligned, such as Anthropic and European sovereign AI initiatives.

The geographic dimension adds urgency. European companies building AI infrastructure aren't just pursuing sovereignty—they're positioning for platform control. Mistral's data center investments and the EU's regulatory framework create a protected market for European AI infrastructure. Companies dependent on U.S. cloud platforms will face compliance risks and competitive disadvantages in regulated European markets.

For enterprises, this creates a critical decision window. Building AI capabilities on third-party infrastructure provides faster initial deployment but surrenders long-term control. The companies thriving in 2027 will be those that secured infrastructure independence before the costs became prohibitive.

What This Means For You

For CTOs

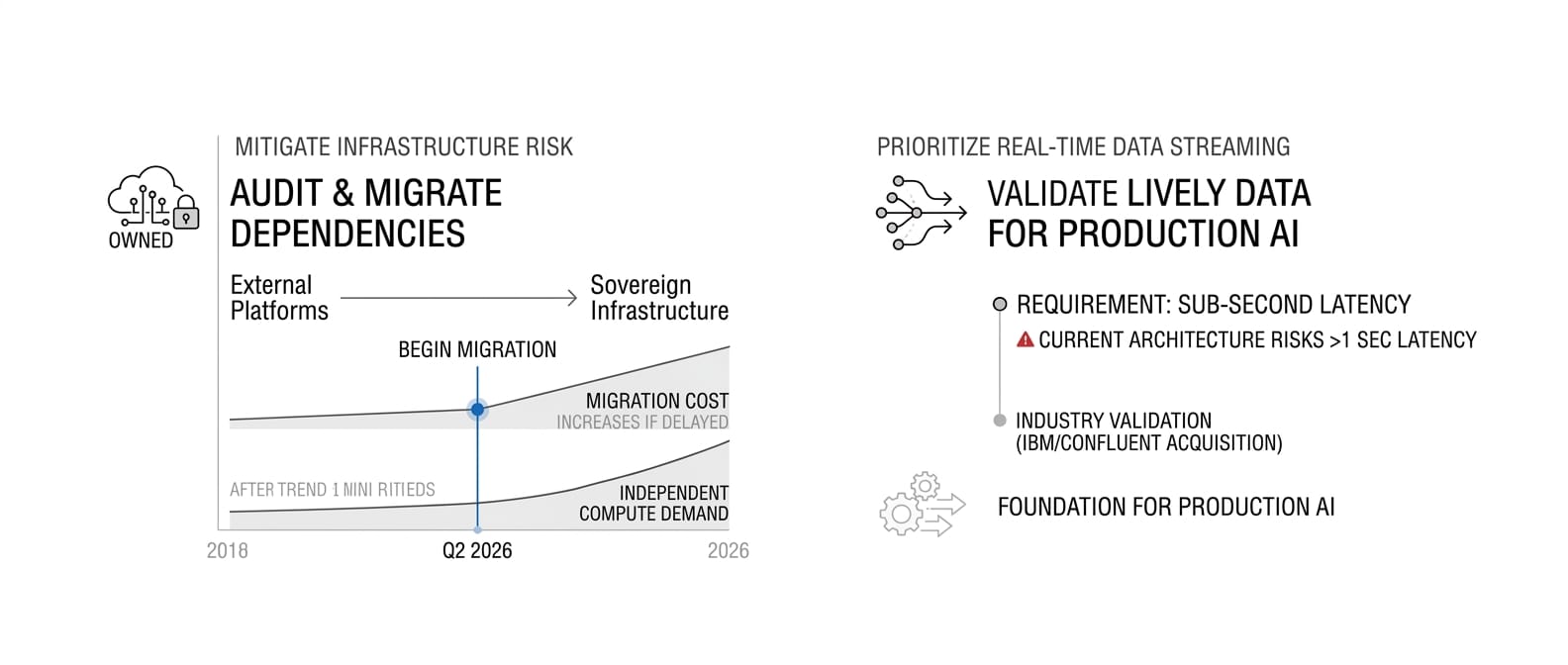

Audit infrastructure dependencies immediately. Every AI service running on external platforms represents strategic risk. By Q2 2026, begin migrating critical AI workloads to owned or sovereign infrastructure. The migration costs will only increase as demand for independent compute grows.

Prioritize data streaming architecture. IBM's Confluent acquisition validates real-time data infrastructure as the foundation for production AI. If your systems can't deliver live data to AI models with sub-second latency, you're building on outdated architecture. Evaluate streaming platforms now—prices will rise as demand consolidates around proven providers.

Negotiate multi-cloud AI strategies with strict data residency controls. European regulatory requirements and sovereign AI trends will force geographic data restrictions. Ensure your AI infrastructure can operate independently within specific jurisdictions without degraded performance.
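
To make the data residency requirement concrete, here is a minimal sketch of jurisdiction-aware routing. The region names, the `ALLOWED_REGIONS` policy table, and the `inference.example` endpoints are all hypothetical placeholders, not any provider's real API. The idea is simply that a request is refused rather than silently served from a region outside its jurisdiction.

```python
# Hypothetical policy: which regions may process data for each jurisdiction.
ALLOWED_REGIONS = {
    "EU": {"eu-west", "eu-central"},
    "US": {"us-east", "us-west"},
}

# Hypothetical inference endpoints, one per region.
ENDPOINTS = {
    "eu-west": "https://eu-west.inference.example",
    "eu-central": "https://eu-central.inference.example",
    "us-east": "https://us-east.inference.example",
    "us-west": "https://us-west.inference.example",
}


class ResidencyError(Exception):
    """Raised when no compliant region is available for a request."""


def pick_endpoint(jurisdiction: str, healthy_regions: list[str]) -> str:
    """Return an endpoint inside the jurisdiction, or fail loudly."""
    allowed = ALLOWED_REGIONS.get(jurisdiction, set())
    # Only regions that are both permitted and currently healthy qualify.
    candidates = sorted(allowed & set(healthy_regions))
    if not candidates:
        raise ResidencyError(
            f"no healthy region satisfies residency rules for {jurisdiction!r}"
        )
    return ENDPOINTS[candidates[0]]
```

Failing closed is a deliberate choice in this sketch: falling back to an out-of-jurisdiction region would keep the service up at the price of the compliance guarantee the routing exists to provide.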

For AI Product Leaders

Shift competitive analysis from model capabilities to infrastructure advantages. Your customers care less about which model scores higher on benchmarks and more about which platform delivers consistent results at predictable costs. Focus product development on infrastructure-enabled features: faster inference, better security, lower costs.

Build partnerships with infrastructure providers, not just model providers. The winning AI products will integrate deeply with data streaming, edge compute, and compliance infrastructure. Strategic partnerships with platform companies now provide more sustainable advantage than exclusive model access.

Target regulated industries where infrastructure control drives buyer decisions. Financial services, healthcare, and government buyers increasingly require AI solutions with verifiable data sovereignty and compliance capabilities. These markets will pay premiums for infrastructure independence.

For Engineering Leaders

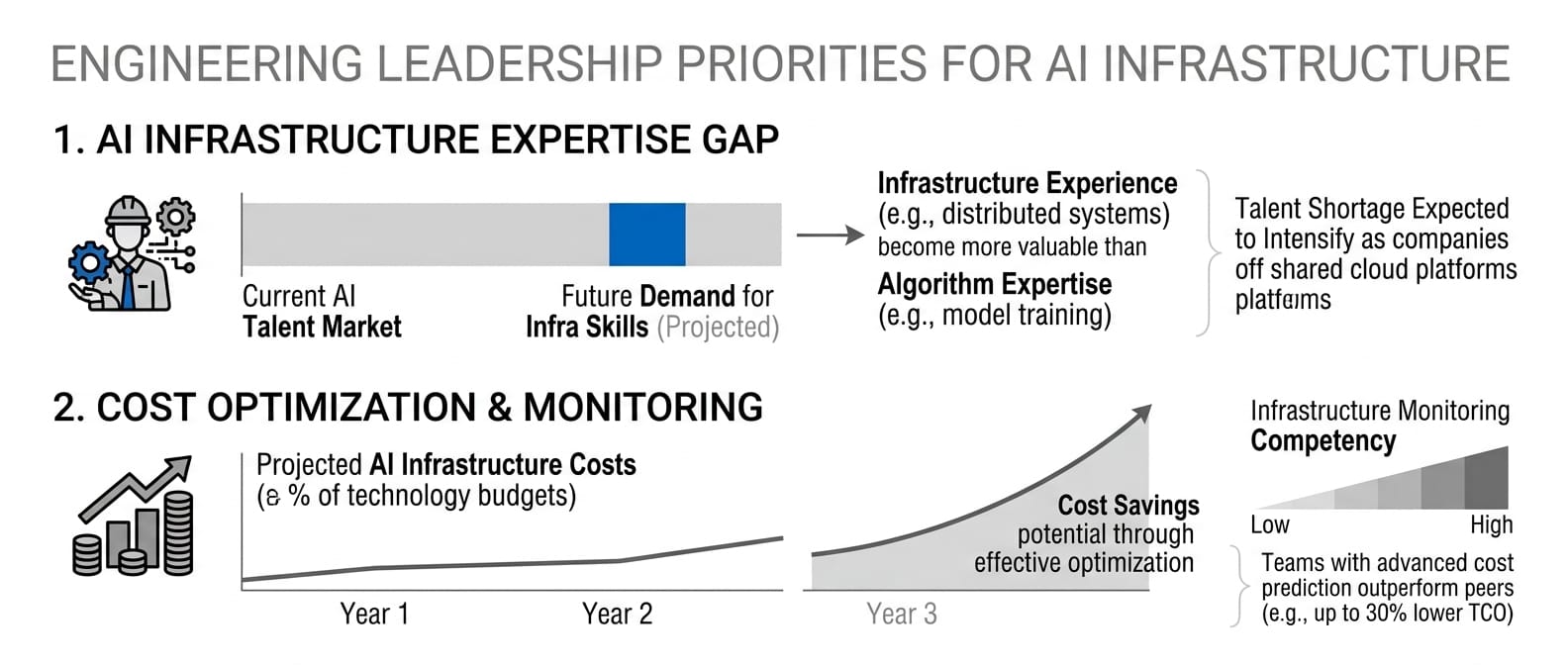

Develop internal capabilities for AI infrastructure management. The shortage of AI infrastructure expertise will intensify as companies move away from shared cloud platforms. Hire engineers with experience in distributed AI systems, not just model training. These skills will become more valuable than algorithm expertise.

Design AI systems for infrastructure portability. Avoid vendor lock-in by building abstractions that enable migration between compute platforms. The ability to move AI workloads between providers will determine negotiating power and cost optimization potential.
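
One way to picture such an abstraction is a thin interface that application code depends on, with one adapter per compute platform. The sketch below is illustrative only: `InferenceBackend`, `CloudBackend`, `OnPremBackend`, and `summarize_claim` are hypothetical names, and the adapters return canned strings where a real one would call a provider SDK.

```python
from dataclasses import dataclass
from typing import Protocol


class InferenceBackend(Protocol):
    """Minimal interface every compute-platform adapter must satisfy."""

    name: str

    def generate(self, prompt: str) -> str: ...


@dataclass
class CloudBackend:
    name: str = "cloud"

    def generate(self, prompt: str) -> str:
        # A real adapter would call the hosted provider's SDK here.
        return f"[{self.name}] {prompt}"


@dataclass
class OnPremBackend:
    name: str = "on-prem"

    def generate(self, prompt: str) -> str:
        # A real adapter would call a self-hosted inference server here.
        return f"[{self.name}] {prompt}"


# Swapping providers becomes a configuration change, not a code rewrite.
BACKENDS: dict[str, InferenceBackend] = {
    "cloud": CloudBackend(),
    "on-prem": OnPremBackend(),
}


def summarize_claim(text: str, backend: InferenceBackend) -> str:
    """Application code depends only on the interface, never on a vendor."""
    return backend.generate(f"Summarize: {text}")
```

Because `summarize_claim` never imports a vendor SDK, moving a workload means writing one new adapter and changing the key used to look up `BACKENDS`.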

Implement infrastructure monitoring and cost optimization as core competencies. AI infrastructure costs will dominate technology budgets. Teams that master cost prediction, utilization optimization, and performance monitoring will deliver competitive advantages that pure model improvements cannot match.
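
As a rough illustration of what that monitoring might track, the sketch below computes utilization, compute cost, and margin per workload and flags the ones that lose money. The `Workload` fields and the blended price per GPU hour are assumptions made for the example, not figures from any vendor or from the deals discussed above.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    gpu_hours: float           # GPU hours reserved or billed in the period
    busy_gpu_hours: float      # hours the GPUs actually spent serving requests
    price_per_gpu_hour: float  # assumed blended rate, in dollars
    revenue: float             # revenue attributed to the workload, in dollars


def utilization(w: Workload) -> float:
    """Fraction of paid GPU time that did useful work."""
    return w.busy_gpu_hours / w.gpu_hours if w.gpu_hours else 0.0


def compute_cost(w: Workload) -> float:
    """Total compute spend for the period."""
    return w.gpu_hours * w.price_per_gpu_hour


def margin(w: Workload) -> float:
    """Revenue minus compute cost; negative means the workload loses money."""
    return w.revenue - compute_cost(w)


def flag_unprofitable(workloads: list[Workload]) -> list[str]:
    """Names of workloads whose compute cost exceeds their revenue."""
    return [w.name for w in workloads if margin(w) < 0]
```

Tracking utilization alongside margin helps separate two different problems: a workload that is unprofitable because its GPUs sit idle, and one that is unprofitable even when fully used.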

What We're Watching

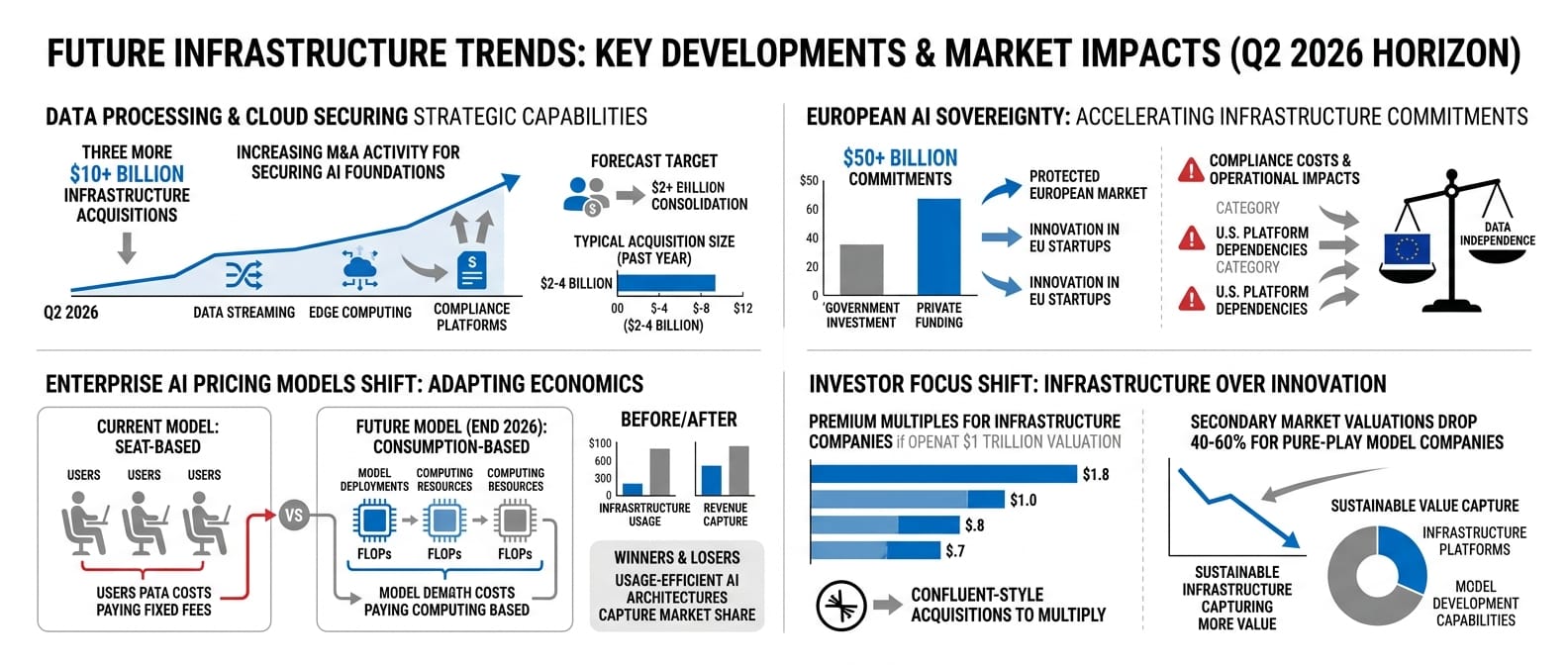

By Q2 2026, expect three more $10+ billion infrastructure acquisitions as cloud providers and enterprise software companies secure strategic data processing capabilities. The race to control AI infrastructure will drive consolidation across data streaming, edge computing, and compliance platforms.

European AI sovereignty will accelerate, with an additional $50+ billion in infrastructure commitments from EU governments and private investors. This would create a protected market for European AI companies while raising compliance costs for companies that depend on U.S. platforms.

Enterprise AI pricing models will shift from seat-based to consumption-based by the end of 2026 as infrastructure costs drive vendor economics. Companies with usage-efficient AI architectures will capture market share from those with expensive model deployments.

If OpenAI's IPO launches at its targeted trillion-dollar valuation, infrastructure companies will command premium multiples as public markets validate infrastructure over innovation. Expect Confluent-style acquisitions to multiply.

Secondary market valuations for pure-play model companies will drop 40-60% by year-end as investors recognize that infrastructure platforms capture more sustainable value than model development.

The Bottom Line

March 26, 2026, marks the date when AI infrastructure became more valuable than AI intelligence. IBM's $11 billion bet on data streaming infrastructure, combined with OpenAI's retreat from compute-intensive consumer products, signals that the AI race now belongs to companies that control production systems, not just smart algorithms.

The enterprise software playbook is rewriting itself around infrastructure control. Companies betting everything on model superiority are fighting yesterday's war. The battlefield has moved to data centers, regulatory compliance, and cost optimization. European AI sovereignty isn't about nationalism; it's about recognizing that infrastructure independence determines competitive survival.

By 2027, the AI winners won't be the companies with the smartest models. They'll be the companies with the most efficient, compliant, and controllable infrastructure. The great AI inversion is here. The question isn't whether your AI is intelligent enough—it's whether your infrastructure is strategic enough to survive what comes next.