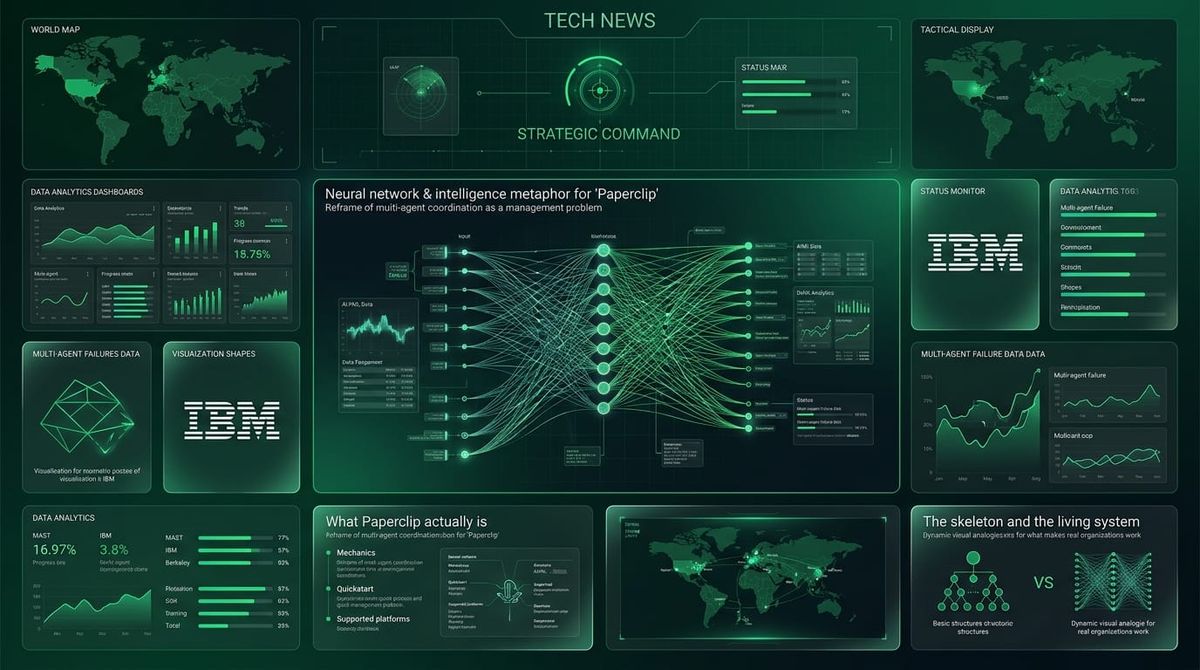

Paperclip Reframes Multi-Agent Coordination as a Management Problem

In this article:

- The reframe — Why the management-problem framing is the right move

- The problem is real and measurable — MAST, IBM, Berkeley, and what the failure data actually says

- What Paperclip actually is — The mechanics, the quickstart, what it runs on

- The skeleton and the living system — What Paperclip has vs. what makes real organisations work

- Too many degrees of freedom — Why flexibility is not the same as coordination

- Architecture choices worth noting — The non-obvious design decisions

- What it's not — Where the scope ends

- The unsolved problems — Supply chain risk and productivity theatre

- The sleeper feature — Why Clipmart matters more than the orchestration layer

- The concept has legs — The bet worth making regardless of who wins

The multi-agent coordination space has been trying to solve the same problem three different ways.

LangGraph made it a graph problem: define states, edges, and transitions. Clean for workflows where you can enumerate the paths. CrewAI made it a role problem: assign job titles, let agents collaborate by persona. n8n made it a workflow problem: nodes, triggers, pipelines. Visual and deterministic, but brittle at scale.

All three are technically correct abstractions. None of them is the abstraction that practitioners actually think in when they are running a team.

A project called Paperclip — open-source, MIT licensed, 55,000 GitHub stars since March 2026 — is trying a different framing entirely: multi-agent coordination is a management problem.

The name is deliberate. Nick Bostrom's paperclip maximizer is the canonical AI alignment thought experiment: an AI tasked with making paperclips converts all available matter into paperclips, including humans. The team building tooling for "zero-human companies" named themselves after that scenario. Either it's a joke or it's a statement. Probably both.

The concept deserves serious attention. The implementation is just getting started.

The reframe

Paperclip made it a management problem. Org charts, reporting lines, budgets, approval gates, goal ancestry, audit logs. These are primitives borrowed from how humans have solved coordination at scale for two centuries. Applying them to AI agents is not obvious. An org chart is a specific kind of graph, but an opinionated, semantically loaded one that comes with two centuries of accumulated practice built in: spans of control, delegation protocols, accountability structures, budget governance. You do not have to invent those from scratch because generations of management science already worked them out.

That conceptual move matters. It is the kind of reframe that tends to look trivial in retrospect and visionary in advance.

The README frames it cleanly:

If OpenClaw is an employee, Paperclip is the company.

That single sentence does more explanatory work than most agent framework READMEs do in a thousand words. It locates the product at a different layer of abstraction entirely.

The problem is real and measurable

The case for this abstraction is not theoretical. It is backed by production failure data.

The MAST study (IBM and UC Berkeley, March 2025, NeurIPS 2025) analysed 1,600+ annotated execution traces across seven popular multi-agent frameworks. The researchers identified 14 distinct failure modes in three categories: system design issues, inter-agent misalignment, and task verification failures. The annotators reached a Cohen's kappa of 0.88, which indicates strong inter-annotator agreement. This is a reliable taxonomy, not a rough one.

Separate analysis of production deployments found overall failure rates of 41% to 86.7% across studied traces, with coordination breakdowns accounting for roughly 37% of failures and specification failures for another 42%. The failures are hard to diagnose because the signal is often a subtly wrong output rather than an error. Systems that appear to be working are sometimes failing silently.

The mathematics of cascading failures makes this worse. Because every step has to succeed, per-step accuracy compounds. A ten-step agent chain with 90% per-step accuracy succeeds only 0.9¹⁰ ≈ 35% of the time, assuming each step fails independently. You probably recognise what this looks like in practice: pipelines that pass all unit tests and still produce nonsense in production.
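The 35% figure is plain compounding. The sketch below is a minimal TypeScript illustration that assumes steps fail independently. Real failures are often correlated, so treat the numbers as illustrative. The function names are also illustrative and do not come from any library.

```typescript
// Success probability of an n-step chain in which every step must succeed
// and each step independently succeeds with probability p.
function chainSuccess(p: number, steps: number): number {
  return Math.pow(p, steps);
}

// Inverse question: the per-step accuracy needed for the whole chain to
// reach a target success rate (solve p^steps = target for p).
function requiredStepAccuracy(target: number, steps: number): number {
  return Math.pow(target, 1 / steps);
}

for (const steps of [1, 5, 10, 20]) {
  const pct = (chainSuccess(0.9, steps) * 100).toFixed(1);
  console.log(`${steps} steps at 90% per step: ${pct}% end-to-end`);
}
```

At 90% per step, the loop prints 90.0%, 59.0%, 34.9% and 12.2% end-to-end. `requiredStepAccuracy(0.9, 10)` comes out at about 0.9895. In other words, keeping a ten-step chain at 90% end-to-end needs roughly 99% accuracy at every step.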

| Multi-agent failure data | Value |
|---|---|
| MAST execution traces analysed | 1,600+ across 7 frameworks |
| Failure modes identified | 14 (3 categories) |
| Overall production failure rate | 41–86.7% |
| Coordination breakdowns | ~37% of failures |
| Specification failures | ~42% of failures |
| 10-step chain (90% per-step accuracy): success rate | 35% |

Paperclip is one of the first frameworks to treat coordination as the primary design problem, not a side effect to manage around. That positioning is earned by the data.

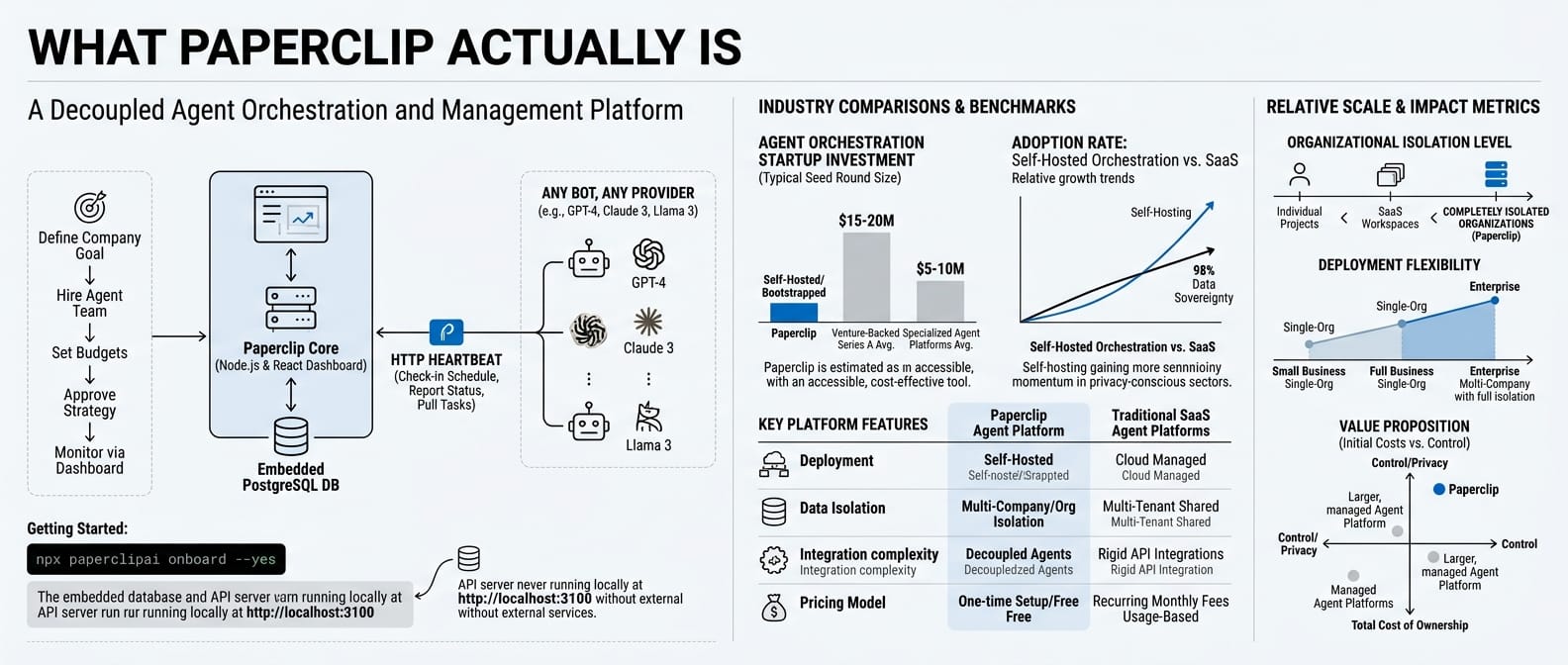

What Paperclip actually is

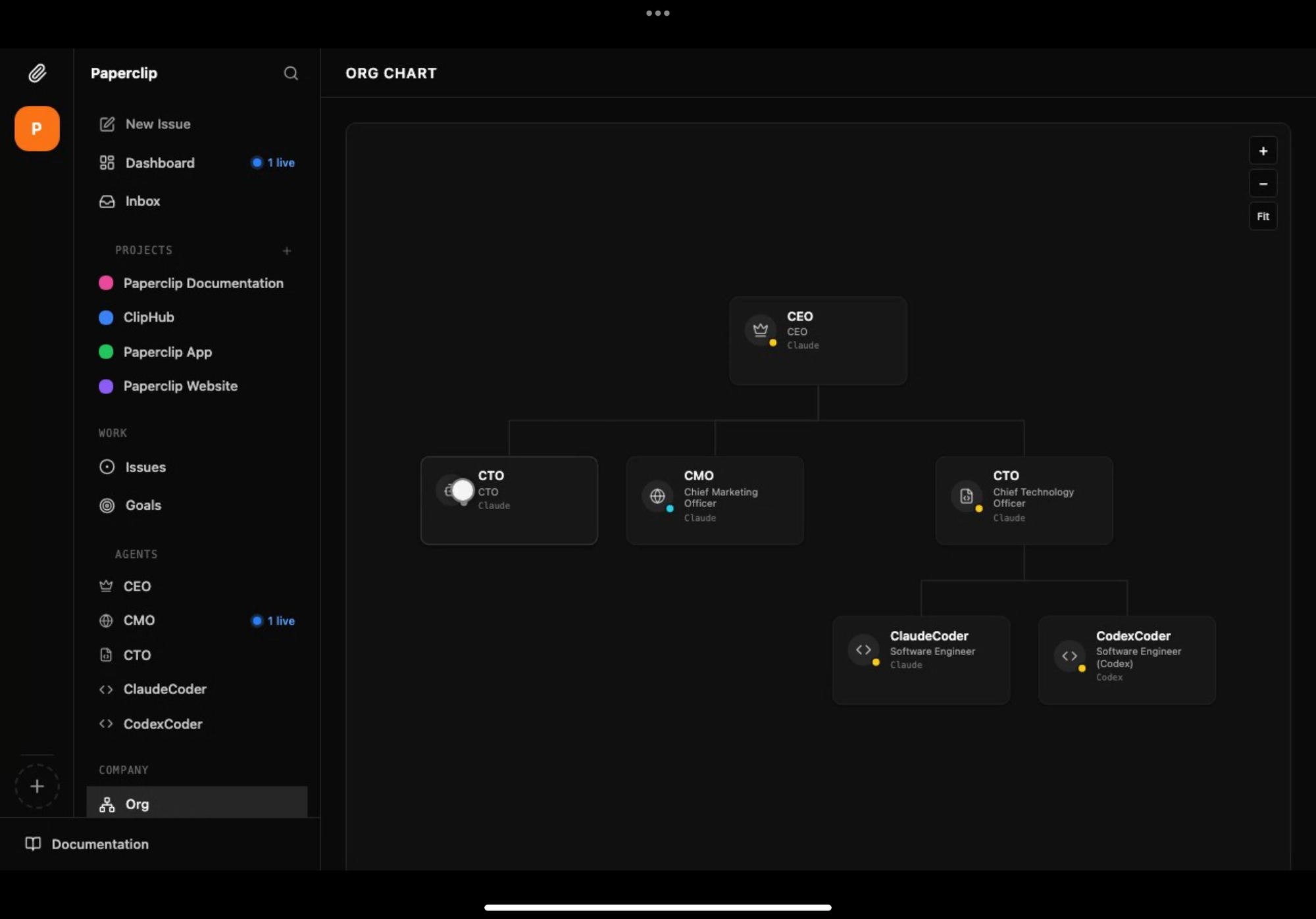

Paperclip is a Node.js server and a React dashboard. You bring agents; Paperclip provides the organisational layer they work inside.

```shell
npx paperclipai onboard --yes
```

An embedded PostgreSQL database spins up automatically. The API server runs at http://localhost:3100. No external services required to get started.

The setup: define a company goal, hire your agent team (any bot, any provider), set budgets, approve strategy, and monitor from the dashboard. Agents connect via HTTP-based heartbeat: they check in on a schedule, report status, and pull the next task. This keeps agents decoupled from the server and lets you run agents written in any language or runtime.
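This section does not describe the shape of Paperclip's heartbeat endpoints or payloads. The following is therefore only a sketch of the pull-based pattern described above. `Task`, `HeartbeatTransport`, `heartbeat`, `complete`, and `runHeartbeats` are hypothetical names. The transport is injected, so a real deployment could back it with HTTP calls to the local API server and a test could use an in-memory fake.

```typescript
// Hypothetical message shape; Paperclip's real heartbeat payloads may differ.
interface Task {
  id: string;
  title: string;
}

// Injected transport: could wrap HTTP calls to the API server in a real
// deployment, or an in-memory fake in a test.
interface HeartbeatTransport {
  // Check in with a status; receive the next task, or null if there is none.
  heartbeat(agentId: string, status: string): Promise<Task | null>;
  // Report the result of a finished task.
  complete(agentId: string, taskId: string, result: string): Promise<void>;
}

// Pull-based loop: the agent starts every exchange on its own schedule.
// Returns how many tasks were completed within maxBeats check-ins.
async function runHeartbeats(
  agentId: string,
  transport: HeartbeatTransport,
  work: (task: Task) => Promise<string>,
  maxBeats: number,
  intervalMs: number
): Promise<number> {
  let completed = 0;
  for (let beat = 0; beat < maxBeats; beat++) {
    const task = await transport.heartbeat(agentId, "ready");
    if (task !== null) {
      const result = await work(task);
      await transport.complete(agentId, task.id, result);
      completed++;
    }
    // Wait until the next scheduled check-in.
    await new Promise<void>((resolve) => setTimeout(() => resolve(), intervalMs));
  }
  return completed;
}
```

The agent initiates every exchange, so the server needs no knowledge of the agent's language or runtime. A production loop would also need retries and backoff, which this sketch omits.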

A single deployment can host multiple companies, with complete data isolation between organisations.
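How Paperclip enforces that isolation is not covered here. One common approach is to make the company ID a required argument of every data access, so that no unscoped query path exists. The minimal sketch below follows that approach; `TaskRecord` and `CompanyScopedTasks` are hypothetical names.

```typescript
// Hypothetical tenant-scoped store: every read and write requires a
// companyId, so one company's agents cannot see another company's tasks.
interface TaskRecord {
  id: string;
  companyId: string;
  title: string;
}

class CompanyScopedTasks {
  private rows: TaskRecord[] = [];

  add(companyId: string, id: string, title: string): void {
    this.rows.push({ id, companyId, title });
  }

  // Deliberately no unscoped "list everything" method.
  list(companyId: string): TaskRecord[] {
    return this.rows.filter((r) => r.companyId === companyId);
  }

  // A task ID from another company resolves to undefined, not to its row.
  get(companyId: string, id: string): TaskRecord | undefined {
    return this.rows.find((r) => r.companyId === companyId && r.id === id);
  }
}
```

In PostgreSQL the same rule can also be pushed into the database with row-level security policies. With those in place, a query that forgets the filter still cannot return another tenant's rows.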

The skeleton and the living system

Here is where the honest assessment has to diverge from the enthusiasm.

Paperclip has built the formal, visible parts of an organisation. The org chart. The reporting lines. The budget cap. The audit log. These are the skeleton. Real companies that coordinate at scale are not just skeletons. They have circulatory, nervous, and endocrine systems too. And most of those are not in Paperclip yet.

What is present and what is missing, mapped against organisational theory:

| Primitive | Status | What's missing |
|---|---|---|
| Structure and authority | Exists | Only fixed hierarchies. No matrix structures, cross-functional teams, or autonomous restructuring. |
| Goal alignment | Partial | Goal ancestry flows top-down. No mechanism for agents to propose new goals or surface opportunity signals upward. |
| Resource control | Exists | Budget caps only. No ROI tracking, performance-based budget reallocation, or capital allocation strategy. |
| Process standardisation | Partial | Skills are reusable. But no native SOPs for multi-actor long-running processes like procurement or incident response. |
| Monitoring | Exists | Audit logs are passive. No active KPIs, OKRs, or qualitative performance review to identify high/low performers. |
| Incentives | Missing | Agents have no mechanism to be rewarded for good work or penalised for poor work. They execute instructions. That is not how organisations stay efficient. |
| Conflict resolution | Minimal | Escalation only. No peer-level negotiation, mediation, or consensus protocols. |
| Informal governance | Missing | No constitutional principles, risk tolerances, or shared norms that shape agent behaviour beyond explicit instructions. |

The incentive gap deserves emphasis. The classic principal-agent problem in organisational economics is that agents optimise for what they are rewarded for, not for what the principal actually wants. In Paperclip's current model, agents have no reward signal at all. They are pure instruction-followers. That sidesteps the misalignment problem but also sidesteps any possibility of agents improving, adapting, or compounding value over time.

Real organisations do not just give people jobs and budgets. They give people reasons to care.

Too many degrees of freedom

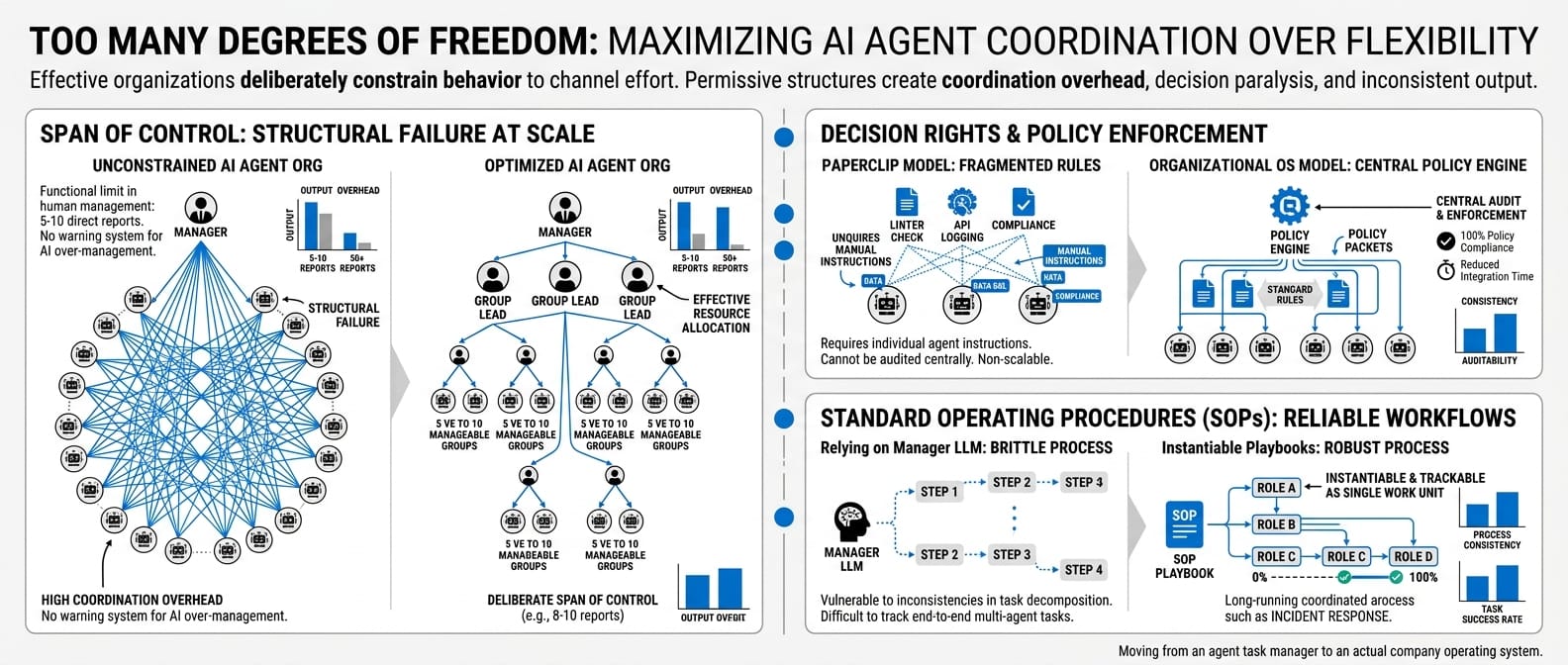

Maximum flexibility is not the same as maximum coordination. Effective organisations constrain behaviour deliberately to channel effort. Permissive structures produce coordination overhead, decision paralysis, and inconsistent output.

Three specific gaps in Paperclip's constraint model:

Span of control. In Paperclip, a manager agent can be assigned any number of subordinates. In human management practice, the functional limit is roughly five to ten direct reports before coordination overhead exceeds the output. A Paperclip org chart with a manager overseeing fifty agents does not just run slowly — it structurally fails the same way a human team with one manager and fifty reports fails. The system does not warn against this.
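The missing warning is easy to sketch. The hypothetical check below counts direct reports per manager. `AgentNode` and `spanOfControlWarnings` are invented names, and the default limit of 10 is simply the upper end of the rule of thumb above.

```typescript
// Hypothetical org-chart lint. Paperclip does not ship this check; it shows
// the kind of warning the text says is missing.
interface AgentNode {
  id: string;
  managerId: string | null; // null for the top of the chart
}

// Count direct reports per manager and flag anyone over the limit.
function spanOfControlWarnings(agents: AgentNode[], maxReports = 10): string[] {
  const reports = new Map<string, number>();
  for (const agent of agents) {
    if (agent.managerId !== null) {
      reports.set(agent.managerId, (reports.get(agent.managerId) ?? 0) + 1);
    }
  }
  const warnings: string[] = [];
  reports.forEach((count, managerId) => {
    if (count > maxReports) {
      warnings.push(`${managerId} has ${count} direct reports (limit ${maxReports})`);
    }
  });
  return warnings;
}
```

A natural place for a check like this would be the moment an agent is hired or re-parented. That way the org chart is validated before work is assigned, rather than after a sub-tree stalls.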

Decision rights and policy. The model is manager approves, subordinate executes. Complex work requires finer-grained assignment of who is responsible for what, who must be consulted, and who just needs to be informed. There is no central policy engine to enforce rules like "all generated code must pass this linter" or "all external API calls must be logged to the compliance store." Those rules have to be manually embedded in every relevant agent's instructions, which does not scale and cannot be audited centrally.
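A central policy engine of the kind described above could look roughly like the sketch below. Rules are declared once and evaluated against every agent action, instead of being copied into each agent's instructions. This is a hypothetical sketch: `Action`, `Policy`, `policies`, and `evaluate` are invented names, and the two rules mirror the examples in the text.

```typescript
// Hypothetical action types an agent might attempt.
type Action =
  | { kind: "commit_code"; agentId: string; lintPassed: boolean }
  | { kind: "external_api_call"; agentId: string; url: string; loggedToCompliance: boolean };

interface Policy {
  name: string;
  // Returns a violation message, or null if the action is allowed.
  check(action: Action): string | null;
}

// Rules live in one place, so they can be audited and changed centrally.
const policies: Policy[] = [
  {
    name: "code-must-pass-linter",
    check: (a) => (a.kind === "commit_code" && !a.lintPassed ? `${a.agentId}: code failed lint` : null),
  },
  {
    name: "external-calls-must-be-logged",
    check: (a) =>
      a.kind === "external_api_call" && !a.loggedToCompliance
        ? `${a.agentId}: unlogged call to ${a.url}`
        : null,
  },
];

// Evaluate one action against every rule and collect the violations.
function evaluate(action: Action, rules: Policy[] = policies): string[] {
  const violations: string[] = [];
  for (const rule of rules) {
    const message = rule.check(action);
    if (message !== null) violations.push(`[${rule.name}] ${message}`);
  }
  return violations;
}
```

Every violation carries its rule name. That means the questions "which rules were checked, and which failed" can be answered from one place, which is what per-agent instructions cannot offer.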

Standard operating procedures. Paperclip manages discrete tasks decomposed by a manager agent. Real companies run on complex, multi-actor, long-running processes: incident response, procurement, client onboarding. Relying on a manager LLM to perfectly decompose these every time is brittle. There is no native concept of a playbook that can be instantiated, assigned across multiple agents with different roles, and tracked as a single unit of work.
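A playbook primitive could be modelled as a template of role-bound steps with explicit dependencies. The sketch below is hypothetical: `Playbook`, `PlaybookRun`, `instantiate`, and `readySteps` are illustrative names, not Paperclip types.

```typescript
// Hypothetical playbook primitive: an SOP template whose steps are bound to
// roles, instantiated into one trackable run across several agents.
interface PlaybookStep {
  name: string;
  role: string;        // which role performs this step
  dependsOn: string[]; // steps that must finish first
}

interface Playbook {
  name: string;
  steps: PlaybookStep[];
}

interface PlaybookRun {
  playbook: string;
  assignments: Map<string, string>; // step name -> agent id
  done: Set<string>;                // completed step names
}

// Bind every step to a concrete agent via a role -> agent roster.
function instantiate(pb: Playbook, roster: Record<string, string>): PlaybookRun {
  const assignments = new Map<string, string>();
  for (const step of pb.steps) {
    const agentId = roster[step.role];
    if (!agentId) throw new Error(`no agent fills role "${step.role}"`);
    assignments.set(step.name, agentId);
  }
  return { playbook: pb.name, assignments, done: new Set<string>() };
}

// Steps that are not done yet and whose dependencies are all done.
function readySteps(pb: Playbook, run: PlaybookRun): string[] {
  return pb.steps
    .filter((s) => !run.done.has(s.name) && s.dependsOn.every((d) => run.done.has(d)))
    .map((s) => s.name);
}

// Example: a small incident-response playbook.
const incident: Playbook = {
  name: "incident-response",
  steps: [
    { name: "triage", role: "responder", dependsOn: [] },
    { name: "mitigate", role: "responder", dependsOn: ["triage"] },
    { name: "notify-customers", role: "comms", dependsOn: ["triage"] },
    { name: "postmortem", role: "lead", dependsOn: ["mitigate", "notify-customers"] },
  ],
};
```

The dependency structure is fixed in the template rather than re-derived by a manager agent on each run. As a result, the run can be tracked as one unit. `readySteps` returns only `triage` at first, then `mitigate` and `notify-customers` once triage is done.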

These are not minor feature gaps. They are the difference between an agent task manager and an actual company operating system.

Architecture choices worth noting

Within those constraints, several design decisions are genuinely non-obvious and worth examining.

Task checkout is atomic: two agents cannot pick up the same task. This is harder to get right than it sounds, and most ad-hoc multi-agent setups do not bother.
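The text does not say how Paperclip implements atomic checkout. A common way to get the property is to make "claim if unassigned" a single operation, rather than a read followed by a separate write. The minimal in-memory sketch below takes that approach; `BoardTask`, `TaskBoard`, and `checkout` are hypothetical names.

```typescript
interface BoardTask {
  id: string;
  assignee: string | null;
}

class TaskBoard {
  private tasks = new Map<string, BoardTask>();

  add(id: string): void {
    this.tasks.set(id, { id, assignee: null });
  }

  // Test-and-set in one synchronous step: JavaScript cannot run another
  // call between the check and the write, so at most one agent wins.
  // A database-backed version would express this as one conditional update,
  // e.g. UPDATE tasks SET assignee = $1 WHERE id = $2 AND assignee IS NULL,
  // and treat "0 rows updated" as a lost race.
  checkout(id: string, agentId: string): boolean {
    const task = this.tasks.get(id);
    if (!task || task.assignee !== null) return false;
    task.assignee = agentId;
    return true;
  }
}
```

The bug this avoids is check-then-act. Suppose an agent reads a task, sees it unassigned, and claims it in a separate request. A second agent can read the same state in between, and both proceed.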

Goal ancestry flows down. Every task carries a reference to the company goal it serves. Agents see the "why," not just the title. This is what separates a managed agent from a prompt with a job description.
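Goal ancestry amounts to following parent links from a task up to the company goal, then handing that chain to the agent. The sketch below uses assumed names: `WorkItem`, `ancestry`, and `contextFor` are hypothetical. The goal/project/task levels follow the hierarchy described in the text.

```typescript
// Hypothetical data model: each item points at the item it serves.
interface WorkItem {
  id: string;
  kind: "goal" | "project" | "task";
  title: string;
  parentId: string | null;
}

// Walk from a task up to the root goal and return the chain root-first,
// so the agent reads the "why" before the "what".
function ancestry(items: Map<string, WorkItem>, taskId: string): WorkItem[] {
  const chain: WorkItem[] = [];
  const seen = new Set<string>();
  let current = items.get(taskId);
  while (current) {
    if (seen.has(current.id)) throw new Error(`cycle at ${current.id}`);
    seen.add(current.id);
    chain.push(current);
    current = current.parentId === null ? undefined : items.get(current.parentId);
  }
  return chain.reverse();
}

// Render the chain as context lines for the agent's prompt.
function contextFor(items: Map<string, WorkItem>, taskId: string): string {
  return ancestry(items, taskId)
    .map((w) => `${w.kind}: ${w.title}`)
    .join("\n");
}
```

The chain is rebuilt from stored records rather than held in a live session. It can therefore be reconstructed on every heartbeat or after a restart.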

Skills are injected at runtime. Agents can learn Paperclip-specific workflows without retraining. You can update how the agent team operates without touching model weights.
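Runtime skill injection can be pictured as resolving named instruction documents when the agent runs and placing them in its context. The sketch below is hypothetical: `Skill`, `SkillRegistry`, `publish`, and `buildContext` are illustrative names, and the keep-the-newest-version rule is an assumption.

```typescript
interface Skill {
  name: string;
  version: number;
  instructions: string; // plain-language procedure the agent should follow
}

class SkillRegistry {
  private skills = new Map<string, Skill>();

  // Keep only the newest version of each skill. Publishing a newer one
  // changes what agents see on their next run, with no model retraining.
  publish(skill: Skill): void {
    const existing = this.skills.get(skill.name);
    if (!existing || skill.version > existing.version) {
      this.skills.set(skill.name, skill);
    }
  }

  // Resolve the requested skills at run time and place their
  // instructions ahead of the task prompt.
  buildContext(skillNames: string[], taskPrompt: string): string {
    const sections = skillNames.map((name) => {
      const skill = this.skills.get(name);
      if (!skill) throw new Error(`unknown skill: ${name}`);
      return `## Skill: ${skill.name} (v${skill.version})\n${skill.instructions}`;
    });
    return [...sections, taskPrompt].join("\n\n");
  }
}
```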

Persistent agent state. Agents resume the same task context across heartbeats. Sessions survive reboots because tasks are ticket-based and context flows from task through project to company goal.

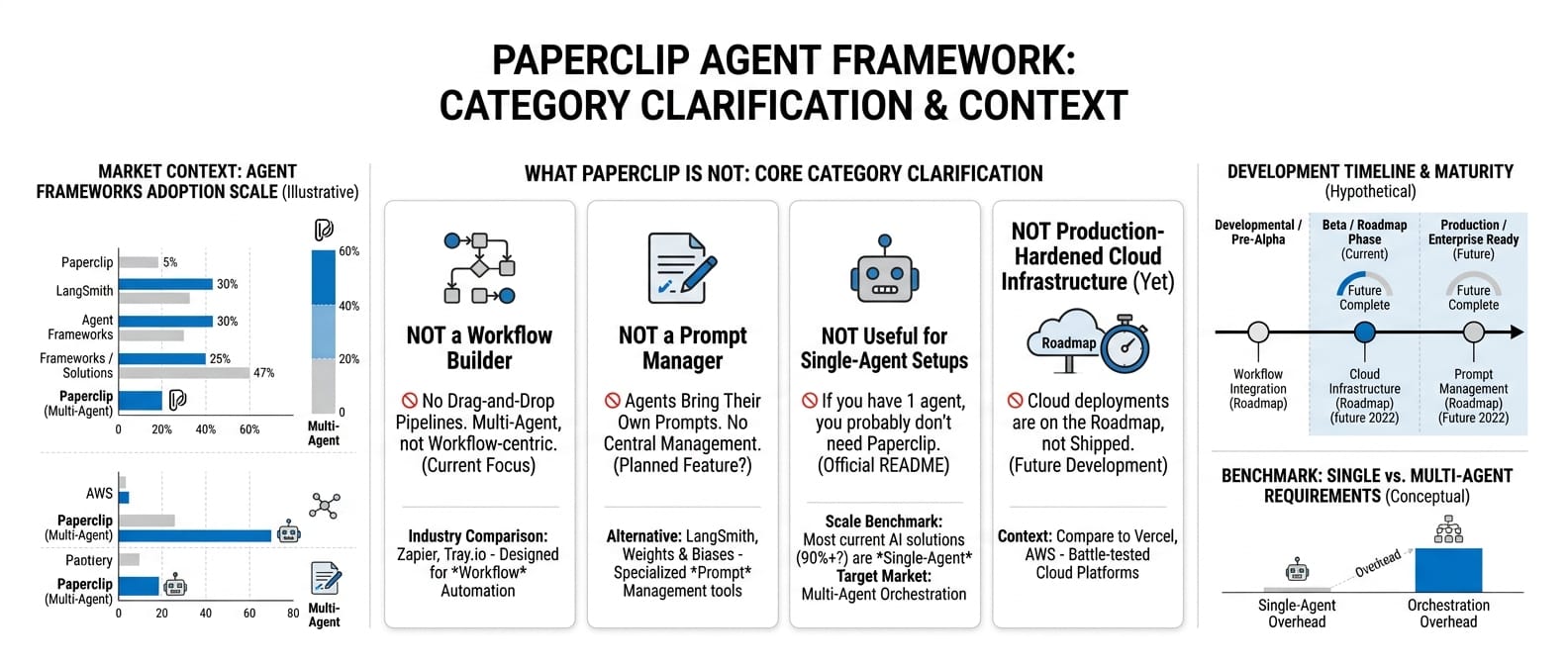

What it's not

Worth being specific because the category is noisy.

Not a workflow builder. No drag-and-drop pipelines. Not a prompt manager. Agents bring their own prompts. Not useful for a single-agent setup — the README says it directly: "If you have one agent, you probably don't need Paperclip. If you have twenty, you definitely do." Not production-hardened cloud infrastructure yet. Cloud deployments are on the roadmap, not shipped.

The unsolved problems

Supply chain risk. Third-party agent skills currently run with full filesystem and network access. That is npm's supply chain problem with a larger blast radius. A malicious skill package can do more damage inside an agentic workflow than a malicious npm package in a build pipeline. Tractable, but not solved yet.
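One tractable direction is a deny-by-default capability manifest that every third-party skill must declare. The sketch below is hypothetical: `SkillManifest`, `AccessRequest`, and `isAllowed` are invented names and do not correspond to any Paperclip or npm feature.

```typescript
// Hypothetical per-skill manifest: anything not listed is denied.
interface SkillManifest {
  name: string;
  fsRead: string[];   // directories the skill may read beneath
  netHosts: string[]; // exact hostnames the skill may contact
}

type AccessRequest =
  | { type: "fs.read"; path: string }
  | { type: "net"; host: string };

function isAllowed(manifest: SkillManifest, req: AccessRequest): boolean {
  if (req.type === "net") {
    return manifest.netHosts.some((h) => h === req.host);
  }
  // Reject traversal segments outright rather than trying to normalise them.
  if (req.path.split("/").some((seg) => seg === "..")) return false;
  return manifest.fsRead.some((dir) => {
    // Match on a directory boundary so "/data" does not also allow "/database".
    const base = dir.endsWith("/") ? dir : dir + "/";
    return req.path === dir || req.path.startsWith(base);
  });
}
```

A check like this only helps if every file and network access actually passes through it. In practice that means running skills in an OS-level sandbox or container and using a manifest like this one to configure it. Code running in the same process could otherwise call the filesystem directly and bypass the check.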

Productivity theatre. Agents hiring agents to produce decks about fake companies. The framework does not prevent real output; it does not guarantee it either. Org charts and budgets are necessary conditions for coordination. They are not sufficient conditions for useful work. You still have to define good goals, hire capable agents, and know what done looks like.

Digital bureaucracy. This one is less discussed but follows directly from the management model. Human organisations suffer from bottleneck managers, slow approval chains, information silos, and quadratically scaling communication overhead as the org grows. Paperclip inherits these failure modes along with the primitives it borrows. A manager agent that is slow, expensive, or offline is a single point of failure for its entire sub-tree. This is not a criticism of the approach — it is a known property of hierarchical structures. The question is whether the implementation develops the mitigation mechanisms that real organisations use.
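To put numbers on "quadratically scaling": if every agent may talk to every other agent, the number of pairwise channels among $n$ agents is

```latex
C(n) = \binom{n}{2} = \frac{n(n-1)}{2}, \qquad C(10) = 45, \qquad C(50) = 1225
```

A strict tree over the same agents has only $n - 1$ reporting edges: 9 for ten agents and 49 for fifty. The hierarchy achieves that reduction by routing communication through managers. This is also why a slow or offline manager stalls everything beneath it.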

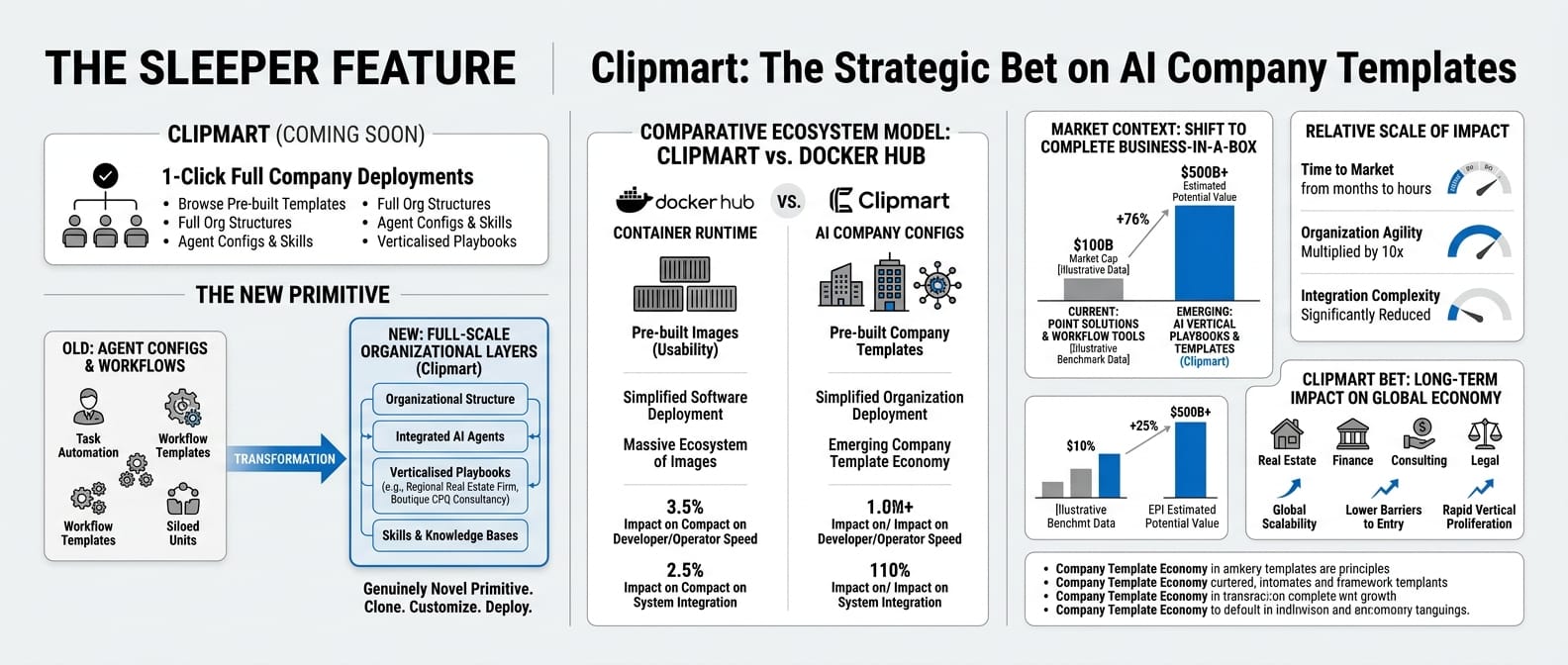

The sleeper feature

Everything above is about the orchestration layer. The most interesting item in the roadmap is marked "COMING SOON":

Clipmart — Download and run entire companies with one click. Browse pre-built company templates, full org structures, agent configs, and skills, and import them into your Paperclip instance in seconds.

If that marketplace matures, you get a company template economy. Not a workflow template. Not an agent config. A verticalised playbook for "run a boutique CPQ consultancy" or "operate a regional real estate research firm" that you clone, customise, and deploy.

The closest analogy is Docker Hub: the container runtime matters, but the ecosystem of pre-built images is what makes it usable. Clipmart is the bet that the same pattern holds for AI company configurations. That is a genuinely novel primitive, and it does not exist anywhere else in the space today.

The concept has legs

For teams building in the vertical AI space, Paperclip is not competition. It is the layer above. Paperclip is the organisational control plane. Vertical agent skills are the execution primitives. A mature stack plausibly looks like Paperclip at the org layer, specialised vertical skills as workers, Claude Code or OpenClaw as the runtime.

The honest assessment is that Paperclip is early: the concept is right, the implementation is incomplete, and the hardest parts — incentive structures, dynamic reorganisation, policy governance, span-of-control constraints — are not built yet. What makes the project worth watching is that it is the first tool to ask the right question. The multi-agent coordination problem is a management problem. Decades of organisational theory have answers to it. Applying those answers to agent systems is non-trivial work, but it is tractable work with a known direction.

Whether Paperclip specifically builds those layers, or whether it inspires a more complete successor, is a separate question. The concept has legs regardless.

Start with `npx paperclipai onboard --yes`. The docs cover the first setup. The Discord is the fastest read on where the community is taking it.

Sources

- paperclipai/paperclip — GitHub repo (MIT, TypeScript, April 2026)

- MAST: Why Do Multi-Agent LLM Systems Fail? — IBM and UC Berkeley, March 2025. NeurIPS 2025. 1,600+ annotated traces, 14 failure modes.

- Multi-agent production failure analysis — coordination and specification failure breakdowns

- Rethinking AI Agents: A Principal-Agent Perspective — California Management Review, July 2025

- Gartner: 40%+ of agentic AI projects projected to be cancelled by end of 2027